Inside Modern Speech to Text Technology and Its Enterprise Impact

Kaushal Choudhary

Learn how modern speech-to-text technology works, its real limitations, and how enterprises evaluate accuracy, latency, and deployment for production use.

Every live call, meeting, or support interaction creates decisions in real time, yet most teams still rely on memory or delayed notes to act on them. When conversations move faster than systems can capture them, context gets lost, agents repeat questions, and downstream workflows slow to a crawl. That gap is exactly where speech-to-text technology now sits inside modern operations.

As voice becomes the primary interface for sales, support, and compliance-heavy workflows, expectations around accuracy and latency have tightened. The booming Speech-to-Text Service Platforms market is projected to reach $70 billion by 2033, reflecting how central speech-to-text technology has become to scaling real-time execution without adding headcount.

In this guide, we break down how enterprise-grade STT actually works, where it fails, and how to evaluate it for production use.

Key Takeaways

Speech-to-Text Is Infrastructure: Modern speech-to-text technology underpins real-time decision systems, not just transcription, directly impacting agent workflows, automation reliability, and downstream execution speed.

Architecture Drives Outcomes: Streaming-first, end-to-end neural STT consistently outperforms legacy pipelines in live conversations, especially under interruptions, overlapping speech, and telephony-grade audio.

Accuracy Depends on Context: Low Word Error Rate alone is insufficient; domain adaptation, numeric handling, accent robustness, and confidence scoring determine production-grade usability.

Latency Is a Business Constraint: Sub-100ms time-to-first-transcript is critical for conversational systems, allowing agent assist, real-time routing, and interruption-aware voice interactions.

Deployment Choice Matters: Cloud, on-prem, or hybrid STT deployments directly affect data control, compliance posture, latency guarantees, and long-term operating cost at scale.

What Is Speech-to-Text Technology?

Speech-to-text technology converts live or recorded audio into machine-readable text using neural speech recognition systems optimized for real conversational inputs, not scripted audio.

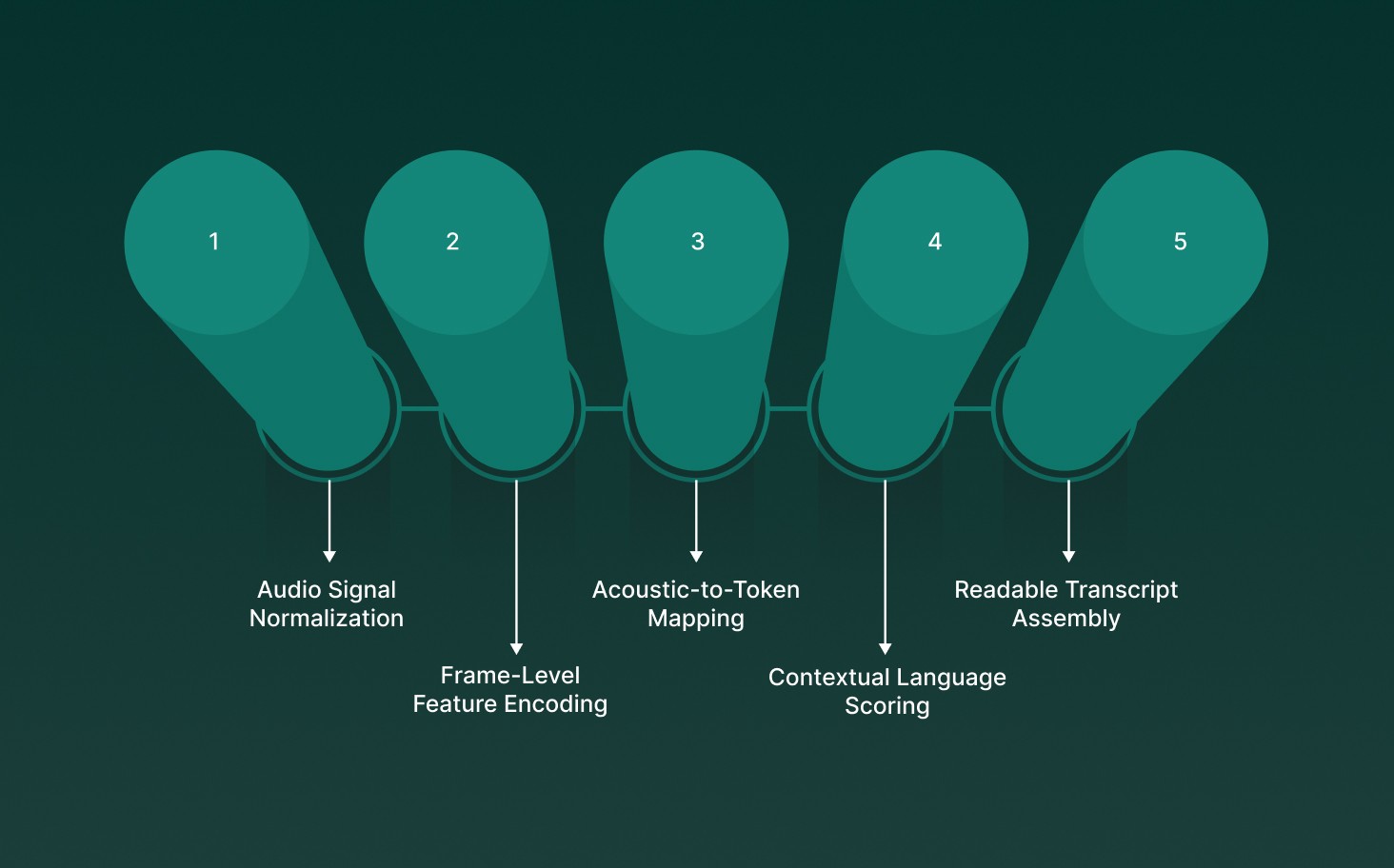

Speech-to-text technology operates through tightly coupled technical layers that turn raw sound into usable language data:

Audio Signal Normalization: Incoming audio is sampled, denoised, and amplitude-leveled to stabilize unpredictable microphone and phone-call inputs.

Frame-Level Feature Encoding: Short, overlapping audio frames (typically tens of milliseconds each) are converted into spectral features optimized for phoneme discrimination, not raw waveform matching.

Acoustic-to-Token Mapping: Neural acoustic models translate sound patterns into subword tokens instead of full words, so rare or unseen words can be spelled out from smaller units rather than forced onto the nearest entry in a fixed vocabulary.

Contextual Language Scoring: Probabilistic language models resolve ambiguity using surrounding phrases, numbers, and conversational context.

Readable Transcript Assembly: Decoding layers apply punctuation, casing, and timing metadata for downstream use in agents, analytics, or workflows.

At its core, speech-to-text technology serves as the foundational layer that allows voice-driven systems to reason, respond, and act on spoken input in real time.
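To make the first two layers concrete, here is a minimal sketch of amplitude leveling and frame-level encoding. It is an illustration under stated assumptions, not how any particular engine is implemented: the function names are hypothetical, the 25 ms window and 10 ms hop are common defaults rather than values from this article, and per-frame log energy stands in for the richer spectral features (such as log-mel filterbanks) that production systems typically use.

```python
import math

def peak_normalize(samples, target_peak=0.9):
    """Scale the signal so its loudest sample sits at target_peak."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)
    scale = target_peak / peak
    return [s * scale for s in samples]

def frame_signal(samples, sample_rate, frame_ms=25, hop_ms=10):
    """Split a mono signal into overlapping fixed-length frames."""
    frame_len = int(sample_rate * frame_ms / 1000)
    hop_len = int(sample_rate * hop_ms / 1000)
    last_start = len(samples) - frame_len
    return [samples[start:start + frame_len]
            for start in range(0, last_start + 1, hop_len)]

def log_energy(frame, floor=1e-10):
    """One scalar feature per frame; a stand-in for real spectral features."""
    energy = sum(s * s for s in frame) / len(frame)
    return math.log(max(energy, floor))

# One second of a quiet 440 Hz tone at a telephony-style 8 kHz sample rate.
sample_rate = 8000
tone = [0.3 * math.sin(2 * math.pi * 440 * n / sample_rate)
        for n in range(sample_rate)]

normalized = peak_normalize(tone)
frames = frame_signal(normalized, sample_rate)
features = [log_energy(frame) for frame in frames]

print(len(frames), "frames of", len(frames[0]), "samples each")
```

At 8 kHz, a 25 ms window holds 200 samples and a 10 ms hop advances 80 samples, which is why consecutive frames overlap heavily.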

If you are building voice experiences that need to listen and respond in real time, explore how Speech-to-Text and Text-to-Speech Technology: Making Interactions Smarter work together to power natural, production-ready conversations.

Types of Speech-to-Text Systems

Speech-to-text systems differ based on how audio is modeled, how speakers are handled, and how quickly text is produced, directly affecting accuracy, latency, and production reliability.

Architectural Speech-to-Text Systems

Architectural systems define how audio signals are converted into text, determining contextual awareness, error propagation, and how well models perform under real conversational conditions.

Acoustic–Phonetic Pipelines: Audio is mapped to phonemes using HMMs and DNNs, then assembled into words, creating brittle pipelines sensitive to noise and compounding errors.

End-to-End Neural Models: Single neural networks learn direct audio-to-text mappings, reducing handoffs between stages and improving contextual consistency across long, natural conversations.

Specialized ASR Architectures: CTC, transducer, and encoder–decoder models optimize alignment, streaming behavior, and decoding speed depending on real-time or batch transcription needs.
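As a small illustration of how CTC-style models handle alignment, the sketch below shows greedy CTC decoding: pick the best symbol per frame, merge consecutive repeats, then drop the blank symbol. The label set, the `scores_for` demo helper, and the one-hot toy scores are assumptions made for the example; real decoders usually work on log-probabilities and often use beam search instead of the greedy path.

```python
BLANK = "_"

def collapse_path(best_path):
    """Merge consecutive repeats, then drop blanks (the CTC collapse rule)."""
    decoded = []
    previous = None
    for symbol in best_path:
        if symbol != previous and symbol != BLANK:
            decoded.append(symbol)
        previous = symbol
    return "".join(decoded)

def ctc_greedy_decode(frame_scores, labels):
    """Take the highest-scoring label in each frame, then collapse the path."""
    best_path = [labels[scores.index(max(scores))] for scores in frame_scores]
    return collapse_path(best_path)

def scores_for(path, labels):
    """Demo helper: build toy one-hot frame scores for a given symbol path."""
    return [[1.0 if label == symbol else 0.0 for label in labels]
            for symbol in path]

labels = [BLANK, "c", "a", "t", "h", "e", "l", "o"]

print(ctc_greedy_decode(scores_for("cc_aa_tt", labels), labels))
print(ctc_greedy_decode(scores_for("hheel_llo", labels), labels))
```

The blank is what lets the model emit a genuine double letter: in the second path, the blank between the two runs of `l` keeps them from being merged into one.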

Speaker Recognition-Based Systems

Speaker recognition design determines whether a system adapts to one voice or reliably handles unpredictable callers across phones, headsets, and varying acoustic environments.

Speaker-Dependent Systems: Models are tuned to a single voice profile, improving dictation accuracy but failing when exposed to unfamiliar accents or changing microphones.

Speaker-Independent Systems: Models generalize across speakers without prior training, making them suitable for phone speech recognition and high-volume customer interactions.

Adaptive Speaker Modeling: Runtime speaker embeddings adjust decoding dynamically, improving accuracy mid-conversation without explicit user enrollment or retraining.

Processing and Latency-Based Systems

Processing mode controls how quickly text appears, which directly impacts conversational flow, interruption handling, and usability in live voice-driven applications.

Streaming Speech-to-Text: Audio is decoded incrementally, emitting partial transcripts while speech continues, allowing real-time agent assist and live conversational AI.

Batch Speech-to-Text: Full audio files are processed after capture, which allows deeper context analysis but makes this mode unsuitable for interactive or latency-sensitive use cases.

Synchronous Short-Form Recognition: Very short audio segments are decoded immediately, optimized for commands, wake words, and broadcast captioning scenarios.
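The practical difference between streaming and batch starts with how audio is handed to the recognizer. The sketch below works through the chunk-size arithmetic for streaming raw PCM; the 20 ms chunk duration, the 16-bit mono format, and the function names are assumptions for illustration, not requirements of any specific service.

```python
def chunk_size_bytes(sample_rate_hz, chunk_ms, bytes_per_sample=2, channels=1):
    """Bytes of raw PCM needed to hold chunk_ms of audio."""
    samples_per_chunk = sample_rate_hz * chunk_ms // 1000
    return samples_per_chunk * bytes_per_sample * channels

def iter_chunks(pcm, size):
    """Yield fixed-size slices; the final slice may be shorter."""
    for offset in range(0, len(pcm), size):
        yield pcm[offset:offset + size]

# One second of 16-bit mono silence at 8 kHz, standing in for call audio.
pcm = bytes(8000 * 2)

size = chunk_size_bytes(8000, 20)
chunks = list(iter_chunks(pcm, size))

# Streaming: each chunk would be sent as soon as it is captured.
# Batch: the whole `pcm` buffer would be submitted after the call ends.
print(size, "bytes per chunk,", len(chunks), "chunks per second")
```

Smaller chunks lower the delay before the first partial transcript can appear, at the cost of more messages per second of audio.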

Delivery and Deployment Models

Deployment models determine operational control, scalability, compliance posture, and how tightly speech-to-text integrates into existing enterprise systems.

Cloud Speech-to-Text APIs: Managed APIs provide fast onboarding, elastic scaling, and advanced features but introduce data residency and latency trade-offs.

Self-Hosted and Open Models: Teams run models on owned infrastructure, gaining control over data and tuning at the cost of operational complexity.

Embedded Device Speech Recognition: Speech recognition runs directly on devices, minimizing latency but constrained by compute, memory, and update flexibility.

Speech-to-text systems are not interchangeable; architecture, speaker handling, and processing mode must align with latency targets, accuracy requirements, and real production workloads.

Enterprise Use Cases of Speech-to-Text Technology

Enterprise speech-to-text technology converts high-volume voice interactions into structured data that drives automation, compliance, and real-time decision support across critical business workflows.

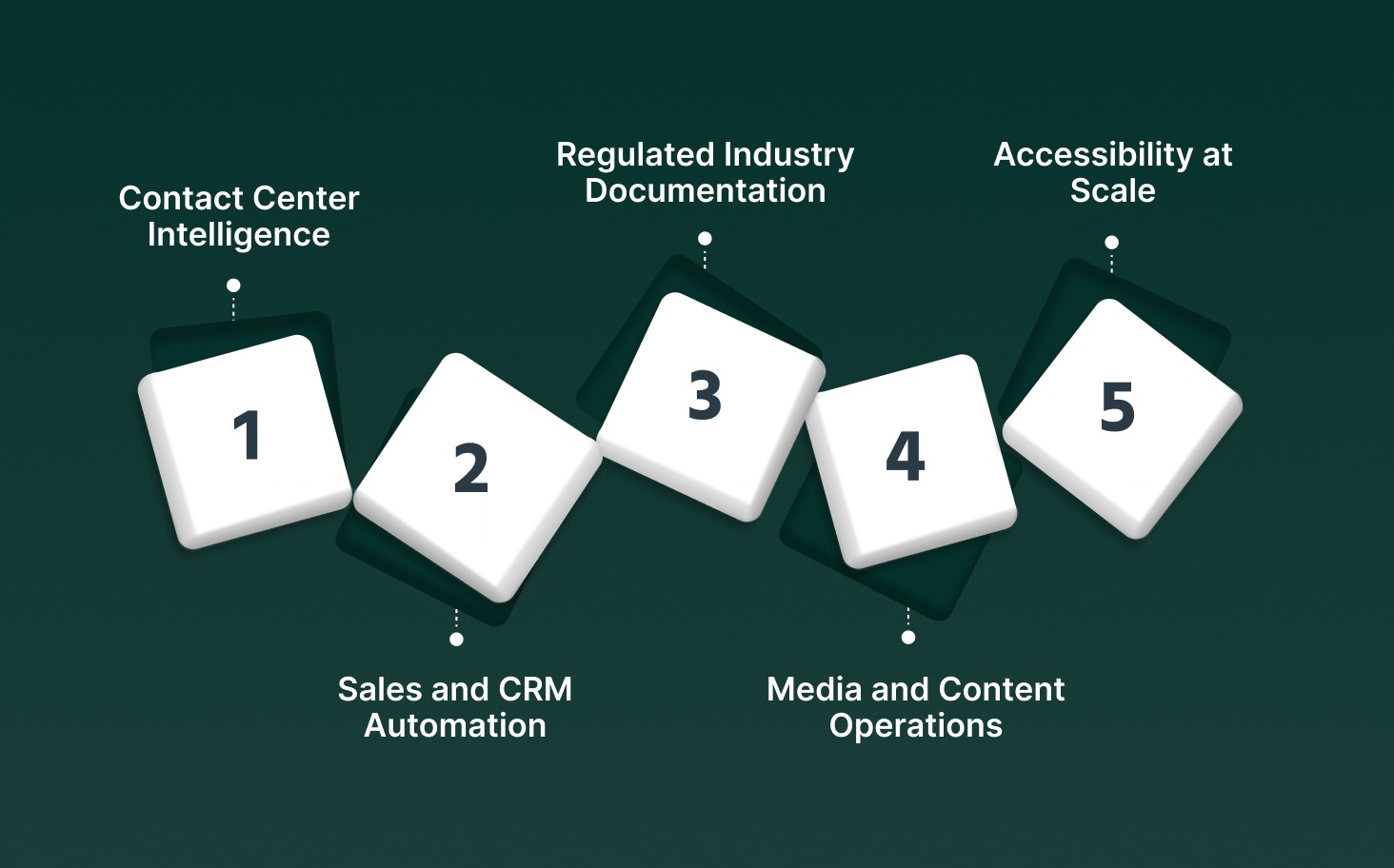

Enterprise deployments of speech-to-text technology typically focus on the following production-grade scenarios:

Contact Center Intelligence: Live call transcription feeds agent-assist systems, sentiment scoring, and post-call analytics without waiting for batch processing or manual review.

Sales and CRM Automation: Voice notes and customer conversations are transcribed instantly and written directly into CRM records with timestamps, speaker labels, and action-item extraction.

Regulated Industry Documentation: Domain-trained models capture medical, legal, and financial terminology accurately enough to meet audit, compliance, and record-retention requirements.

Media and Content Operations: Audio and video streams are transcribed into searchable text for captioning, moderation, localization, and downstream content analysis pipelines.

Accessibility at Scale: Real-time captions and transcripts support employees and customers with hearing, language, or learning differences during live enterprise interactions.

Across industries, speech-to-text technology acts as the connective layer that turns spoken conversations into operational data enterprises can trust, search, and act on in real time.

Accuracy, Language Support, and Domain Adaptation in Modern Speech-to-Text

Modern speech-to-text systems balance transcription accuracy, multilingual coverage, and domain adaptation to perform reliably in live, noisy, and industry-specific conversational environments.

Modern enterprise STT performance is shaped by the following tightly coupled technical dimensions:

Accuracy Measurement Frameworks: Word Error Rate, diarization error, and timestamp alignment metrics quantify transcription reliability under real call conditions, not clean lab audio.

Latency-Accuracy Tradeoffs: Streaming models sacrifice lookahead context to deliver sub-second responses, requiring architectural optimizations to avoid accuracy collapse during live conversations.

Multilingual and Accent Handling: Language identification, accent normalization, and code-switching support prevent failure when speakers mix languages or shift pronunciation mid-sentence.

Domain Vocabulary Adaptation: Fine-tuned language layers and injected vocabularies improve recognition of acronyms, numbers, and regulated terminology common in finance and healthcare.

Confidence and Error Signaling: Per-token confidence scoring allows downstream systems to flag uncertainty, trigger fallbacks, or route sensitive transcripts for verification.

In practice, speech-to-text systems only perform well when accuracy, language handling, and domain adaptation are engineered together for the specific conversational reality they operate in.
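Word Error Rate is the number of substituted, deleted, and inserted words divided by the number of words in the reference transcript. A minimal sketch of that calculation, using word-level edit distance, follows. The function name and the sample sentences are made up for illustration, and the normalization here (lowercasing and whitespace splitting) is deliberately simple; real evaluations usually also normalize punctuation and number formats.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Row-by-row Levenshtein distance over words instead of characters.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            current.append(min(
                previous[j] + 1,                      # deletion
                current[j - 1] + 1,                   # insertion
                previous[j - 1] + substitution_cost,  # match or substitution
            ))
        previous = current
    return previous[-1] / len(ref)

reference = "transfer five hundred dollars to savings"
hypothesis = "transfer nine hundred dollars savings"

print(round(word_error_rate(reference, hypothesis), 3))
```

In this example the transcript has one substitution ("five" became "nine") and one deletion ("to"), so two errors over six reference words. It also shows why WER alone is not enough: the dropped "to" is harmless, while the wrong amount changes the meaning of the request, yet both count equally.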

If you are comparing free tools before committing to production-grade systems, start with the Top 7 Free Voice-to-Text Software to Evaluate in 2026.

Speech-to-Text for Accessibility and Inclusive Communication

Speech-to-text enables inclusive communication by converting live speech into readable text streams that support participation across hearing, cognitive, physical, and language-related access needs.

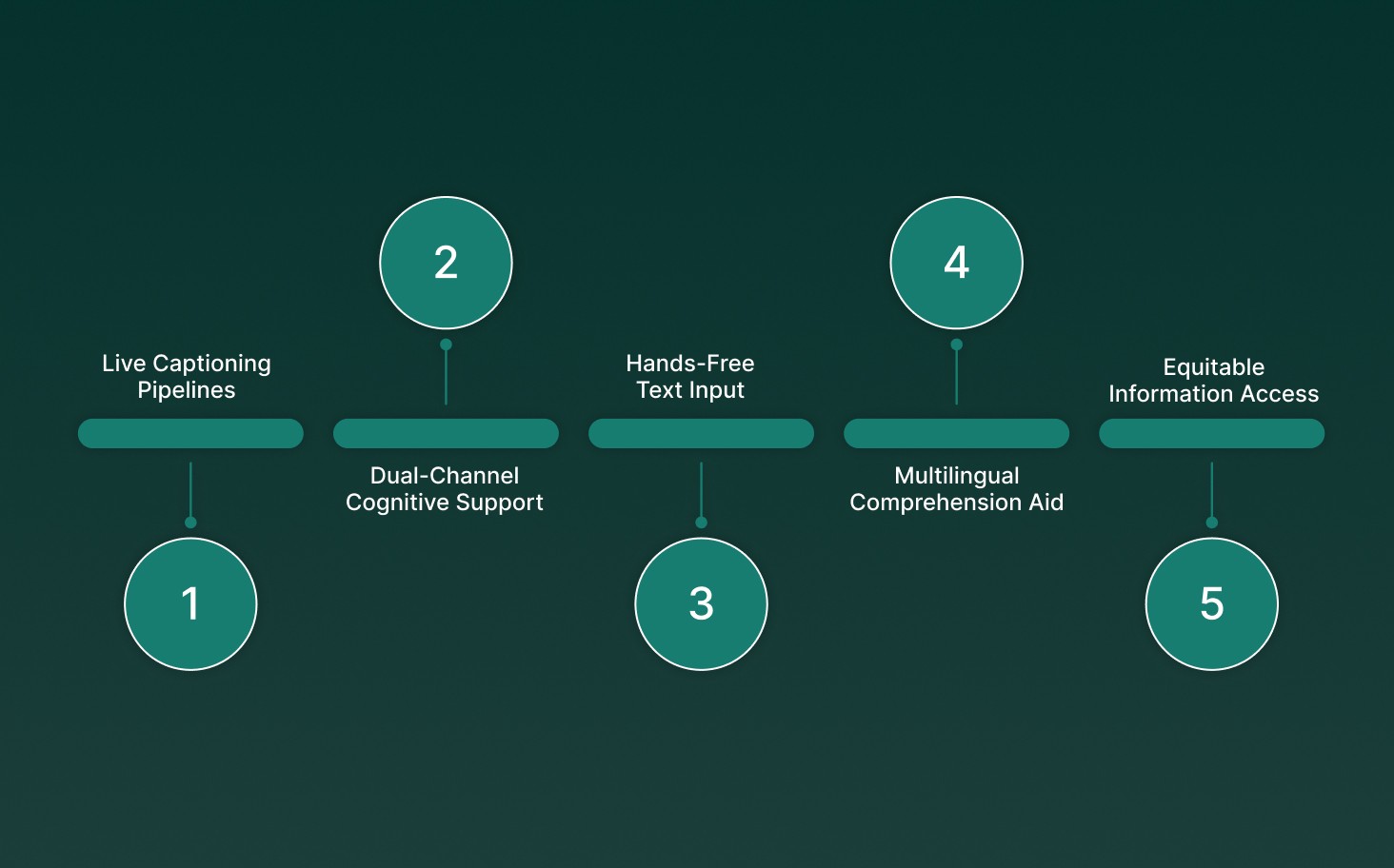

In inclusive, real-world environments, speech-to-text supports accessibility through the following technical capabilities:

Live Captioning Pipelines: Streaming STT generates low-latency captions for meetings and events, preserving timing alignment so participants can follow conversations without audio dependency.

Dual-Channel Cognitive Support: Synchronized audio and text outputs reinforce comprehension for users with dyslexia by reducing decoding load and improving short-term recall.

Hands-Free Text Input: Dictation-grade STT replaces keyboards with voice input, allowing users with mobility limitations to create, edit, and navigate text-based systems.

Multilingual Comprehension Aid: Real-time transcription lets non-native speakers follow a conversation at their own reading pace and review unfamiliar terms later through searchable transcripts.

Equitable Information Access: Automatic transcription creates durable records of spoken content, so absent participants receive the same information without relying on summaries.

When engineered for real-time accuracy and low latency, speech-to-text becomes a foundational accessibility layer that allows spoken communication to remain inclusive, searchable, and usable for everyone.
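To show what "preserving timing alignment" can look like in practice, here is a rough sketch that groups word-level timestamps into caption cues and renders them as WebVTT. The word dictionaries with `text`, `start`, and `end` keys are an assumed input shape, and the grouping thresholds (seven words per cue, a 0.8 second pause) are arbitrary illustrative values.

```python
def format_timestamp(seconds):
    """Render seconds as a WebVTT timestamp (HH:MM:SS.mmm)."""
    total_ms = round(seconds * 1000)
    hours, remainder = divmod(total_ms, 3_600_000)
    minutes, remainder = divmod(remainder, 60_000)
    secs, millis = divmod(remainder, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{millis:03d}"

def words_to_cues(words, max_words=7, max_gap=0.8):
    """Start a new cue when the current one is full or the speaker pauses."""
    cues, current = [], []
    for word in words:
        paused = bool(current) and word["start"] - current[-1]["end"] > max_gap
        if current and (len(current) >= max_words or paused):
            cues.append(current)
            current = []
        current.append(word)
    if current:
        cues.append(current)
    return cues

def to_webvtt(words):
    """Build a WebVTT document from timed words."""
    lines = ["WEBVTT", ""]
    for cue in words_to_cues(words):
        start = format_timestamp(cue[0]["start"])
        end = format_timestamp(cue[-1]["end"])
        lines.append(f"{start} --> {end}")
        lines.append(" ".join(word["text"] for word in cue))
        lines.append("")
    return "\n".join(lines)

words = [
    {"text": "Welcome", "start": 0.0, "end": 0.4},
    {"text": "everyone", "start": 0.45, "end": 0.9},
    {"text": "Let's", "start": 2.1, "end": 2.4},
    {"text": "begin", "start": 2.45, "end": 2.8},
]

print(to_webvtt(words))
```

Breaking cues on pauses rather than only on word count tends to keep each caption aligned with a natural phrase, which is easier to read than captions that split mid-thought.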

If you are evaluating voice agents that can operate reliably across regions and languages, take a closer look at Top 10 AI Voice Agents with Multilingual Capabilities 2025.

Speech to Text APIs and System Integration

Speech-to-text APIs embed real-time transcription into production systems, allowing applications to ingest live audio, extract structured language data, and trigger downstream actions programmatically.

Enterprise speech-to-text integrations typically rely on the following system-level components:

Streaming API Interfaces: WebSocket-based STT endpoints accept continuous audio frames and emit partial transcripts, allowing live agent assist, interruption handling, and conversational state updates.

Batch Transcription Pipelines: REST-based APIs process stored audio asynchronously, returning timestamped transcripts optimized for analytics, compliance review, and post-call processing.

SDK and Runtime Support: Language-specific SDKs manage authentication, audio chunking, retries, and backpressure control inside production services.

Structured Output Schemas: JSON responses include word-level timing, speaker labels, and confidence scores for direct ingestion into analytics, CRMs, or automation engines.

Workflow Orchestration Hooks: Transcribed text triggers downstream logic such as ticket creation, intent routing, summarization, or database updates without manual intervention.

When speech-to-text APIs are designed as infrastructure rather than plugins, they integrate cleanly into enterprise systems and support reliable, real-time voice-driven workflows at scale.
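The sketch below illustrates how a structured response might feed a workflow hook. The JSON shape is hypothetical: field names such as `words`, `speaker`, and `confidence` are assumptions for the example and will differ between providers, as will the 0.6 review threshold and the action names.

```python
import json

# Hypothetical response payload; real schemas vary by provider.
RESPONSE = """
{
  "transcript": "account ending 4419",
  "words": [
    {"text": "account", "start": 0.00, "end": 0.38, "speaker": 0, "confidence": 0.95},
    {"text": "ending",  "start": 0.41, "end": 0.72, "speaker": 0, "confidence": 0.91},
    {"text": "4419",    "start": 0.80, "end": 1.45, "speaker": 0, "confidence": 0.42}
  ]
}
"""

def low_confidence_words(payload, threshold=0.6):
    """Return the words the recognizer was least sure about."""
    return [word for word in payload["words"] if word["confidence"] < threshold]

def route(payload, threshold=0.6):
    """Decide whether a transcript can flow straight into automation."""
    flagged = low_confidence_words(payload, threshold)
    if flagged:
        return {"action": "human_review",
                "flagged": [word["text"] for word in flagged]}
    return {"action": "auto_process", "flagged": []}

payload = json.loads(RESPONSE)
decision = route(payload)
print(decision)
```

Here the account digits carry the lowest confidence, so the transcript is routed for verification instead of being written directly into a downstream system.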

Limitations and Challenges of Speech-to-Text

Speech-to-text systems face technical and operational constraints that affect reliability in real-world environments, especially when audio quality, language complexity, and latency requirements collide.

| Challenge Area | What Breaks in Practice | Why It Matters in Production |
| --- | --- | --- |
| Noisy and Low-Bandwidth Audio | Background noise, call compression, and 8–16 kHz telephony audio distort phonemes and reduce model confidence. | Transcription errors increase sharply in contact centers and field recordings where clean audio is unrealistic. |
| Overlapping and Quick Speech | Simultaneous speakers and fast turn-taking confuse token alignment and diarization boundaries. | Multi-speaker calls lose attribution accuracy, breaking analytics, compliance logs, and agent-assist workflows. |
| Accent and Dialect Variability | Regional pronunciation and informal speech diverge from dominant training distributions. | Models skew toward high-resource accents, causing uneven accuracy across global user bases. |
| Domain-Specific Language | Acronyms, numbers, and jargon fall outside generic language models. | Regulated industries require fine-tuning or vocab injection to avoid critical transcription errors. |
| Latency vs Accuracy Trade-Offs | Streaming models limit context to reduce delay, increasing partial or corrected outputs. | Conversational systems must balance real-time responsiveness with stable, usable transcripts. |
| Operational Overhead | Open models require hosting, tuning, and monitoring; APIs add cost and data constraints. | Teams must choose between control, scalability, compliance posture, and total cost of ownership. |

Speech-to-text works best when its limitations are anticipated at design time, with architecture, deployment, and domain adaptation chosen to match real operating conditions.
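As a simplified picture of vocabulary injection, the sketch below rescores a recognizer's candidate transcripts by adding a bonus for each boosted domain term. This is a stand-in technique: production systems more commonly bias the decoder itself during recognition rather than rescoring afterward. The function name, the scores, the boost value, and the example sentences are all invented for illustration.

```python
def pick_with_boost(nbest, boosted_terms, boost=2.0):
    """Choose the best candidate after rewarding boosted domain terms.

    nbest: list of (text, score) pairs, where a higher score is better.
    """
    terms = {term.lower() for term in boosted_terms}

    def boosted_score(candidate):
        text, score = candidate
        hits = sum(1 for token in text.lower().split() if token in terms)
        return score + boost * hits

    return max(nbest, key=boosted_score)[0]

# Invented candidates: the generic model slightly prefers the wrong spelling.
nbest = [
    ("the patient takes met foreman daily", -3.2),
    ("the patient takes metformin daily", -3.9),
]

print(pick_with_boost(nbest, boosted_terms=[]))
print(pick_with_boost(nbest, boosted_terms=["metformin"]))
```

Without any boosted terms the generic preference wins; with the drug name boosted, the domain-correct candidate overtakes it. Setting the boost too high has the opposite risk of forcing domain terms into audio where they were never spoken.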

If you are building voice workflows from the ground up and want full control over STT pipelines, start with Build Voice AI in Python: Complete Speech-to-Text Developer Guide (2026).

Latest Advances in Speech Recognition Software

Modern speech recognition software has progressed from transcription engines into low-latency, streaming language systems built on specialized neural architectures optimized for real-world conversational audio.

Recent technical advances shaping production-grade speech recognition systems include the following core innovations:

Neural Streaming Architectures: Transducer and chunk-based transformer models decode audio incrementally, maintaining context across frames while avoiding full-utterance buffering delays.

Partial Hypothesis Stabilization: Confidence-weighted token locking reduces transcript churn in live streams, allowing downstream systems to act on early results without constant rewrites.

Advanced Acoustic Frontends: Learned spectral encoders replace handcrafted MFCC pipelines, improving robustness to telephony compression, microphone variance, and background noise.

Joint ASR–NLP Coupling: Speech decoders integrate lightweight language reasoning layers to disambiguate homophones, numbers, and entities during decoding rather than post-processing.

Adaptive Runtime Optimization: Dynamic beam sizing and compute throttling adjust inference cost in real time based on audio complexity and latency targets.

These advances allow modern speech recognition software to operate as a real-time, context-aware system that supports conversational AI, analytics, and automation without sacrificing speed or reliability.
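Partial hypothesis stabilization can be approximated with a simple rule: only commit the tokens that the last few partial transcripts agree on, and never rewrite a token once it is committed. The sketch below uses agreement across consecutive partials as a stand-in for the confidence-weighted locking described above; the class name, the `required_agreement` parameter, and the sample partials are assumptions for illustration.

```python
def common_prefix(sequences):
    """Longest run of leading tokens shared by every sequence."""
    prefix = []
    for tokens in zip(*sequences):
        if any(token != tokens[0] for token in tokens):
            break
        prefix.append(tokens[0])
    return prefix

class PrefixStabilizer:
    """Commit tokens once consecutive partial hypotheses agree on them."""

    def __init__(self, required_agreement=2):
        self.required = required_agreement
        self.history = []
        self.committed = []

    def update(self, partial_tokens):
        self.history.append(list(partial_tokens))
        self.history = self.history[-self.required:]
        if len(self.history) == self.required:
            stable = common_prefix(self.history)
            # Only extend the locked prefix; never rewrite what is committed.
            if stable[:len(self.committed)] == self.committed:
                self.committed = stable
        return list(self.committed)

partials = [
    ["i"],
    ["i", "want"],
    ["i", "want", "two"],
    ["i", "want", "to", "cancel"],
    ["i", "want", "to", "cancel", "my"],
]

stabilizer = PrefixStabilizer(required_agreement=2)
committed_over_time = [stabilizer.update(partial) for partial in partials]
for committed in committed_over_time:
    print(committed)
```

In this run the unstable token "two" is never committed, because the next partial revises it to "to" before two consecutive hypotheses agree. Raising `required_agreement` reduces churn further but delays when downstream systems can act.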

If you are bringing real-time voice interactions into mobile products, this hands-on guide shows what it takes when building an AI Voice Chatbot with React Native.

Choosing the Right Speech-to-Text Technology

Choosing speech-to-text technology means aligning accuracy, latency, deployment model, and domain requirements with how voice is actually used inside production systems.

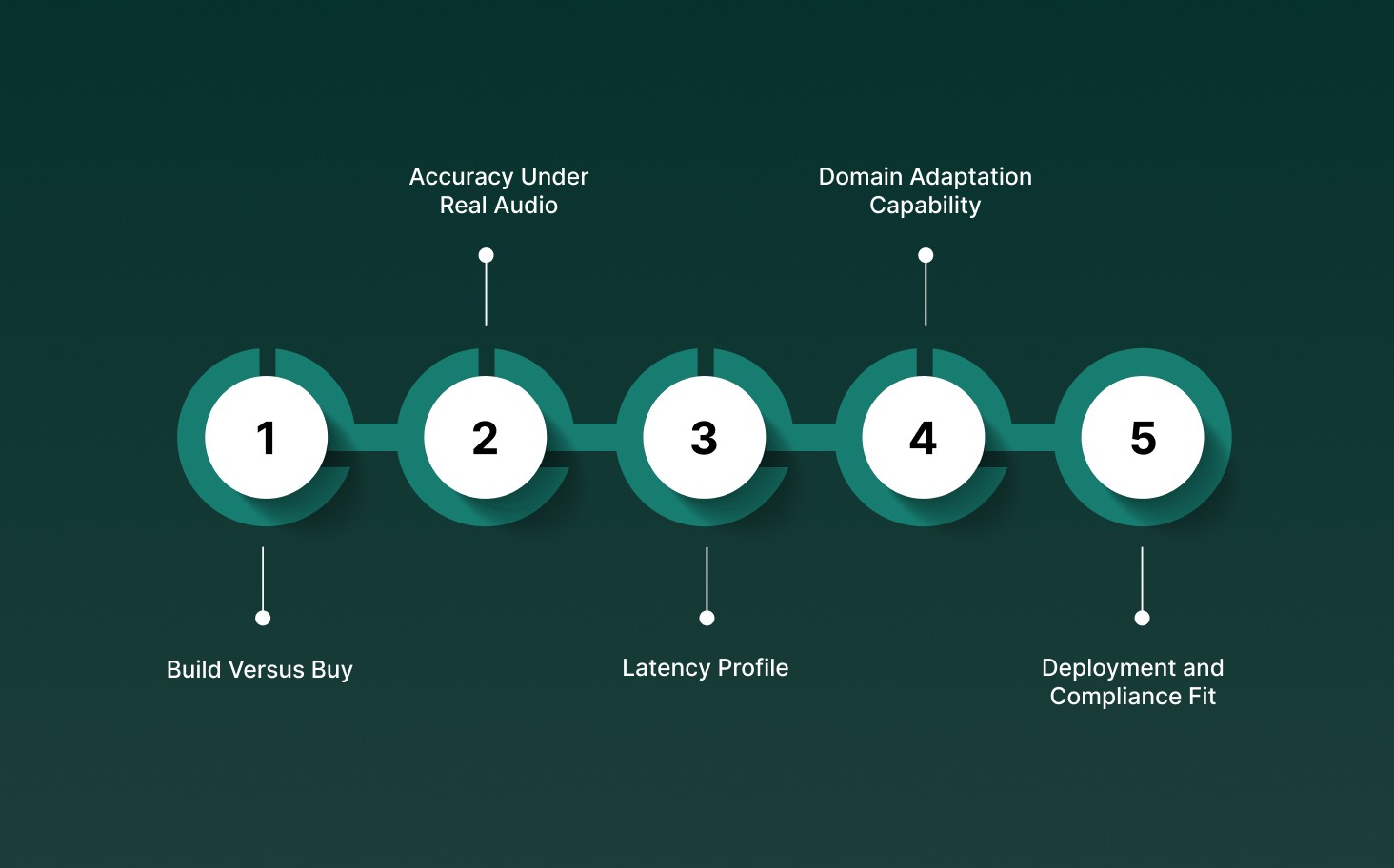

When evaluating speech-to-text options, teams typically assess the following technical decision factors:

Build Versus Buy: Open-source models offer architectural control but require owning inference optimization, scaling, and monitoring, while managed APIs reduce operational load at the cost of customization.

Accuracy Under Real Audio: Word Error Rate on clean benchmarks matters less than performance on telephony audio, overlapping speech, accents, and domain-specific vocabulary.

Latency Profile: Streaming systems must meet sub-300ms response budgets for live conversations, while batch systems optimize for depth, context, and post-call analysis.

Domain Adaptation Capability: Fine-tuning, vocabulary injection, and numeric handling determine whether models survive regulated environments like finance, healthcare, and legal workflows.

Deployment and Compliance Fit: Cloud, single-tenant, or on-prem deployment choices affect data residency, compliance posture, and long-term operating cost.

The right speech-to-text technology is the one engineered for your audio conditions, latency tolerance, and domain complexity, not the one with the most features on paper.
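When comparing latency profiles, it helps to measure time-to-first-transcript on your own audio and to look at tail percentiles rather than averages. The sketch below shows one way to do that; the event format, the function names, and every latency value in it are made up for illustration and are not measurements of any product.

```python
import math
import statistics

def time_to_first_transcript_ms(audio_start, events):
    """Milliseconds from the start of audio to the first non-empty partial.

    events: list of (timestamp_seconds, text) pairs in arrival order.
    """
    for timestamp, text in events:
        if text.strip():
            return (timestamp - audio_start) * 1000.0
    return None

def percentile(values, pct):
    """Nearest-rank percentile: the smallest value covering pct% of samples."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

# A single utterance: an empty keep-alive arrives before the first real partial.
events = [(10.030, ""), (10.085, "hel"), (10.140, "hello")]
ttft = time_to_first_transcript_ms(10.0, events)

# Illustrative per-call measurements in milliseconds (invented values).
latencies_ms = [62, 71, 68, 240, 65, 70, 66, 69, 64, 310]
budget_ms = 300

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
mean = statistics.mean(latencies_ms)

print(f"first transcript after {ttft:.0f} ms")
print(f"p50={p50} ms, p95={p95} ms, mean={mean} ms, "
      f"within budget at p95: {p95 <= budget_ms}")
```

In this invented sample the median looks comfortable while the 95th percentile breaks the budget, which is the pattern that tends to surface only under real load.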

How Pulse STT Approaches Modern Speech-to-Text

Pulse STT is engineered for real-time, enterprise-grade speech recognition, combining ultra-low latency, multilingual accuracy, and production-ready intelligence for live conversational workloads.

Pulse STT’s approach to modern speech-to-text is defined by the following system-level capabilities:

Streaming-First Architecture: Audio is decoded incrementally with sub-70ms time-to-first-transcript, allowing live systems to react before speakers finish sentences.

Language-Aware Decoding: Automatic language detection and code-switch handling adjust decoding paths dynamically as speakers shift languages mid-conversation.

Accuracy Under Telephony Constraints: Models are optimized for compressed 8kHz and 16kHz audio, maintaining low word error rates in real call-center conditions.

Embedded Speech Intelligence: Speaker diarization, emotion recognition, profanity filtering, and word boosting are applied during decoding, not bolted on afterward.

Enterprise Deployment Flexibility: Pulse STT runs in cloud or fully on-prem environments, meeting strict latency, data residency, and compliance requirements.

By treating speech-to-text as real-time infrastructure rather than offline transcription, Pulse STT delivers predictable accuracy and responsiveness at enterprise scale.

Conclusion

Speech-to-text has quietly shifted from a supporting tool to operational infrastructure. When it is reliable, fast, and context-aware, teams stop reacting late and start acting in real time, with conversations turning into decisions instead of loose ends.

That difference comes down to how the system is built and deployed, not surface features. If speech is central to your workflows, the bar is consistency under load, predictable latency, and control over how language behaves in your domain.

At Smallest AI, Pulse STT is designed for exactly those production realities, from sub-70ms transcription to on-prem deployment when data cannot leave your stack. If you are evaluating speech systems for real workloads, not demos, now is the right moment to see how Pulse STT fits.

How does a speech-to-text conversion algorithm handle interruptions in live phone calls?

Is phone speech recognition fundamentally different from standard speech-to-text (STT)?

Why do some speech-to-text technologies fail on acronyms and numbers?

Can speech recognition assistive technology work reliably without perfect grammar?

What differentiates the latest speech recognition software from older STT systems?