The Practical Guide to Building Conversational AI with Chatbot Voice Recognition

Wasim Madha

Voice interactions rarely fail in obvious ways. Instead, problems appear as delayed responses, missed intent, or chatbots that slightly misunderstand what users say. These small gaps are often what prompts teams to focus on chatbot voice recognition, especially as conversational AI starts handling real-time customer conversations and automation.

For teams deploying voice-enabled chatbots, reliable voice recognition directly affects response speed, accuracy, and user trust. When spoken input triggers workflows or automated actions, even minor recognition issues can interrupt conversations and degrade the overall experience.

In this guide, we explain how chatbot voice recognition works, where real-world voice interactions break down, and what teams should prioritize to build stable, responsive voice-driven conversations.

Key Takeaways

Real-Time Voice Conversations: Chatbot voice recognition enables conversational AI to capture speech, interpret intent, and respond instantly during live interactions, rather than relying solely on text.

Multi-Layer AI Integration: Speech recognition, language understanding, dialogue management, and voice generation combine to maintain natural, continuous conversations.

Enterprise Use Cases: Voice chatbots support customer service, healthcare coordination, financial workflows, retail voice commerce, and internal enterprise automation through spoken interactions.

Future Voice Evolution: Persistent memory, emotion-aware processing, adaptive language handling, and speech-to-speech interaction will define next-generation conversational AI systems.

Production-Ready Platforms: Solutions like Pulse STT and Lightning enable scalable real-time speech processing, natural voice responses, and enterprise-grade conversational automation.

What Chatbot Voice Recognition Means in Conversational AI Systems

Chatbot voice recognition is the ability of conversational AI systems to capture spoken audio, convert it to text, interpret user intent, and respond intelligently via voice or text.

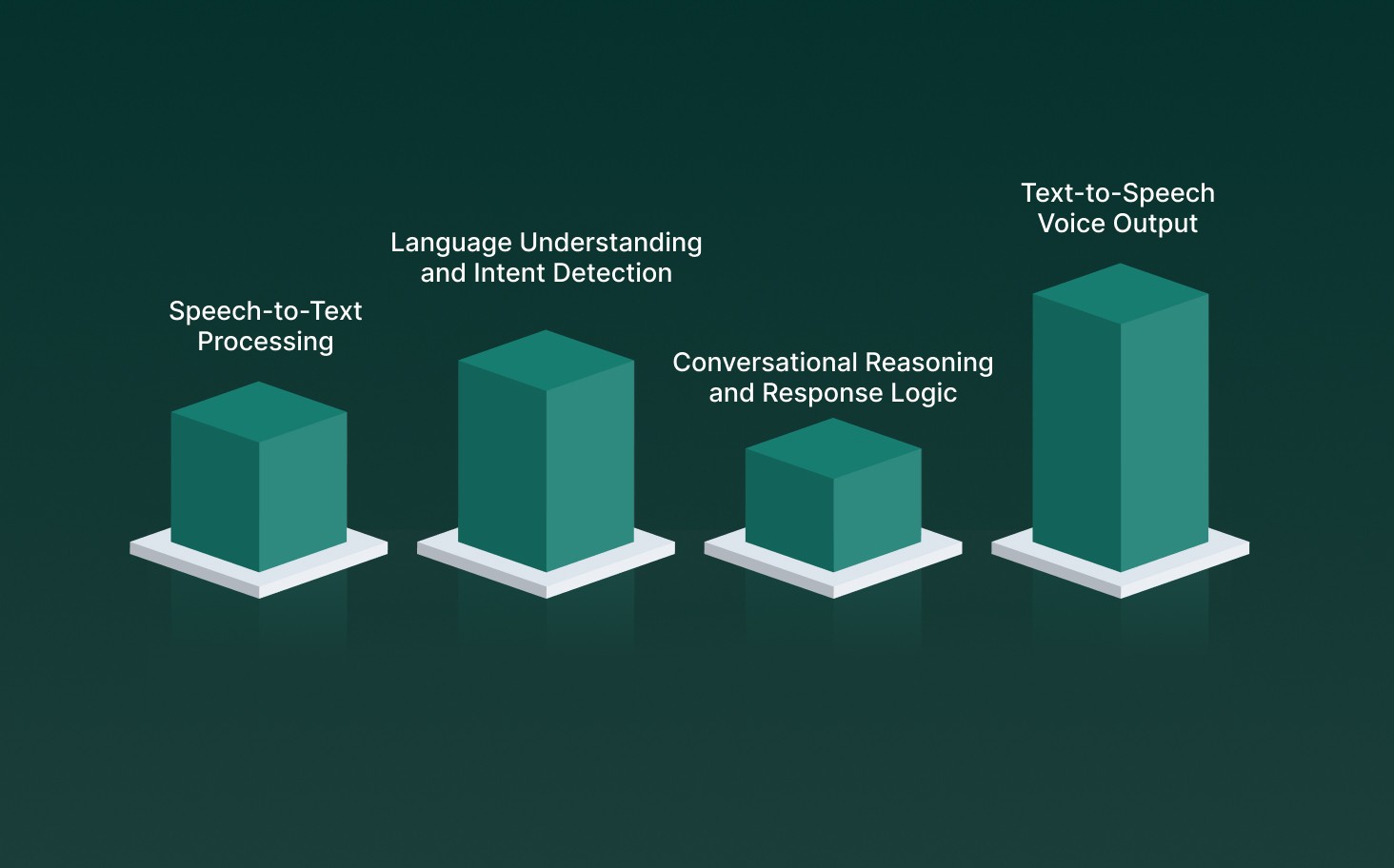

Unlike traditional chatbots that rely solely on typed input, voice-enabled chatbots allow users to interact naturally through speech. This technology combines multiple AI components, including:

Speech-to-Text Processing: Real-time Pulse STT engines convert live audio into structured text so AI systems can begin processing requests instantly during conversations.

Language Understanding and Intent Detection: Natural language processing models interpret meaning, context, and conversational cues to identify what users want without rigid commands.

Conversational Reasoning and Response Logic: AI reasoning layers analyze user input, apply workflow rules, and generate accurate responses aligned with business processes or customer journeys.

Text-to-Speech Voice Output: Advanced TTS systems like Lightning convert text into natural-sounding speech, enabling AI agents to maintain fluid, human-like dialogue.

When these components work together, conversational AI agents can handle complex, real-time voice interactions.
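
The four components above can be sketched as a single function chain. This is a minimal illustration, not the API of Pulse STT, Lightning, or any real engine: every function name here is hypothetical, and the "audio" is plain UTF-8 text so the example runs without a speech model.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    transcript: str
    intent: str
    reply_text: str
    reply_audio: bytes


def speech_to_text(audio: bytes) -> str:
    # Placeholder (hypothetical): a real system would call an STT engine.
    # Here the "audio" is simply UTF-8 text.
    return audio.decode("utf-8")


def detect_intent(transcript: str) -> str:
    # Placeholder keyword matching standing in for an NLU model.
    text = transcript.lower()
    if "book" in text or "appointment" in text:
        return "book_appointment"
    if "order" in text:
        return "order_status"
    return "unknown"


def choose_reply(intent: str) -> str:
    # Placeholder response logic; real systems apply workflow rules here.
    replies = {
        "book_appointment": "Sure, what day works for you?",
        "order_status": "Let me check that order for you.",
    }
    return replies.get(intent, "Sorry, could you rephrase that?")


def text_to_speech(text: str) -> bytes:
    # Placeholder (hypothetical): a real system would call a TTS engine.
    return text.encode("utf-8")


def handle_turn(audio: bytes) -> Turn:
    # One conversational turn: listen, understand, decide, speak.
    transcript = speech_to_text(audio)
    intent = detect_intent(transcript)
    reply_text = choose_reply(intent)
    return Turn(transcript, intent, reply_text, text_to_speech(reply_text))


turn = handle_turn(b"I'd like to book an appointment")
print(turn.intent, "->", turn.reply_text)
```

Keeping each stage behind its own function makes it possible to swap in a real engine for one stage without touching the others.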

Key Features of Chatbot Voice Recognition in Conversational AI

Instead of operating as a single tool, conversational AI systems move through structured stages that capture speech, interpret meaning, manage dialogue, and generate spoken responses. Each component plays a specific role in maintaining accuracy, context, and real-time responsiveness.

1. Automatic Speech Recognition (ASR) as the Entry Point

Automatic Speech Recognition (ASR) is the foundation of chatbot voice recognition: it converts spoken audio into structured text that AI systems can process, so voice conversations can begin without manual input. Its main capabilities are:

ASR converts spoken user input into accurate text transcripts in real time, allowing chatbots to begin processing requests immediately.

Modern ASR systems support multiple languages and accents, ensuring accessibility across diverse global audiences.

Neural acoustic models analyze sound patterns and speech signals to improve recognition accuracy in live conversations.

Real-time speech recognition enables faster customer support workflows and automated conversational responses.

Continuous model training allows ASR engines to adapt to changing speech patterns and industry-specific terminology.

2. Integration with Natural Language Understanding (NLU)

Once speech is converted into text, Natural Language Understanding (NLU) interprets the meaning behind the words to determine user intent and contextual relevance. The following functions help conversational AI interpret user intent and extract meaningful context from speech:

NLU analyzes sentence structure and context to accurately determine user intent during conversations.

Entity recognition allows conversational AI to extract names, dates, locations, and other key information from speech.

Context-aware processing enables AI agents to understand follow-up questions and conversational continuity.

Advanced language models help chatbots effectively manage complex or multi-step user queries.

Intent classification improves response precision and reduces misunderstandings during automated interactions.
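
To make intent classification and entity recognition concrete, here is a deliberately simple rule-based sketch. Production NLU typically relies on trained models; the keyword lists, intent names, and regular expressions below are assumptions chosen only for illustration.

```python
import re

# Hypothetical intents and trigger keywords (illustrative only).
INTENT_KEYWORDS = {
    "book_appointment": ("book", "schedule"),
    "cancel_appointment": ("cancel",),
    "check_balance": ("balance",),
}

DAY_PATTERN = re.compile(
    r"\b(monday|tuesday|wednesday|thursday|friday|saturday|sunday|today|tomorrow)\b"
)
TIME_PATTERN = re.compile(r"\b(\d{1,2})(?::\d{2})?\s*(am|pm)\b")


def classify_intent(text: str) -> str:
    lowered = text.lower()
    # Score each intent by how many of its keywords appear in the text.
    scores = {
        intent: sum(word in lowered for word in words)
        for intent, words in INTENT_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def extract_entities(text: str) -> dict:
    lowered = text.lower()
    entities = {}
    day = DAY_PATTERN.search(lowered)
    if day:
        entities["day"] = day.group(1)
    time = TIME_PATTERN.search(lowered)
    if time:
        entities["time"] = time.group(0)
    return entities


utterance = "Can I book a visit for Tuesday at 3 pm?"
print(classify_intent(utterance), extract_entities(utterance))
```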

3. Dialogue Management for Contextual Flow

Dialogue management controls how conversations progress and ensures that chatbot responses remain relevant throughout ongoing interactions. It acts as the decision-making layer that determines the next conversational step. To keep conversations structured and relevant, dialogue systems rely on the following operational mechanisms:

Dialogue systems track conversation history so that AI agents can respond based on previous user inputs.

Context management allows chatbots to maintain logical conversation flow across multiple exchanges.

Decision engines automatically evaluate user intent and select the most appropriate response path.

Workflow integration enables AI agents to trigger actions such as bookings, updates, or data retrieval.

Adaptive conversational strategies help voice agents handle unexpected questions without breaking flow.
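
A small slot-filling sketch shows how history tracking and next-step decisions fit together. The `DialogueState` class and the required slots are hypothetical; real dialogue managers carry far more state, but the core loop of "remember, check what is missing, decide" looks similar.

```python
from dataclasses import dataclass, field

# Hypothetical slots a booking needs before it can be confirmed.
REQUIRED_SLOTS = ("day", "time")


@dataclass
class DialogueState:
    history: list = field(default_factory=list)
    slots: dict = field(default_factory=dict)

    def update(self, user_text: str, entities: dict) -> str:
        # Track conversation history and merge newly extracted details.
        self.history.append(user_text)
        self.slots.update(entities)
        # Decide the next step: ask for what is missing, or confirm.
        missing = [slot for slot in REQUIRED_SLOTS if slot not in self.slots]
        if missing:
            return f"What {missing[0]} would you like?"
        return f"Booking confirmed for {self.slots['day']} at {self.slots['time']}."


state = DialogueState()
print(state.update("I need an appointment on friday", {"day": "friday"}))
print(state.update("10 am works", {"time": "10 am"}))
```

Because the state persists between calls, the second turn can be answered with only a time and the system still knows which day was meant.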

4. Natural Language Generation (NLG) and Text-to-Speech (TTS)

Natural Language Generation and Text-to-Speech technologies enable conversational AI systems to produce clear responses and deliver them through realistic synthetic voices.

Together, they complete the voice interaction cycle by turning AI decisions into spoken communication through these capabilities:

NLG converts structured AI responses into conversational language that sounds natural to users.

TTS systems generate realistic voice outputs with human-like pronunciation and pacing.

Neural voice synthesis enables businesses to maintain a consistent brand voice across interactions.

Emotion and tone adjustments make AI responses sound more engaging and contextually appropriate.

Real-time speech generation enables instant voice replies in live customer conversations.

5. End-to-End Workflow in Voice Agents

Voice agents combine multiple AI technologies into a unified conversational pipeline that handles listening, understanding, responding, and speaking automatically. The pipeline works through several coordinated stages:

Voice agents capture spoken input and convert it into text using real-time speech recognition models.

Language processing layers analyze intent and determine the appropriate conversational response.

Dialogue management systems coordinate responses and maintain conversation continuity.

Response generation modules create structured replies that are converted into natural speech output.

Low-latency processing ensures that users experience minimal delays during live voice interactions.

6. Omnichannel and Multi-Platform Support

Modern conversational AI solutions extend beyond a single communication channel, enabling voice interactions across calls, mobile apps, websites, and connected devices. This keeps the user experience consistent across channels and depends on the following infrastructure and integration capabilities:

Cloud-based AI infrastructure allows chatbots to operate consistently across multiple platforms.

Integration with telephony systems enables automated voice interactions in call centers.

Cross-device compatibility ensures seamless transitions between voice-enabled applications.

Unified communication workflows help businesses maintain consistent messaging across channels.

Centralized analytics provide insights into voice interactions across different platforms.

7. Machine Learning for Continuous Improvement

Machine learning models allow chatbot voice recognition systems to improve accuracy and efficiency over time through ongoing training and performance optimization, helping AI agents adapt to evolving user behavior. This refinement happens through the following processes:

Training datasets enable speech recognition systems to refine accuracy based on real-world usage patterns.

Feedback loops help conversational AI systems learn from errors and improve future responses.

Predictive analytics support proactive conversational strategies and smarter recommendations.

Continuous model updates allow systems to adapt to new languages, slang, and conversational styles.

Automated performance monitoring ensures long-term scalability and reliability of voice agents.
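
Monitoring and feedback loops need a measurable signal. One widely used metric for speech recognition, not named in this guide but standard in the field, is word error rate (WER): the word-level edit distance between a reference transcript and the recognizer's output, divided by the number of reference words. A minimal sketch:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] = edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("book a table for two", "book the table for you"))
```

Tracking this value over time on a fixed set of human-checked recordings is one way to tell whether a model update actually improved recognition.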

Types of Chatbot Voice Recognition in Conversational AI

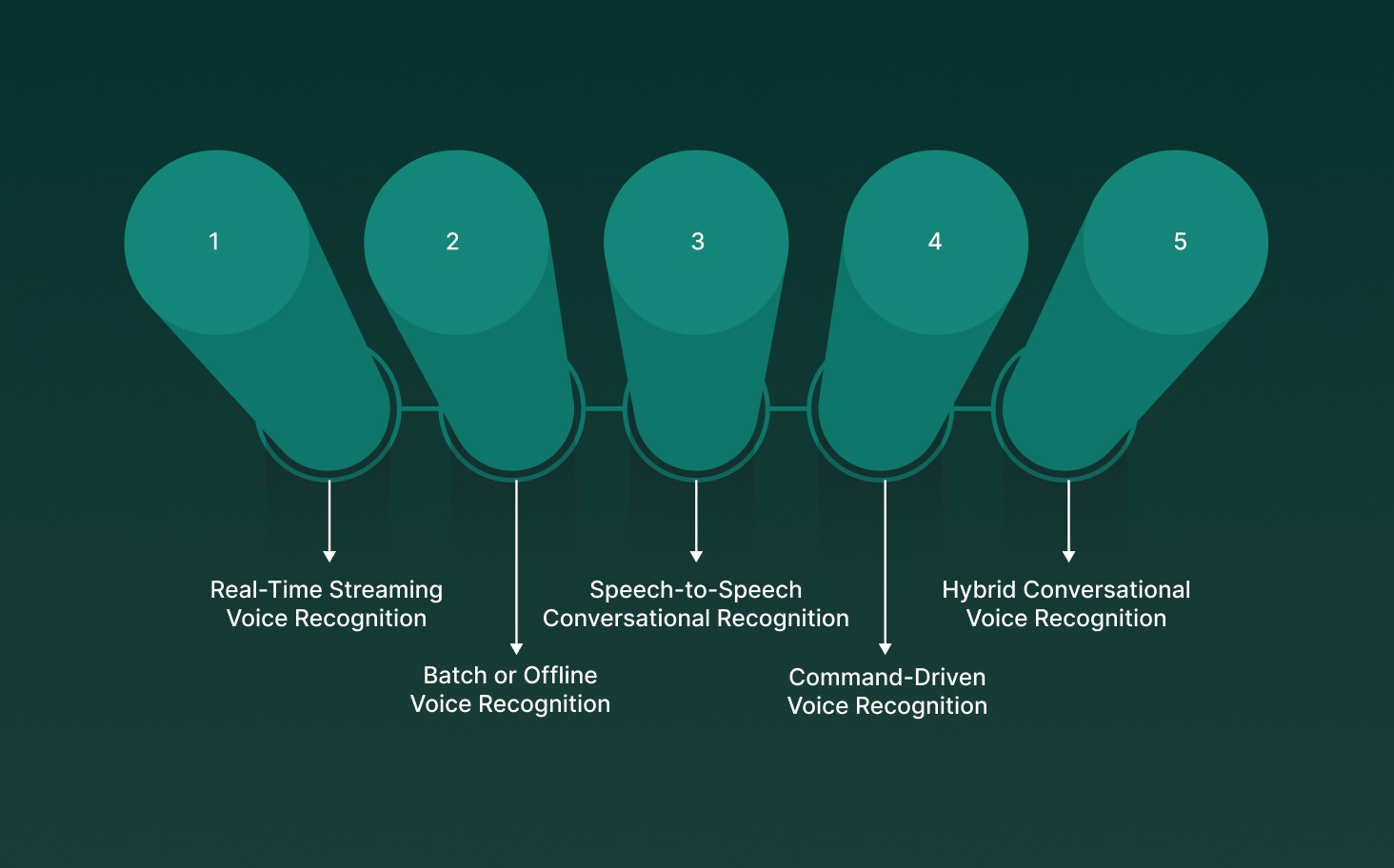

Each recognition type prioritizes specific operational outcomes such as real-time responsiveness, transcription accuracy, conversational depth, or task execution efficiency.

Rather than using a single architecture, modern conversational AI deployments combine multiple recognition types to maintain performance across live conversations, analytics workflows, and structured voice commands. Selecting the right recognition type ensures that chatbot interactions remain responsive, context-aware, and aligned with real-world communication patterns.

Below are the primary types of chatbot voice recognition systems commonly used in conversational AI environments.

1. Real-Time Streaming Voice Recognition

Real-time streaming voice recognition processes spoken audio continuously as users speak, allowing conversational AI chatbots to interpret speech instantly and generate dynamic responses during ongoing conversations. This recognition type forms the foundation of live voice assistants, AI call agents, and interactive customer support systems.

Operational characteristics defining streaming recognition include:

Continuous audio processing that generates partial transcripts while speech is still in progress

Immediate conversational feedback loops that reduce response delays during live interactions

Context-aware dialogue tracking that allows chatbots to manage multi-turn conversations smoothly

What it means for conversational AI: Streaming recognition enables natural, human-like dialogue where users can speak freely without waiting for long pauses before receiving responses.
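
The idea of partial transcripts can be shown with a generator. This sketch assumes each incoming chunk is an already-recognized word, which stands in for the audio decoding a real recognizer would perform; the function name is hypothetical.

```python
from typing import Iterable, Iterator, Tuple


def stream_transcripts(chunks: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield ("partial", text) after each chunk, then ("final", text)."""
    words = []
    for chunk in chunks:
        # A real recognizer would decode audio here; the sketch treats
        # each chunk as an already-recognized word.
        words.append(chunk)
        yield "partial", " ".join(words)
    yield "final", " ".join(words)


for kind, text in stream_transcripts(["track", "my", "order"]):
    print(f"{kind:7} {text}")
```

The caller sees text grow while the user is still speaking, which is what lets downstream logic start work before the utterance ends.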

2. Batch or Offline Voice Recognition

Batch voice recognition processes recorded audio after conversations conclude, focusing on accurate transcription and large-scale speech analysis rather than live conversational interaction. This recognition type supports workflows where conversational review, compliance checks, or performance analytics take priority.

Technical behaviors shaping batch recognition systems include:

Full-audio processing that captures entire conversations before generating transcripts

High transcription precision supported by complete linguistic context

Integration with analytics pipelines that evaluate conversation trends and agent performance

What it means for conversational AI: Batch recognition strengthens post-call insights and operational reporting while complementing real-time chatbot interactions.

3. Speech-to-Speech Conversational Recognition

Speech-to-speech recognition allows conversational AI chatbots to listen, interpret spoken language, and respond directly in voice without requiring visible text-based processing layers. This recognition type supports immersive conversational experiences where dialogue flows naturally between users and AI agents.

Technical features supporting speech-to-speech recognition include:

Direct audio understanding combined with conversational reasoning capabilities

Continuous context retention across long voice interactions

Smooth response generation that maintains conversational rhythm and tone

What it means for conversational AI: Speech-to-speech recognition enables advanced AI agents capable of sustaining fluid, voice-first interactions that feel closer to human conversation.

4. Command-Driven Voice Recognition

Command-driven voice recognition focuses on structured voice inputs designed to trigger predefined actions or workflows rather than open-ended dialogue. This recognition type is commonly used in automation-driven chatbot environments where interactions follow predictable conversational paths.

Operational traits defining command-driven recognition include:

Intent-based speech processing optimized for short instructions

Lightweight recognition pipelines designed for fast execution

Reliable action triggering for task automation and transactional workflows

What it means for conversational AI: Command-driven recognition ensures consistent performance in environments where chatbots execute clear instructions such as bookings, device control, or menu navigation.
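
A command-driven design often reduces to a lookup table from recognized phrases to handlers. The phrases and handler functions below are invented for illustration.

```python
from typing import Callable, Dict


def turn_on_lights() -> str:
    return "Lights on."


def open_main_menu() -> str:
    return "Opening the main menu."


# Hypothetical command phrases mapped to the actions they trigger.
COMMANDS: Dict[str, Callable[[], str]] = {
    "turn on the lights": turn_on_lights,
    "main menu": open_main_menu,
}


def run_command(transcript: str) -> str:
    # Normalize so casing and trailing punctuation do not block a match.
    phrase = transcript.lower().strip(" .!?")
    handler = COMMANDS.get(phrase)
    if handler is None:
        return "Sorry, I did not recognize that command."
    return handler()


print(run_command("Turn on the lights."))
```

Exact matching keeps the pipeline lightweight and predictable, at the cost of rejecting anything phrased differently from the registered commands.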

5. Hybrid Conversational Voice Recognition

Hybrid recognition combines multiple voice processing strategies to manage diverse conversational scenarios within a single chatbot system. By blending real-time streaming, batch analysis, and command-driven recognition, conversational AI platforms maintain flexibility across different interaction modes.

Architectural characteristics defining hybrid recognition systems include:

Adaptive switching between conversational and transactional recognition modes

Integration of live dialogue handling with background analytics processing

Scalable conversational intelligence capable of handling both complex conversations and quick commands

What it means for conversational AI: Hybrid recognition allows chatbot systems to operate efficiently across real-world voice environments where conversation patterns shift between casual dialogue and structured task execution.

The Working Process Behind Chatbot Voice Recognition Technology

Voice-enabled chatbots allow users to speak naturally while AI systems listen, interpret requests, and reply instantly. Rather than waiting for complete recordings, modern conversational AI platforms handle speech as a live stream, allowing responses to begin while the conversation is still in progress. This real-time processing keeps interactions smooth, fast, and close to human dialogue.

The core stages that make real-time chatbot voice conversations possible include:

Continuous Voice Input Processing: Chatbots capture spoken audio through apps, devices, or call systems and process small audio segments instantly so conversations start without noticeable delays.

Speech Conversion Engine: Voice signals are transformed into structured text using real-time speech processing models, giving conversational systems a format they can interpret and respond to immediately.

Intent Detection and Context Tracking: Language processing layers examine meaning, tone, and previous messages to identify user goals and maintain conversation continuity across multiple exchanges.

Conversation Control Logic: Decision-making engines analyze intent and dialogue history to determine how the chatbot should respond next, keeping interactions consistent and purposeful.

Response Creation and Voice Playback: AI systems generate replies in natural language and convert them into spoken output, allowing chatbots to communicate through realistic synthetic voices.

Audio Quality and Speech Adaptation: Signal processing components reduce background noise and adjust for accents, pronunciation differences, and varied speaking speeds to maintain reliable recognition.

Low-Latency Processing Flow: Streaming inference and adaptive computing techniques help chatbots deliver near-instant responses, ensuring conversations feel responsive and uninterrupted.

Looking at this layered workflow helps explain how chatbot voice recognition blends speech processing, conversational intelligence, and real-time response generation to support modern AI-driven voice experiences.

How Developers Integrate Chatbot Voice Recognition Into Production Systems

Developers integrate chatbot voice recognition into production by connecting live audio streams, processing conversations in real time, and linking voice inputs directly to AI-driven workflows. Integration goes beyond basic speech capture: it requires building a continuous pipeline where audio ingestion, intent processing, and voice responses work together without delays or broken conversation flow.

In production environments, modern voice chatbot systems typically include these technical layers:

Streaming Voice Input: Developers stream audio from browsers, mobile apps, or telephony systems into voice recognition engines using real-time buffers. This keeps conversations continuous without pausing application performance.

Live Transcript Events: Partial transcripts trigger application logic instantly. Chatbots can prepare responses, update user interfaces, or fetch backend data while the user is still speaking.

SDK-Based Streaming Pipelines: Node.js or Python SDKs manage secure connections, WebSocket streaming, and callbacks, helping developers maintain low latency and stable real-time performance.

Intent and Context Processing: Spoken text moves into conversational AI models that identify user goals, extract key details, and maintain context across multi-turn conversations.

Dialogue and Workflow Control: Conversation engines decide how the chatbot responds, trigger business actions such as bookings or updates, and keep interactions structured from start to finish.

Real-Time Voice Response Delivery: AI-generated replies are converted into natural speech immediately so users experience smooth, human-like conversations without noticeable delays.

Post-Processing and Analytics Pipelines: Transcripts are cleaned, structured with timestamps and speaker labels, and routed into analytics dashboards, CRMs, or monitoring tools for business insights.

Successful chatbot voice recognition integration treats voice as live infrastructure rather than an add-on feature. Well-designed pipelines keep responses fast, conversations natural, and production systems stable under real user traffic.
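
The streaming input, buffering, and transcript-event layers above can be sketched with Python's standard `asyncio` library. This is not any vendor SDK: `audio_source`, `recognizer`, and the handlers are hypothetical, and words stand in for audio chunks so the example runs on its own. It shows a bounded buffer between producer and consumer, and a partial transcript triggering application logic (a prefetch) before the final transcript arrives.

```python
import asyncio


async def audio_source(queue: asyncio.Queue) -> None:
    # Stand-in for a microphone or telephony stream. Words are used
    # instead of raw audio to keep the sketch runnable.
    for chunk in ["cancel", "my", "booking"]:
        await queue.put(chunk)
        await asyncio.sleep(0)  # yield control, as real I/O would
    await queue.put(None)  # sentinel: end of stream


async def recognizer(queue: asyncio.Queue, on_partial, on_final) -> None:
    words = []
    while True:
        chunk = await queue.get()
        if chunk is None:
            break
        words.append(chunk)
        on_partial(" ".join(words))  # fire an event per partial transcript
    on_final(" ".join(words))


def make_handlers(events: list):
    def on_partial(text: str) -> None:
        events.append(("partial", text))
        # React early: start fetching data once a keyword is heard.
        if "booking" in text and ("prefetch", "bookings") not in events:
            events.append(("prefetch", "bookings"))

    def on_final(text: str) -> None:
        events.append(("final", text))

    return on_partial, on_final


async def run_session() -> list:
    events: list = []
    queue: asyncio.Queue = asyncio.Queue(maxsize=8)  # bounded buffer
    on_partial, on_final = make_handlers(events)
    await asyncio.gather(
        audio_source(queue),
        recognizer(queue, on_partial, on_final),
    )
    return events


events = asyncio.run(run_session())
for event in events:
    print(event)
```

The bounded queue applies backpressure: if recognition falls behind, the producer waits instead of letting memory grow without limit.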

Key Challenges Enterprise Teams Face With Chatbots Using Voice Recognition

Enterprise teams often see that chatbot voice recognition problems stem from real conversational patterns, platform limitations, and workflow complexity rather than pure speech accuracy. Smooth deployment depends on spotting technical gaps that affect response speed, conversation quality, and automation stability before voice bots go live.

Common technical barriers enterprise teams experience during chatbot voice recognition implementation include:

| Challenge Area | What Happens Technically | Why It Interrupts Production Workflows |
| --- | --- | --- |
| Processing Delays Across Voice Systems | Voice conversations pass through multiple layers, including audio capture, speech processing, intent detection, reasoning engines, and response generation. | Slow processing leads to noticeable pauses that make conversations feel robotic and reduce user confidence. |
| Breakdowns In Conversation Context | Dialogue systems fail to track prior interactions when users change topics or provide incomplete responses. | Chatbots lose continuity, repeat prompts, or deliver off-topic replies, slowing task completion. |
| Complex Natural Speech Behavior | Real users use fillers, accents, interruptions, and informal phrasing, which complicate intent recognition. | Bots misunderstand the conversation flow, leading to incorrect responses or awkward conversational timing. |
| Integration Gaps Between Voice Components | Chatbot ecosystems rely on seamless coordination among speech-to-text conversion, language models, automation logic, and voice output systems. | Integration failures cause inconsistent responses, dropped conversations, or incomplete automation cycles. |
| Scaling Real-Time Voice Conversations | Simultaneous voice sessions increase demand on the transcription, processing, and response-generation infrastructure. | High traffic causes slower performance, reduced stability, and inconsistent user experiences during peak loads. |
| Domain-Specific Communication Challenges | General-purpose models struggle with technical terms, structured workflows, and industry-specific language without customization. | Errors occur in regulated industries like finance or healthcare where precise terminology is essential for automation accuracy. |

Enterprise chatbot voice recognition challenges usually result from multiple factors working together. Most deployment issues arise from the interaction between conversational complexity, voice processing layers, system design decisions, and infrastructure planning made during early implementation stages.
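
Because delays accumulate across layers, it can help to add up a per-stage latency budget early in planning. The figures below are made-up placeholders, not measurements of any system, and the 600 ms target is an assumption; the point is the arithmetic.

```python
# Hypothetical per-stage latencies in milliseconds (illustrative only).
stage_latency_ms = {
    "audio_capture": 40,
    "speech_to_text": 150,
    "intent_detection": 60,
    "reasoning": 250,
    "text_to_speech": 120,
}

BUDGET_MS = 600  # assumed target for one responsive turn

total_ms = sum(stage_latency_ms.values())
slowest = max(stage_latency_ms, key=stage_latency_ms.get)

print(f"total: {total_ms} ms (budget {BUDGET_MS} ms)")
print(f"slowest stage: {slowest}")
print("within budget" if total_ms <= BUDGET_MS else "over budget")
```

With these placeholder numbers the stages sum to 620 ms, slightly over the assumed budget, and the reasoning stage is the first place to look for savings.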

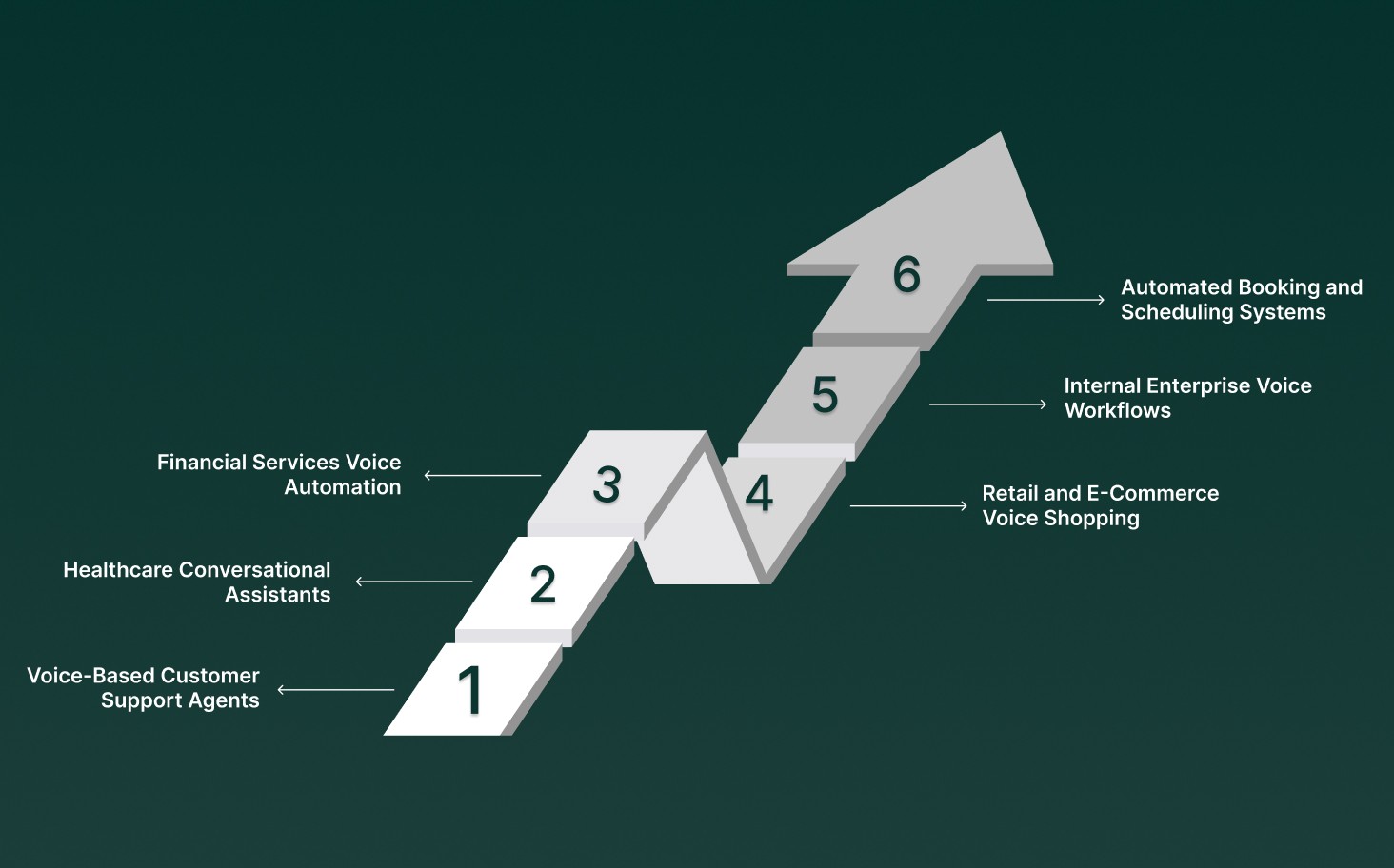

Real-World Use Cases of Chatbot Voice Recognition in Conversational AI

Chatbot voice recognition powers production conversational AI systems by converting live speech into structured conversational signals that drive automation, real-time reasoning, and intelligent response generation at scale. Modern deployments move beyond simple voice commands toward continuous dialogue intelligence, where spoken interactions trigger workflows, update systems, and guide AI agents while conversations are still in progress.

Enterprise conversational AI relies on chatbot voice recognition across several specialized operational scenarios:

Voice-Based Customer Support Agents: Real-time voice conversations allow AI agents to interpret customer intent, provide instant solutions, trigger backend workflows, and escalate complex issues during live interactions without requiring manual intervention or post-call processing.

Healthcare Conversational Assistants: Voice-enabled chatbots manage appointment bookings, medication reminders, and patient queries through natural conversations, reducing administrative pressure while maintaining structured conversational records for operational tracking.

Financial Services Voice Automation: Conversational AI systems process spoken customer requests for account updates, payment confirmations, and service inquiries while maintaining secure, structured dialogue flows that support compliance and audit requirements.

Retail and E-Commerce Voice Shopping: Customers interact with AI assistants via spoken queries to search for products, place orders, track shipments, and receive support, enabling businesses to deliver seamless voice-first purchasing experiences across digital platforms.

Internal Enterprise Voice Workflows: Employees use voice-enabled conversational bots to log IT tickets, retrieve internal information, update records, and complete routine operational tasks through natural spoken interactions without navigating multiple enterprise tools.

Automated Booking and Scheduling Systems: Conversational voice agents manage reservations, rescheduling requests, and confirmations through real-time dialogue, automatically syncing spoken requests with backend calendars and operational systems.

Enterprise teams use chatbot voice recognition as a live conversational intelligence layer, enabling AI agents to listen, reason, and respond instantly while maintaining structured, scalable voice interactions across modern conversational AI environments.

What Chatbot Voice Recognition Will Look Like Beyond 2026

As conversational AI continues to mature, chatbot voice recognition is shifting from a simple speech input feature to a real-time intelligence layer that enables AI agents to listen, reason, and respond naturally during live conversations. Future systems will focus less on isolated commands and more on sustained, context-rich dialogue that evolves across multi-turn interactions.

In emerging conversational platforms, chatbot voice recognition will rely on several next-phase innovations:

Context-Persistent Conversations: Voice agents will retain conversational memory across long sessions, enabling follow-up questions, clarifications, and multi-step workflows without restarting context.

Speech-to-Speech Interaction Pipelines: Systems will process spoken input and generate spoken responses directly, reducing delays caused by intermediate text processing and creating more fluid dialogue experiences.

Emotion-Aware Voice Processing: Advanced models will analyze tone, pacing, and vocal signals to detect urgency, frustration, or intent shifts, allowing AI agents to adjust responses in real time.

Adaptive Accent and Language Handling: Future voice chatbots will continuously adjust to regional accents, mixed languages, and conversational slang without requiring manual configuration or retraining.

Workflow-Driven Voice Intelligence: Conversational AI will connect voice recognition directly with business logic, enabling automated actions such as bookings, account updates, or compliance checks while conversations are still in progress.

Beyond 2026, chatbot voice recognition will be judged not only by transcription accuracy but by how naturally AI agents participate in conversations. Systems that maintain context, respond quickly, and behave predictably during live interactions will define the next generation of conversational AI experiences.

How Chatbot Voice Recognition Enables Real-Time Conversational AI with Pulse STT

Chatbot voice recognition allows conversational AI to listen to users, process spoken input, and respond instantly during live conversations. Using real-time speech engines like Pulse STT, AI agents convert voice into text quickly and deliver natural replies without delays.

In real conversational AI systems, chatbot voice recognition supports live interactions through these core capabilities:

Real-Time Speech Recognition: Pulse STT converts spoken language into text instantly so AI agents can start processing requests while conversations are still ongoing.

Intent and Context Analysis: AI models review transcripts to identify user intent, follow conversation flow, and produce accurate responses based on context.

Fast Voice Response Generation: Speech synthesis systems generate spoken replies quickly, enabling conversations to flow smoothly without long pauses.

Multilingual and Accent Flexibility: Voice recognition models support multiple languages and regional accents, enabling chatbots to communicate effectively with diverse users.

Continuous Conversation Tracking: Dialogue systems remember prior exchanges, enabling AI agents to manage follow-up questions and ongoing discussions naturally.

Integrated Speech Intelligence: Keyword detection, emotional signals, and contextual cues help AI respond appropriately during live conversations.

Omnichannel Voice Support: Voice chatbots operate across calls, mobile apps, websites, and connected devices to deliver consistent conversational experiences.

Scalable Real-Time Automation: AI systems manage large volumes of simultaneous voice interactions while maintaining speed and reliability.

Chatbot voice recognition with Pulse STT serves as a real-time conversational layer, combining speech recognition, language processing, and voice generation to deliver fast, natural AI-driven voice experiences.

Conclusion

Chatbot voice recognition is no longer just a feature added to conversational AI. In real deployments, it shows up in how smoothly AI agents listen, how accurately they interpret intent, and how naturally conversations continue without delays or repeated inputs. Teams that succeed treat chatbot voice recognition as a real-time system capability that must perform reliably during live calls, in the presence of background noise, and during high-volume interactions.

If you are building or scaling conversational AI where speed and natural voice interaction matter, Smallest AI is a good fit. With real-time speech recognition, intelligent reasoning, and natural voice generation, the platform helps teams deploy chatbot voice recognition that remains fast, stable, and production-ready across industries.

Explore how chatbot voice recognition can power modern conversational AI agents and help you deliver scalable, real-time voice experiences. Get in touch with us!

How does chatbot voice recognition manage interruptions or overlapping speakers during live conversations?

Can chatbot voice recognition adapt to industry-specific language without rebuilding the entire AI system?

How does latency affect chatbot voice recognition performance in real-time AI agents?

Latency directly affects how natural conversations feel. Streaming processing and incremental transcription enable AI agents to respond while users are still speaking, keeping conversations fluid and reducing awkward delays.

How do teams monitor chatbot voice recognition performance in production environments?