Complete Insights into Speech Recognition im AI Automation Systems

Abhishek Mishra

Understand speech recognition in AI systems including core components, ASR functionality, and accuracy challenges. Discover its applications today!

Every automated workflow depends on input fidelity. Yet in many enterprises, critical decisions are still buried inside live conversations, support calls, and operational discussions that systems cannot process in real time. When voice moves faster than automation layers can interpret it, context fragments, follow-ups stall, and downstream systems operate on incomplete information.

Speech recognition in AI automation systems now sits at the center of that gap. As conversational interfaces expand across customer support, compliance monitoring, sales operations, and internal tooling, speech recognition is no longer just AI transcription software.

It functions as execution infrastructure. It captures spoken language, structures it instantly, and feeds it into automation engines that trigger actions without human mediation.

This guide explores how speech recognition operates inside AI automation systems, where production systems break down, and how to evaluate enterprise-grade deployments built for real operational load.

Key Takeaways

Speech Recognition Is Automation Infrastructure: In AI automation systems, speech recognition directly powers workflow triggers, AI reasoning, compliance checks, and real-time decision routing.

System Architecture Determines Stability: Streaming-first, end-to-end neural models consistently outperform fragmented pipelines in live, interruption-heavy environments.

Accuracy Is Multidimensional: Word Error Rate alone is insufficient. Numeric handling, domain vocabulary control, diarization accuracy, and confidence scoring determine real production reliability.

Latency Is a Business Constraint: Sub-100ms time-to-first-transcript is critical when speech directly drives agent assist, automated prompts, or workflow execution.

Deployment Impacts Risk and Cost: Cloud, on-prem, or hybrid deployments shape data residency, compliance posture, operational control, and long-term scalability.

What Is Speech Recognition in AI Automation Systems?

Speech recognition in AI automation systems is the process of transforming live or recorded voice into structured, machine-actionable language that automation engines can interpret and execute in real time.

It goes beyond basic transcription. In automation environments, speech recognition functions as an input layer for decision systems. The output is not just readable text for humans. It is structured data designed to trigger workflows, update records, guide AI models, and activate downstream logic without manual intervention.

Unlike generic transcription tools built for documentation or note-taking, automation-focused speech recognition systems are engineered for operational performance. They prioritize:

Real-time streaming inference that emits partial transcripts while a speaker is still talking

Structured JSON outputs with timestamps, speaker labels, and confidence scores

Intent classification and entity enrichment for workflow routing and automation logic

Direct API integration to trigger downstream systems instantly

Production-grade latency control to maintain conversational responsiveness

The objective is not simply to convert speech into readable text. The objective is to convert speech into executable language that drives automated systems reliably under live conditions.

Also Read: How AI Chatbots Drive Customer Service: 7 Key Use Cases

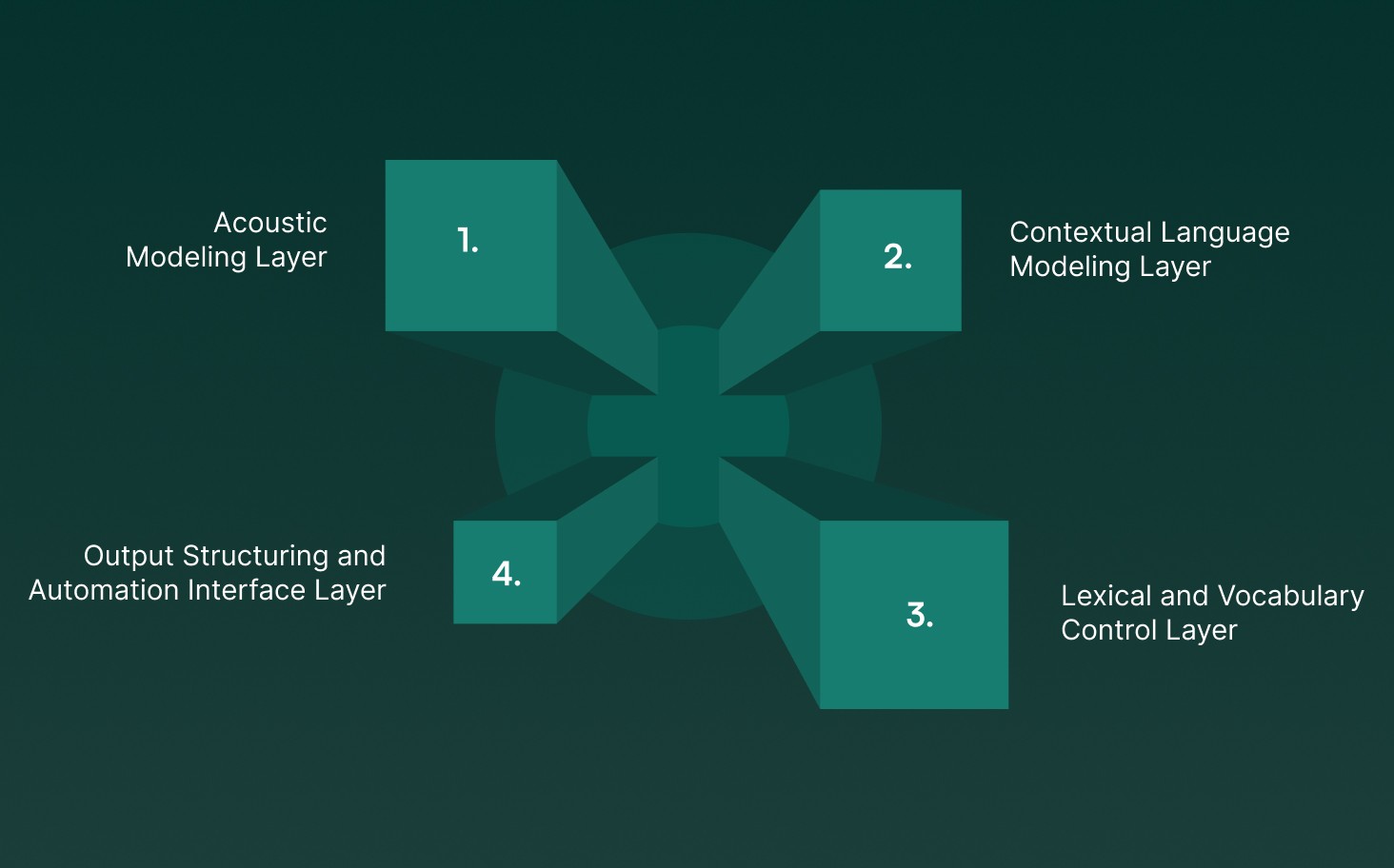

Core Components of Speech Recognition in AI Automation Systems

In production environments, these components must work together under latency constraints, noisy audio conditions, and domain-specific language requirements. When tightly integrated, they transform conversation into reliable, machine-actionable intelligence.

Below are the core components that make this possible.

1. Acoustic Modeling Layer

The acoustic layer determines how raw sound waves are interpreted at the lowest level of the system. It converts continuous audio into distinguishable speech units that can be processed further.

Neural Acoustic Models: Deep learning models analyze waveform input and map it to phonemes or subword units. These models are trained across accents, speaking speeds, and microphone conditions to maintain stability in real-world conversations.

Signal Conditioning and Feature Extraction: Before decoding begins, audio is normalized, denoised, and transformed into spectral representations optimized for phonetic discrimination. This step reduces the impact of telephony compression and environmental interference.

Streaming Frame Encoding: Modern systems generate frame-level embeddings incrementally, enabling live decoding without waiting for complete sentences. This is essential for real-time automation workflows.

The acoustic layer ensures that speech is captured accurately even before context is applied.

2. Contextual Language Modeling Layer

Once sound units are identified, the language modeling layer determines how those units form meaningful word sequences.

Probabilistic Sequence Prediction: Language models evaluate likely word combinations based on conversational context, resolving ambiguity between phonetically similar terms.

Domain-Aware Context Resolution: Enterprise systems incorporate industry vocabulary, structured phrases, and numeric patterns to prevent high-risk misinterpretation.

Long-Range Context Retention: Advanced models maintain coherence across extended utterances, preserving semantic continuity for automation engines.

This layer ensures that transcripts are not just phonetically accurate, but contextually correct.

3. Lexical and Vocabulary Control Layer

The lexical layer connects recognized sound patterns to defined vocabulary entries and pronunciation rules.

Pronunciation Lexicons: Lexicons define how words are spoken and map phonetic sequences to textual vocabulary, improving recognition of specialized terminology, uncommon names, and internal identifiers.

Dynamic Vocabulary Biasing: Enterprise systems can prioritize critical keywords such as compliance statements, product codes, or regulated terminology during decoding.

Structured Token Formatting: Dedicated logic ensures correct transcription of dates, currency values, serial numbers, and account identifiers, which are often high-impact automation inputs.

This layer reduces domain-specific error rates and increases production reliability.

4. Output Structuring and Automation Interface Layer

The final layer prepares recognized speech for direct system execution rather than human review.

Timestamped Transcript Generation: Word-level timing enables synchronization with calls, workflow triggers, and analytics pipelines.

Speaker Diarization: Multi-speaker conversations are segmented and attributed correctly, preserving compliance and analytical integrity.

Confidence Scoring and Metadata Tagging: Per-token confidence scores allow automation engines to apply fallback rules or verification steps when uncertainty exceeds defined thresholds.

This layer transforms transcripts into structured, machine-consumable events.

Speech recognition in AI automation systems operates as a coordinated stack of acoustic interpretation, contextual reasoning, vocabulary control, and structured output delivery.

When these components are engineered cohesively, spoken language becomes dependable, executable input capable of driving real-time automation under production-scale conversational load.

If you’re creating voice applications that must process and reply instantly, discover how Speech-to-Text and Text-to-Speech Technology: Making Interactions Smarter combine to enable intelligent, real-time, production-ready interactions.

Operational Flow of Speech Recognition in AI Automation Systems

Rather than producing static transcripts for later review, the system processes, interprets, and structures speech incrementally so downstream workflows can react immediately. Within live automation environments, this functionality unfolds through the following execution stages:

Voice Ingestion & Signal Stabilization: Speech enters via microphones, telephony, or digital streams, and is normalized, denoised, and filtered to remove distortion, compression artifacts, and background interference for clean input.

Acoustic Feature Transformation: Cleaned audio is segmented into millisecond frames and transformed into spectral features that capture frequency, pitch, and timing, enabling efficient speech analysis without reliance on raw waveforms.

Neural Decoding & Pattern Alignment: Features are processed by neural models aligned with phonetic and subword patterns, producing partial transcripts incrementally while the speaker continues.

Contextual Interpretation & Intent Structuring: Language models apply contextual reasoning to resolve ambiguity, integrate domain vocabulary, numeric rules, and conversational intent, ensuring outputs are stable for automation triggers.

Adaptive Learning & Confidence Control: Deep neural networks improve via retraining and domain adaptation, while runtime confidence scoring guides automation engines to apply fallbacks, verification, or escalation when uncertainty arises.

Together, these stages create a streaming execution framework. Speech is captured, interpreted, structured, and delivered to automation engines without delay.

This operational design enables AI automation systems to treat voice not as passive input, but as a live control layer capable of driving real-time decisions and workflow execution.

Traditional vs End-to-End Architectures in AI Automation Speech Recognition

In AI automation systems, the architectural choice directly affects latency, scalability, contextual understanding, and workflow reliability. Most enterprise platforms today rely on one of two primary approaches: traditional hybrid systems or end-to-end AI models.

The differences are structural, operational, and performance-driven, as shown below.

Comparison Area | Traditional Hybrid Approach | End-to-End AI Approach |

System Architecture | Separate acoustic, language, and decoding models connected in a pipeline. | Single unified neural network mapping audio directly to text or structured outputs. |

Model Coordination | Independent components must be tuned and maintained separately. | Shared representations learned jointly across the full speech recognition task. |

Latency Profile | Higher latency due to multiple processing stages and handoffs. | Lower latency with streaming-first design and fewer internal transitions. |

Error Propagation | Errors in acoustic models can cascade into language and decoding layers. | Reduced compounding errors due to integrated training across layers. |

Real-Time Conversational Fit | May struggle with interruptions, overlapping speech, and rapid turn-taking. | Better suited for live, interruption-aware conversational automation. |

Scalability and Maintenance | Complex configuration and optimization across subsystems. | Simplified scaling with centralized model optimization. |

Domain Adaptation | Requires adjustments across multiple components. | Vocabulary biasing and fine-tuning are applied within a unified architecture. |

Automation Readiness | Often optimized for transcription rather than direct workflow execution. | Designed for structured outputs that can trigger automation instantly. |

The choice between these approaches should align with latency tolerance, deployment constraints, and the complexity of real-world conversational environments.

How Is Voice Data Secured in AI Automation Systems?

Because speech recognition in AI automation systems directly influences workflows and decision engines, privacy and security must be built into the architecture rather than treated as an afterthought.

To maintain trust, compliance, and operational stability, organizations should evaluate voice automation security across multiple structured control layers outlined below.

Focus Area | Why It Matters in Voice Automation | Recommended Controls | Operational Impact |

Data Privacy Protection | Voice systems capture conversations that may include confidential or regulated information | End-to-end encryption, secure cloud or on-prem storage, role-based access controls, and defined data retention policies | Prevents data leakage and protects stored transcripts and recordings |

Access and Identity Security | Automation platforms integrate with multiple users, APIs, and internal systems | Multi-factor authentication, least-privilege access models, secure API key management | Reduces unauthorized access and secures automation pipelines |

Compliance and Governance | Enterprises must meet industry and regional regulations when handling voice data | Audit logging, consent tracking, policy enforcement mechanisms, and regulatory alignment controls | Ensures audit readiness and reduces legal or compliance risk |

Operational Monitoring | Real-time voice systems require continuous oversight to detect misuse or anomalies | Activity monitoring dashboards, anomaly detection alerts, and usage analytics | Enables early detection of suspicious activity and maintains system integrity |

Infrastructure Security | Speech recognition systems operate across cloud, hybrid, or edge environments | Secure deployment pipelines, network isolation, endpoint protection, and infrastructure hardening | Maintains stable and protected automation performance at scale |

By aligning privacy, security, compliance, and infrastructure controls, organizations can deploy AI speech automation systems that are not only intelligent and efficient but also secure, compliant, and enterprise-ready.

Also Read: Top 11 Conversational AI Platforms In 2025

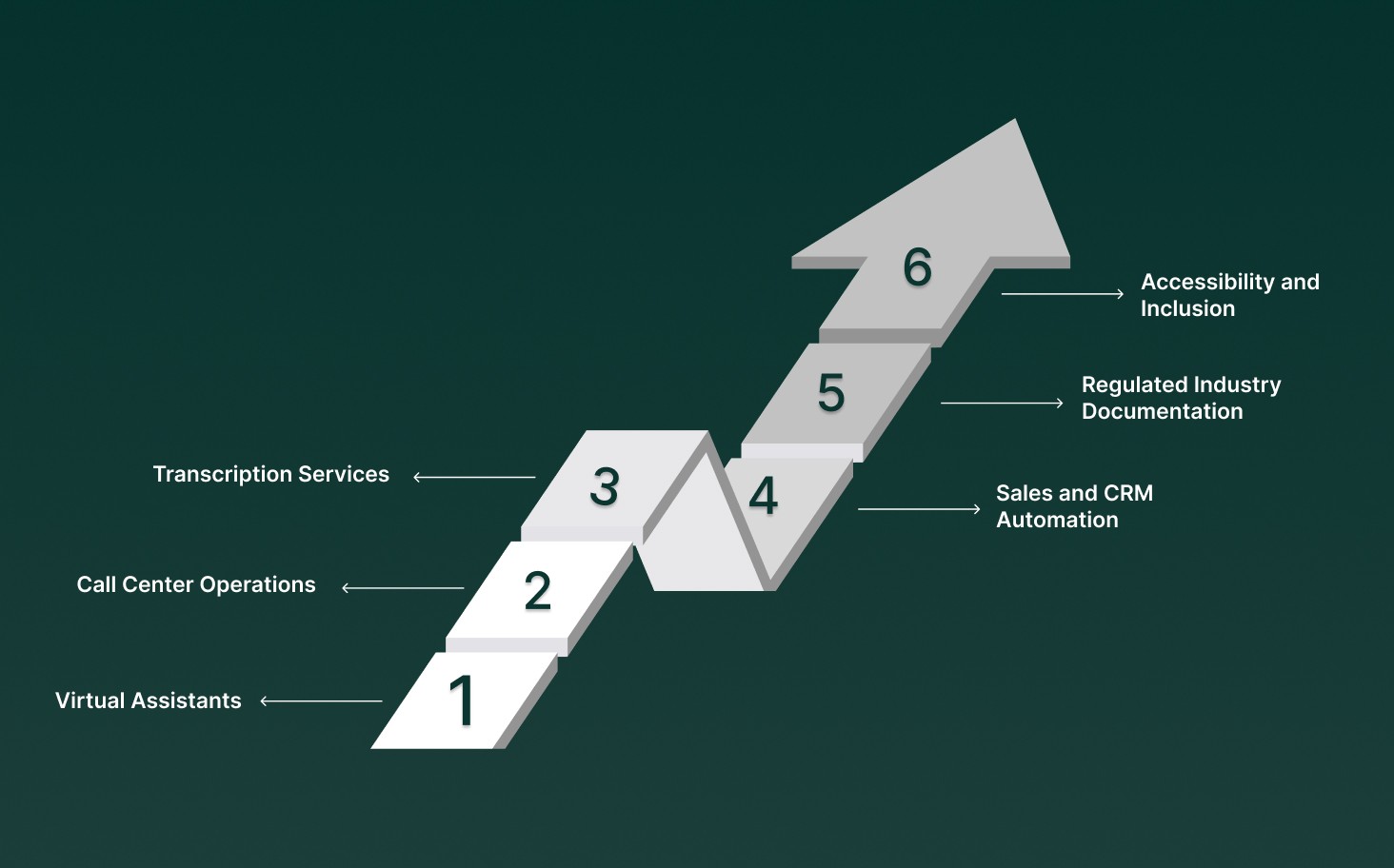

Enterprise Applications of Speech Recognition in AI Automation Systems

Speech recognition in AI automation transforms spoken language into structured, actionable data, enabling seamless execution of workflows across industries. Key applications include:

Virtual Assistants: Voice-enabled assistants leverage speech recognition and understanding to execute commands, answer queries, and automate routine tasks in both consumer and enterprise contexts.

Call Center Operations: Real-time transcription of calls allows automation systems to analyze conversations and trigger workflows such as ticket creation, escalation alerts, or CRM updates instantly.

Transcription Services: Sectors like media, healthcare, and legal use AI-powered transcription to generate accurate transcripts quickly, reducing manual effort and improving documentation efficiency.

Sales and CRM Automation: Client conversations can be automatically transcribed and logged in CRM systems, enabling real-time follow-ups, action-item extraction, and pipeline updates without manual entry.

Regulated Industry Documentation: Speech recognition ensures that domain-specific terminology such as medical, legal, or financial language is captured accurately, meeting audit, compliance, and record-keeping standards.

Accessibility and Inclusion: Real-time voice-to-text outputs provide live captions and transcripts for employees or customers with hearing or language challenges, ensuring equitable access to information during meetings, calls, and events.

Across industries, speech recognition acts as the connective layer that converts spoken interactions into operational data enterprises can trust, analyze, and act on in real time.

Also Read: The Ultimate Guide to Contact Center Automation.

How To Evaluate Accuracy in Speech Recognition for AI Automation?

Measuring speech recognition performance in AI automation systems involves assessing transcription reliability and its impact on downstream workflows. Key evaluation metrics include:

Word Error Rate (WER): WER calculates the percentage of words incorrectly transcribed. A lower WER indicates higher transcription accuracy, ensuring automation engines can act on spoken input with confidence.

Real-Time Performance Metrics: Evaluation also considers latency, partial transcript quality, and consistency during live interactions, which directly affect the responsiveness of automation workflows.

Contextual Accuracy: Beyond individual words, assessing how well the system interprets phrases, domain-specific vocabulary, and numeric data ensures that outputs are actionable and aligned with automation requirements.

Confidence Scoring: Per-word or per-utterance confidence metrics provide a quantitative measure of reliability, enabling AI systems to trigger verification, fallback, or automated actions based on transcript certainty.

By combining these evaluation measures, organizations can ensure that speech recognition systems meet production-grade accuracy requirements and drive effective AI automation.

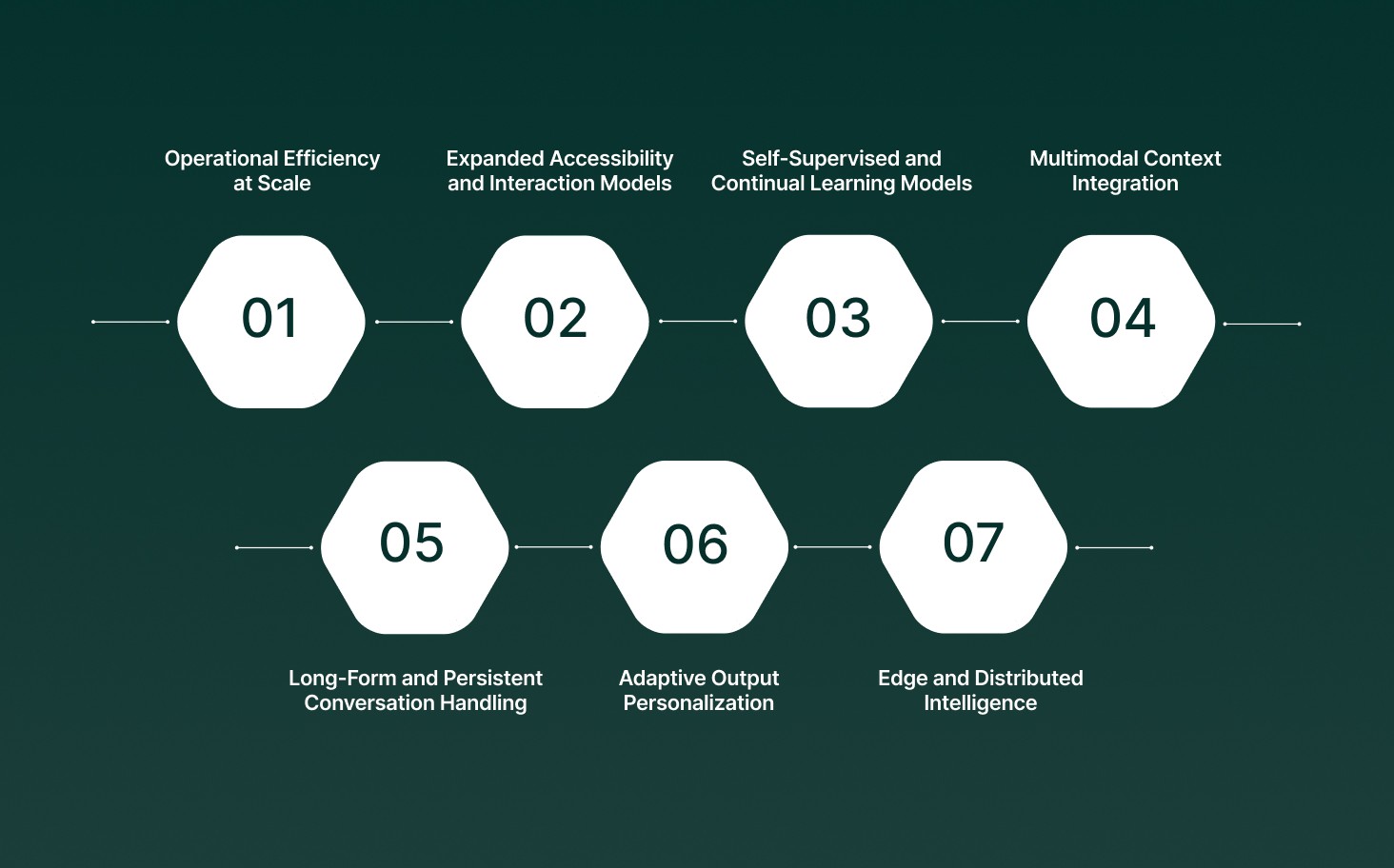

What the Future Looks Like for Speech Recognition in AI Automation Systems

Speech recognition in AI automation systems is evolving from basic transcription into an adaptive, decision-aware voice infrastructure. As automation scales across industries, innovation is focused on contextual intelligence, system stability, and deeper workflow integration under real production conditions.

Future advancements are shaping automation across several critical dimensions:

Operational Efficiency at Scale: Speech systems will improve intent precision, entity extraction, and structured output generation, enabling spoken input to trigger automated business actions instantly, reducing manual review and accelerating workflows.

Expanded Accessibility and Interaction Models: Automation platforms will enable adaptive captioning, multilingual switching, real-time translation, dynamic speech pacing, and hands-free navigation to support diverse users and environments.

Self-Supervised and Continual Learning Models: Next-generation systems will learn from large volumes of unlabeled conversational data, allowing speech models to improve accuracy, adapt to domain shifts, and handle evolving terminology without constant retraining.

Multimodal Context Integration: Speech recognition will combine with text, behavioral signals, and external system data to interpret situational intent, enabling smarter automation decisions beyond keyword detection.

Long-Form and Persistent Conversation Handling: Future architectures will support extended conversations with sliding context windows, maintaining consistent recognition and intent tracking across lengthy voice interactions.

Adaptive Output Personalization: Automation systems will adjust transcript formatting, response style, and conversational tone based on user role, workflow type, or compliance requirements.

Edge and Distributed Intelligence: Speech processing will increasingly run closer to data sources through edge deployments, improving latency stability, privacy control, and resilience in high-volume automation environments.

The systems that succeed will not only transcribe speech accurately but also interpret context reliably, execute workflows predictably, and adapt continuously as enterprise voice environments evolve.

How Pulse STT Powers Speech Recognition in AI Automation Systems?

Instead of operating only as a transcription layer, modern systems embed speech recognition directly into decision pipelines so conversations trigger workflows, alerts, and automated responses in real time.

In production environments, automation performance depends on tightly connected system capabilities that keep voice processing reliable under real workload conditions by using products such as Pulse STT from Smallest AI:

Streaming Transcription with Pulse STT: Continuous audio processing generates transcripts as speech unfolds, allowing automation engines to respond instantly without waiting for full recordings.

Context-Aware Intent Processing: Speech outputs connect with language reasoning layers that identify intent, entities, and conversational meaning, enabling automation systems to execute accurate operational actions.

Real-Time Workflow Activation: Recognized speech is converted into structured triggers that automatically initiate CRM updates, compliance checks, escalation workflows, or analytics pipelines.

Dynamic Vocabulary Control: Pulse STT supports runtime customization for domain-specific terminology, ensuring automation systems capture technical phrases, product names, and internal commands accurately.

Scalable Deployment Across Automation Environments: Flexible cloud, edge, and on-premises deployments keep latency stable and ensure secure voice processing for enterprise automation workflows at scale.

Together, these technologies enable businesses to build automated systems that listen, interpret, and respond instantly during live conversations.

Conclusion

Speech recognition in AI automation systems is no longer just a background feature teams implement and forget. In real workflows, it affects how quickly automation reacts, how accurately systems trigger actions, and how smoothly voice-driven processes run without manual delays. Teams that succeed treat speech recognition as a live operational layer that must perform reliably under real conversations, real noise, and real business pressure.

If you are building or scaling automation where voice drives decisions and workflows, this is where platforms like Smallest AI make a difference. With real-time speech recognition, conversational intelligence, and automated execution working together, voice inputs move seamlessly from spoken interaction to system action.

Explore how Smallest AI supports production-ready speech recognition built for scalable AI automation systems. Get in touch with us!

How does speech recognition performance change during long automation workflows?

Why do accurate transcripts still lead to automation errors?

Does speech recognition accuracy vary across different automation environments?

Yes. Noise, accents, and audio channels affect results. AI automation systems use noise filtering and adaptive training to maintain consistency.

Can speech recognition be optimized differently for analytics versus real-time automation?