Contact Center AI in 2026: Platform Categories and Best-Fit Solutions

Prithvi Bharadwaj

Compare the best contact center AI platforms in 2026. Covers platform categories, evaluation criteria, ROI calculation, and top solutions for voice automation.

Contact center AI has moved well past the experimental phase. Voice agents, automated transcription, intelligent routing, and real-time agent assist are now standard considerations for any customer-facing operation at scale, and the organizations that have moved from pilot to production are pulling ahead of those still evaluating. The gap between adopting AI and integrating it into production is where most organizations are quietly losing ground, and it is the gap this article is built around.

This is written for operations leaders, CX architects, and technical decision-makers who need to evaluate platforms seriously, not collect vendor brochures. You will come away understanding what separates capable contact center AI from the kind that generates more tickets than it closes, which solution categories matter in 2026, and how to match platform capabilities to your actual use case. For broader operational context alongside this platform comparison, the ultimate guide to contact center automation is worth reading in parallel.

What Contact Center AI Actually Does (and What It Does Not)

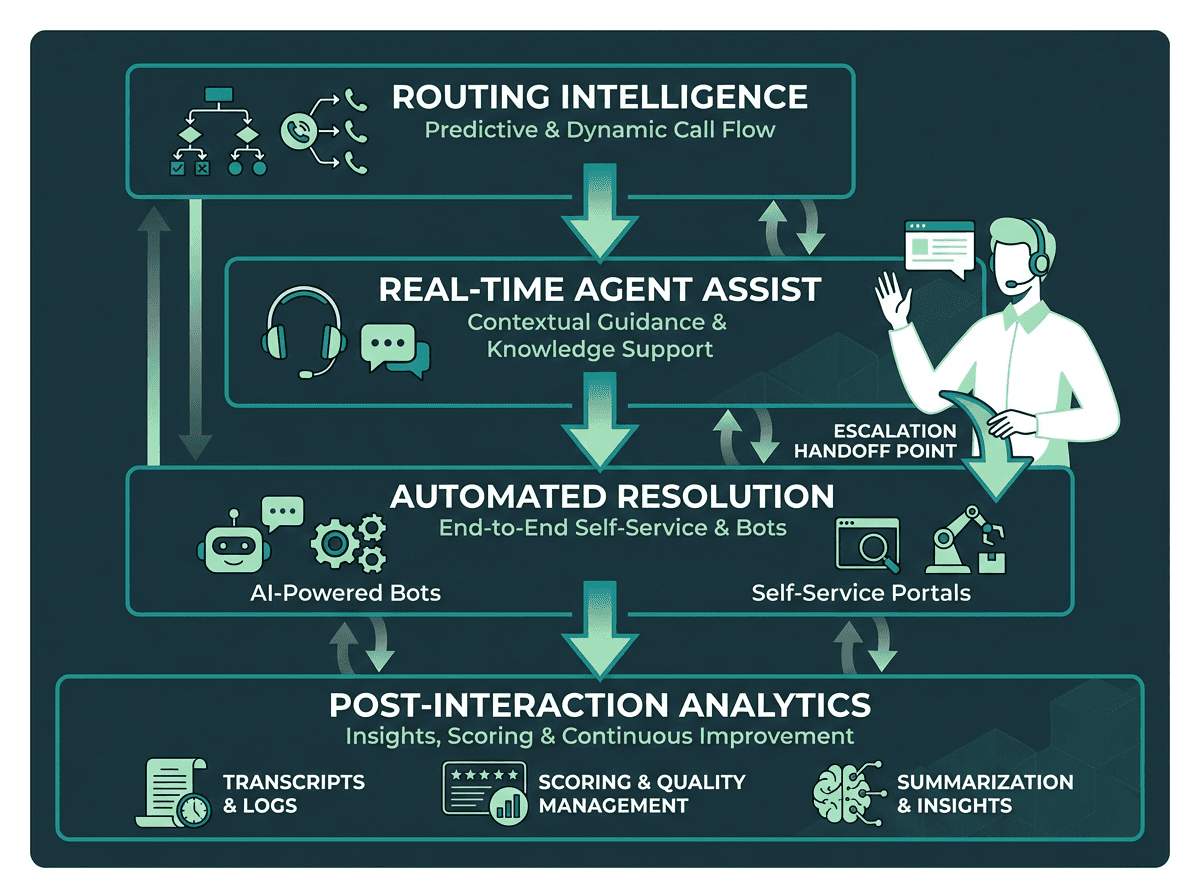

The term gets applied to everything from basic IVR upgrades to fully autonomous voice agents, which makes vendor comparisons genuinely confusing. At a functional level, contact center AI operates across four distinct layers: routing intelligence (deciding who or what handles an interaction), real-time assistance (supporting human agents during live calls), automated resolution (handling interactions end-to-end without a human), and post-interaction analytics (transcription, summarization, quality scoring). Most platforms claim all four. Few execute all four well.

What contact center AI does not do, at least not reliably yet, is replace nuanced human judgment in complex, emotionally charged escalations. The honest framing: AI handles volume, speed, and consistency; humans handle ambiguity, empathy, and edge cases that fall outside training data. The best deployments in 2026 are designed around that division of labor rather than treating automation as a binary replacement decision.

Effective contact center AI operates across four distinct functional layers, not just one.

The 2026 Platform Landscape: Five Categories Worth Knowing

Listing every vendor alphabetically is less useful than understanding the category each platform occupies. Procurement decisions that start with category fit consistently outperform those that start with feature checklists. The market in 2026 has consolidated around five meaningful solution types.

| Category | Primary Strength | Best Fit For | Representative Capability |
|---|---|---|---|
| Voice AI Agent Platforms | End-to-end voice automation with low latency | High-volume inbound/outbound voice | Speech-to-speech, real-time TTS, STT |
| Conversation Intelligence | Post-call analytics, QA, coaching | Quality assurance teams | Transcription, sentiment, topic detection |
| Agent Assist Platforms | Real-time in-call guidance | Blended human+AI centers | Live prompts, knowledge retrieval, summarization |
| CCaaS with AI Layer | Full omnichannel suite | Enterprises replacing legacy infrastructure | Routing, CRM integration, reporting |
| Developer-First Speech APIs | Flexible, low-level voice primitives | Engineering teams building custom flows | STT, TTS, voice cloning, streaming APIs |

Voice AI Agent Platforms have attracted the most investment in 2026, driven by a clear shift toward autonomous agents that resolve calls without any human involvement. Before committing to this category, understanding how AI voice agents cut costs is essential, because the ROI math depends heavily on call deflection rates, not just licensing costs.

Key Platforms and What Sets Them Apart

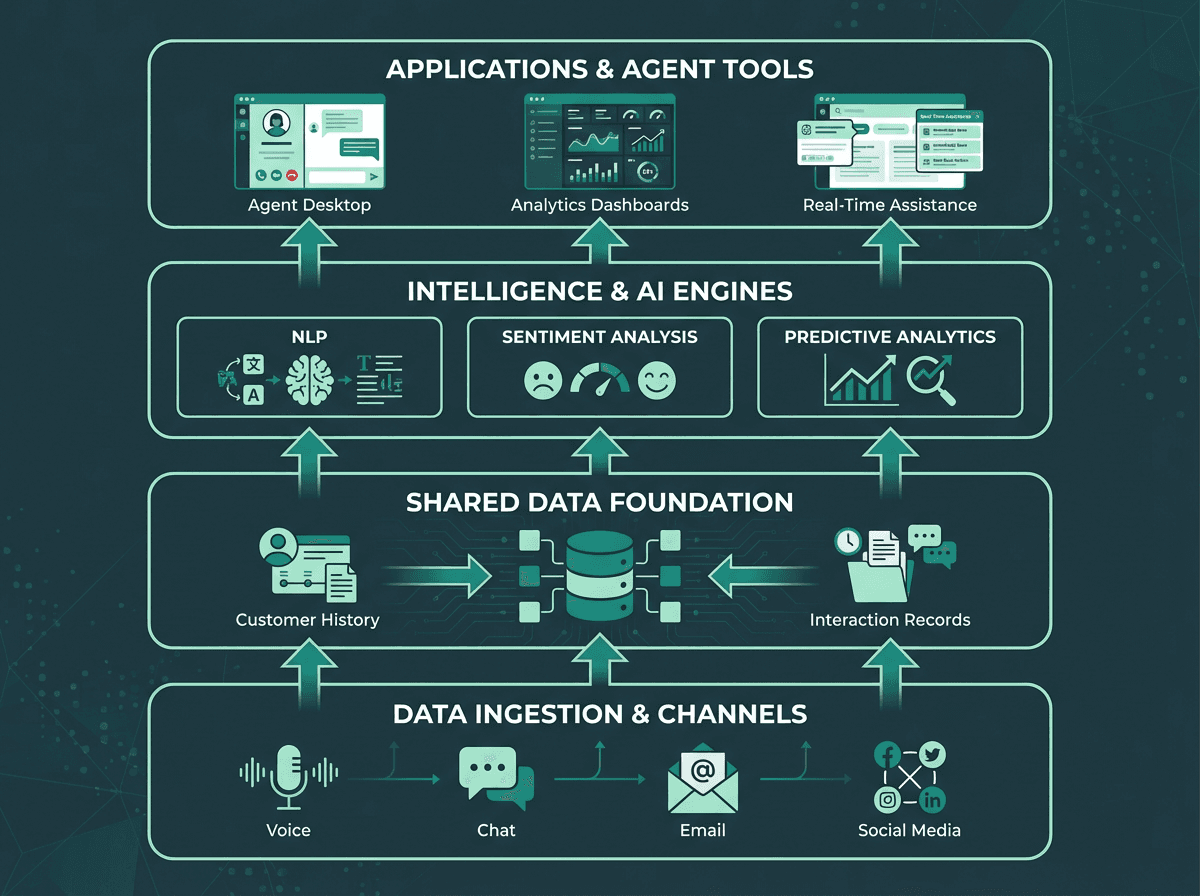

A typical contact center AI system integrates multiple layers for data, intelligence, and agent assistance.

Smallest.ai

Smallest.ai's AI contact center solution is built around a tight stack of purpose-built speech and language primitives: Lightning (text-to-speech API), Pulse (speech-to-text), Electron (a conversational small language model), and Atoms (the voice and text agent platform). The architecture is optimized for real-time performance, which matters enormously in voice applications where latency above 300ms noticeably degrades caller experience. Lightning is designed to achieve sub-100ms time-to-first-audio in real-time scenarios. The target audience is developers and operations teams who want to build or deploy voice agents without stitching together five different vendor APIs. The Waves API gives engineering teams direct access to the full speech stack. Pricing details, including tiers structured for both startup-scale and enterprise-scale deployments, are available on the Smallest.ai pricing page.

ElevenLabs

ElevenLabs is primarily a voice synthesis platform. It offers conversational AI agents as part of its product suite, with a focus on voice output quality. For teams that need deep telephony integration, real-time latency optimization, or post-call analytics, it will need to be paired with additional tools. The platform covers the voice layer but does not offer a full contact center stack.

AssemblyAI

AssemblyAI focuses on post-call audio intelligence: transcription, summarization, sentiment analysis, and topic detection. It is not a real-time voice agent platform and does not offer native TTS or agent orchestration. Organizations that already have a voice layer in place and need to extract structured data from call recordings will find it relevant for that specific function.

Deepgram

Deepgram is a speech-to-text API provider. Its core focus is transcription speed and accuracy. The platform also offers TTS through its Aura models, though synthesis is not its primary offering. Teams using Deepgram for contact center deployments will still need to source agent orchestration and conversational AI elsewhere, and possibly a dedicated TTS layer, adding integration complexity to the stack.

Cartesia

Cartesia offers a text-to-speech model focused on low-latency synthesis. It is a developer tool, not a packaged contact center solution, and covers only the voice output layer. Teams building on it will need to build or integrate STT, agent orchestration, and conversation management separately.

How to Evaluate a Platform: The Questions That Actually Matter

Feature checklists produce shelfware. The questions that separate good procurement decisions from regrettable ones are operational and architectural. Before shortlisting any platform, get clear answers to these:

Evaluation questions that matter:

What is the end-to-end latency from caller speech to AI response, measured in your network environment, not the vendor's demo environment?

How does the platform handle mid-call interruptions and barge-ins? Many voice AI systems break when a caller speaks over the agent response.

What does the escalation path look like? When the AI cannot resolve an issue, how cleanly does it hand off to a human, and does context transfer with the call?

What are the data residency and compliance options? For healthcare, finance, and public sector, this is non-negotiable.

What does the pricing model look like at 10x your current volume? Per-minute, per-seat, and outcome-based models have very different cost curves at scale.
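The pricing question above is easier to answer with a small model than with a vendor's rate card. The sketch below compares the three pricing shapes at current volume and at 10x. Every rate, seat capacity, and volume figure in it is a hypothetical placeholder, not a quote from any vendor; substitute the numbers from your own contracts.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class Volume:
    monthly_minutes: float   # total handled minutes per month
    monthly_calls: int       # total calls per month
    resolved_share: float    # fraction of calls the AI resolves end-to-end


def per_minute_cost(v: Volume, rate_per_minute: float) -> float:
    """Usage-based pricing: cost scales linearly with minutes."""
    return v.monthly_minutes * rate_per_minute


def per_seat_cost(v: Volume, seat_price: float, minutes_per_seat: float) -> float:
    """Seat-based pricing: cost steps up in whole seats."""
    seats = math.ceil(v.monthly_minutes / minutes_per_seat)
    return seats * seat_price


def outcome_cost(v: Volume, price_per_resolution: float) -> float:
    """Outcome-based pricing: pay only for calls the AI resolves."""
    return v.monthly_calls * v.resolved_share * price_per_resolution


def scale(v: Volume, factor: int) -> Volume:
    """Same call mix, multiplied volume."""
    return Volume(v.monthly_minutes * factor, v.monthly_calls * factor, v.resolved_share)


# All figures below are hypothetical placeholders, not vendor pricing.
today = Volume(monthly_minutes=50_000, monthly_calls=12_500, resolved_share=0.4)

for label, v in (("today", today), ("10x", scale(today, 10))):
    print(
        label,
        "per-minute:", round(per_minute_cost(v, 0.08)),
        "per-seat:", round(per_seat_cost(v, 150.0, 8_000)),
        "outcome:", round(outcome_cost(v, 0.90)),
    )
```

Running the same function at 1x and 10x shows which model becomes the most expensive as volume grows, which is often not the one that looks most expensive today.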

For a structured framework on comparing solutions head-to-head, the guide on choosing the best voice AI walks through the evaluation criteria that enterprise teams consistently find most predictive of deployment success.

The ROI Calculation Most Teams Get Wrong

The financial case for AI in contact centers is well established: cost reduction through call deflection is real and measurable. So is the organizational disruption. The mistake most teams make in the ROI calculation is treating deployment as a technology installation; the teams that see the strongest returns treat it as a workflow redesign project.

That means mapping which call types are genuinely automatable (typically high-volume, low-complexity: balance inquiries, appointment scheduling, status updates), piloting on those before expanding, and measuring deflection rate and customer satisfaction together, not separately. Cost reduction without satisfaction maintenance is a short-term win that creates long-term churn. For a detailed breakdown of the business case, how conversational AI boosts ROI covers the metrics and measurement approaches that hold up under scrutiny.
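One way to keep deflection and satisfaction tied together is to make the savings figure conditional on a satisfaction guardrail. The sketch below is a minimal illustration of that idea; the field names, the 0.1-point tolerance, and the example figures are assumptions chosen for the example, not benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotResult:
    calls: int                  # monthly calls in the piloted call type
    deflection_rate: float      # share resolved by AI with no human touch
    cost_per_human_call: float
    cost_per_ai_call: float
    csat_before: float          # satisfaction score before the pilot
    csat_after: float           # satisfaction score during the pilot


def monthly_savings(p: PilotResult) -> float:
    """Savings come only from deflected calls; the rest still reach a human."""
    deflected = p.calls * p.deflection_rate
    return deflected * (p.cost_per_human_call - p.cost_per_ai_call)


def passes_guardrail(p: PilotResult, max_csat_drop: float = 0.1) -> bool:
    """Count the savings only if satisfaction has not dropped too far."""
    return (p.csat_before - p.csat_after) <= max_csat_drop


def verdict(p: PilotResult) -> str:
    if not passes_guardrail(p):
        return "hold: satisfaction dropped, fix the flow before expanding"
    if monthly_savings(p) <= 0:
        return "hold: no savings at this deflection rate"
    return "expand"


# Hypothetical pilot on a single call type (for example, status updates).
healthy = PilotResult(20_000, 0.35, 6.0, 1.0, csat_before=4.3, csat_after=4.25)
costly = PilotResult(20_000, 0.50, 6.0, 1.0, csat_before=4.3, csat_after=3.9)

print(verdict(healthy), round(monthly_savings(healthy)))
print(verdict(costly), round(monthly_savings(costly)))
```

The second pilot deflects more calls and saves more on paper, yet it is held back, which is the behavior the paragraph above argues for.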

What 2026 Trends Are Actually Reshaping Deployment

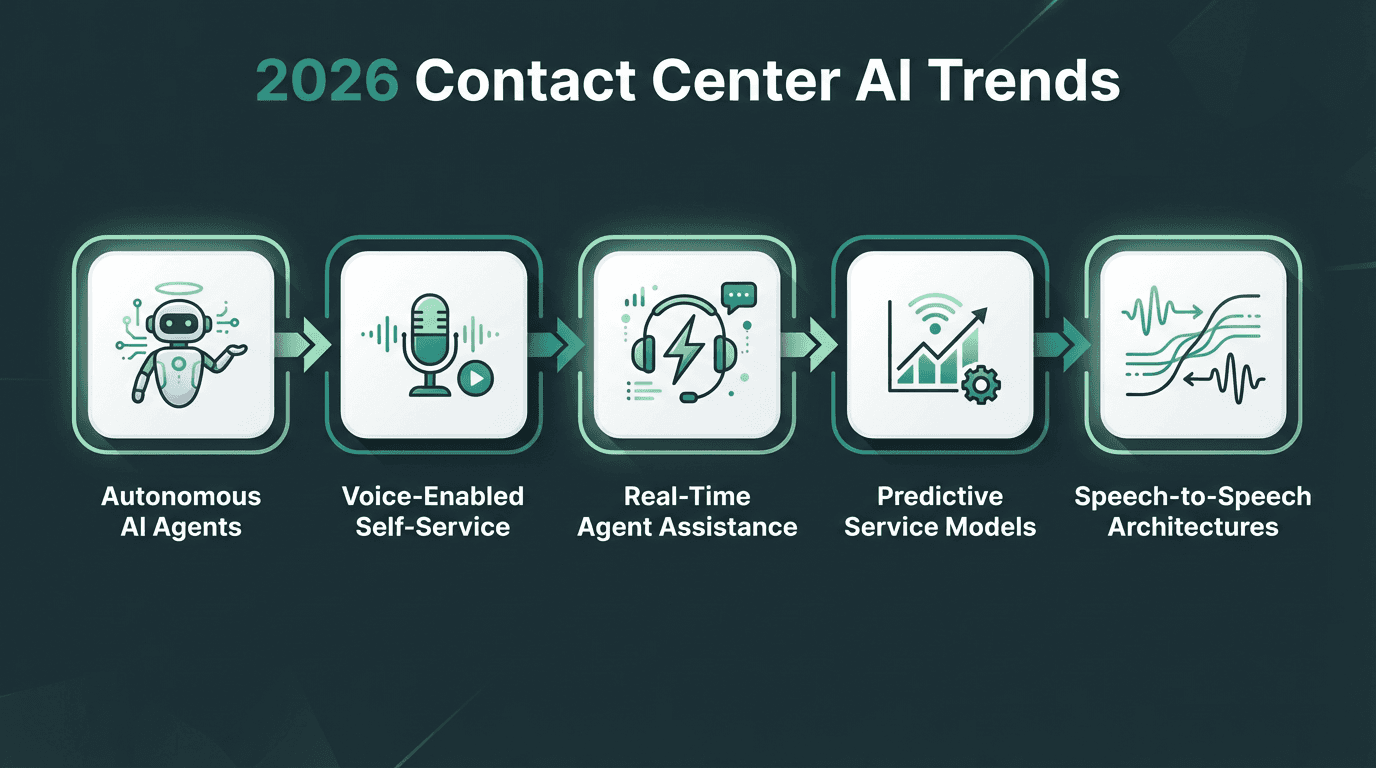

Five trends are reshaping how contact center AI is architected and deployed in 2026.

Five trends are materially changing what 'good' looks like for contact center AI in 2026: autonomous AI agents capable of full call resolution, voice-enabled self-service that goes beyond DTMF menus, real-time agent assistance during live interactions, predictive service that surfaces issues before customers call, and speech-to-speech architectures that eliminate the latency introduced by text as an intermediate step.

That last trend deserves more attention than it typically gets. Traditional voice AI pipelines convert speech to text, process it through a language model, then convert the response back to speech. Each conversion adds latency and a new surface for error. Speech-to-speech models such as Smallest.ai's Hydra are designed to reduce that pipeline latency, which is why the architecture is gaining traction in high-volume, latency-sensitive environments. For a forward-looking view on where these shifts are heading, the contact center automation trends analysis covers the architectural and operational changes that will define the next 18 months.
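The latency argument can be made concrete with a simple budget: if stages run one after another, their delays add. The sketch below sums per-stage latencies for a cascaded pipeline and for a speech-to-speech pipeline against the 300ms threshold mentioned earlier. The stage names and millisecond values are invented for illustration and are not measurements of any product; streaming implementations can also overlap stages, which this simple sum ignores.

```python
# Hypothetical per-stage latencies in milliseconds. Replace with values
# measured in your own network environment.
CASCADED = {
    "stt_final_transcript": 150,
    "llm_first_token": 200,
    "tts_first_audio": 100,
    "network_round_trip": 60,
}
SPEECH_TO_SPEECH = {
    "model_first_audio": 220,
    "network_round_trip": 60,
}

BUDGET_MS = 300  # threshold above which callers notice the delay


def total_latency(stages: dict[str, int]) -> int:
    """Assumes stages run strictly in sequence, so their latencies add."""
    return sum(stages.values())


def over_budget(stages: dict[str, int], budget_ms: int = BUDGET_MS) -> int:
    """Milliseconds by which the pipeline exceeds the budget (0 if within)."""
    return max(0, total_latency(stages) - budget_ms)


for name, stages in (("cascaded", CASCADED), ("speech-to-speech", SPEECH_TO_SPEECH)):
    print(name, total_latency(stages), "ms, over budget by", over_budget(stages), "ms")
```

The useful part is the structure rather than the numbers: each conversion step is a separate line item, so removing or speeding up one stage has a visible effect on the total.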

Implementation: The Decisions That Determine Outcome

As a rough rule of thumb, platform selection accounts for about 40% of the deployment outcome. The other 60% is implementation quality. Three decisions consistently separate successful deployments from stalled ones.

The first is training data. Voice AI models perform best when trained or fine-tuned on call data that reflects your actual caller population, including accents, domain vocabulary, and call patterns. Generic models trained on clean studio audio will underperform on real contact center audio. Ask every vendor for accuracy benchmarks on data that resembles yours, not their benchmark dataset.
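To check a vendor's accuracy claims on your own audio, one common metric is word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn the vendor's transcript into a human-verified reference, divided by the reference length. The sketch below is a minimal implementation using word-level edit distance. Choosing WER as the metric and the lowercase, whitespace-split normalization are assumptions made for this example; real evaluations usually normalize punctuation and numbers as well.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # substitution or exact match
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical transcripts: a human-verified reference vs. a vendor's output.
print(word_error_rate("check my account balance", "check my count balance"))
```

Computing this per accent group or per call type, rather than as one average, shows where a generic model falls short on your caller population.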

The second is integration architecture. The most common implementation failure is a voice AI layer that cannot write back to the CRM in real time, leaving agents handling escalations with no context from the AI interaction. Before signing any contract, map the data flow from AI interaction to CRM record to agent screen. If that path has manual steps, the efficiency gains evaporate. Real-world deployments in hospitality and service industries have demonstrated this clearly, as explored in AI enhancements in hotel customer service case studies.

The third is fallback design. Every voice AI interaction needs a defined escalation path, and that path needs to be tested as rigorously as the primary flow. The cases where AI fails are often the cases where customer frustration is already highest. A poorly designed fallback turns a containment failure into a churn event.
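A fallback path is easier to test when the escalation rule and the context it hands over are explicit. The sketch below shows one possible shape: a rule that escalates on a direct request or on repeated failures, and a handoff record carrying the detected intent and recent transcript to the human agent. The class names, fields, and the two-failure threshold are hypothetical and not taken from any platform's API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CallState:
    call_id: str
    intent: Optional[str] = None
    failed_turns: int = 0                 # consecutive turns the AI could not handle
    caller_asked_for_human: bool = False
    transcript: list[str] = field(default_factory=list)


@dataclass(frozen=True)
class Handoff:
    call_id: str
    reason: str
    intent: Optional[str]
    recent_transcript: tuple[str, ...]    # context shown on the agent's screen


def maybe_escalate(state: CallState, max_failed_turns: int = 2) -> Optional[Handoff]:
    """Return a Handoff when the call should go to a human, else None."""
    if state.caller_asked_for_human:
        reason = "caller_request"
    elif state.failed_turns >= max_failed_turns:
        reason = "repeated_failure"
    else:
        return None
    # Carry the last few turns so the agent does not start from zero.
    return Handoff(state.call_id, reason, state.intent, tuple(state.transcript[-5:]))


# Hypothetical call that fails twice on the same request.
state = CallState(call_id="c-001", intent="refund_status", failed_turns=2,
                  transcript=["Caller: where is my refund", "AI: could you repeat that"])
print(maybe_escalate(state))
```

Because the rule is a pure function of the call state, the escalation cases can be unit-tested alongside the primary flow instead of being discovered in production.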

Key Takeaways

What to carry forward:

Contact center AI operates across four layers: routing, real-time assist, automated resolution, and post-interaction analytics. Evaluate platforms against all four, not just the one you need most urgently.

Platform category fit matters more than feature count. Match your use case to the right category before comparing vendors within it.

The ROI case is real but requires workflow redesign. Measure deflection rate and satisfaction together, not separately.

Speech-to-speech architectures remove the text conversion steps that add latency, which makes them a strong fit for latency-sensitive voice deployments in 2026.

Implementation quality (training data, integration architecture, fallback design) determines outcome more than platform selection alone.

The Problem Most Platforms Do Not Solve

The core problem in contact center AI is not a shortage of platforms. It is the gap between what vendors demonstrate and what actually works at production scale, under real call conditions, with real caller accents and genuine integration complexity. Most platforms are assembled from third-party components: one vendor's STT, another's LLM, another's TTS, stitched together with latency accumulating at every seam. That architecture holds up in demos. It struggles when call volume spikes, when a caller has a regional accent the STT was not trained on, or when the CRM API is slow and the voice agent has to wait.

Smallest.ai is built differently. The Lightning, Pulse, Hydra, Electron, and Atoms components are designed as a unified stack, not a vendor aggregation, which means latency is optimized across the full pipeline rather than just within each component. For teams building or scaling voice automation in 2026, Smallest.ai's AI contact center solution is one of the most complete options, precisely because it addresses the integration and latency problems that cause production deployments to underperform their pilots.

What is contact center AI and how is it different from a traditional IVR?

How do I know if my contact center is ready for AI automation?

What is the difference between agent assist and a fully autonomous AI agent?

Agent assist tools work alongside a human agent during a live call, surfacing suggested responses, relevant knowledge base articles, or compliance prompts in real time. The human still drives the conversation. A fully autonomous AI agent handles the entire interaction without human involvement, from greeting to resolution or escalation. Many contact centers deploy both: autonomous agents for high-volume, low-complexity calls and agent assist for complex or sensitive interactions that require a human.

What should I look for in contact center AI pricing models?