Emotion in Text to Speech: A Complete Guide to Human Like AI Voices

Hamees Sayed

Experience human-like voices with emotional text to speech. Enhance customer service and engage users. Discover cutting-edge TTS tools!

Robotic, monotone AI voices often leave users disconnected, reducing engagement and comprehension. Text-to-speech emotion changes this by adding natural tone, pitch, and context, making AI voices sound human, empathetic, and expressive. From customer service to e-learning and media, emotionally intelligent voices improve understanding, hold attention, and create memorable interactions.

With the global text-to-speech market expected to surpass USD 104.05 billion by 2034, the demand for expressive, real-time AI voices is rapidly growing. Understanding how text-to-speech emotion works, its applications, and industry benchmarks is essential for businesses aiming to deliver authentic, human-like voice experiences at scale.

This guide covers the science, applications, and future of emotion in text-to-speech, showing how emotionally aware AI voices transform digital interactions and create more engaging, human-like experiences.

Key Takeaways

Emotional TTS Brings AI Voices to Life: Adds tone, pitch, and context to make voices human-like, expressive, and engaging.

Real-Time Generation Ensures Natural Interaction: Sub-100ms latency allows AI voices to respond instantly in live applications.

Prosody and Emotion Mapping Define Authenticity: Pitch modulation, speech rate, pauses, and intonation create believable, relatable speech.

Multilingual Support Expands Global Reach: Emotion is conveyed accurately across 30+ languages and accents, enhancing comprehension.

Engagement and Retention Improve Across Use Cases: Learners, customers, and audiences understand content better and feel more connected.

What Is Text-to-Speech Emotion and Why Does It Matter?

Emotional Text-to-Speech (TTS) turns written text into speech that sounds human and expressive. It goes beyond standard TTS by adding feelings such as excitement, calmness, or empathy, making the voice more natural and engaging.

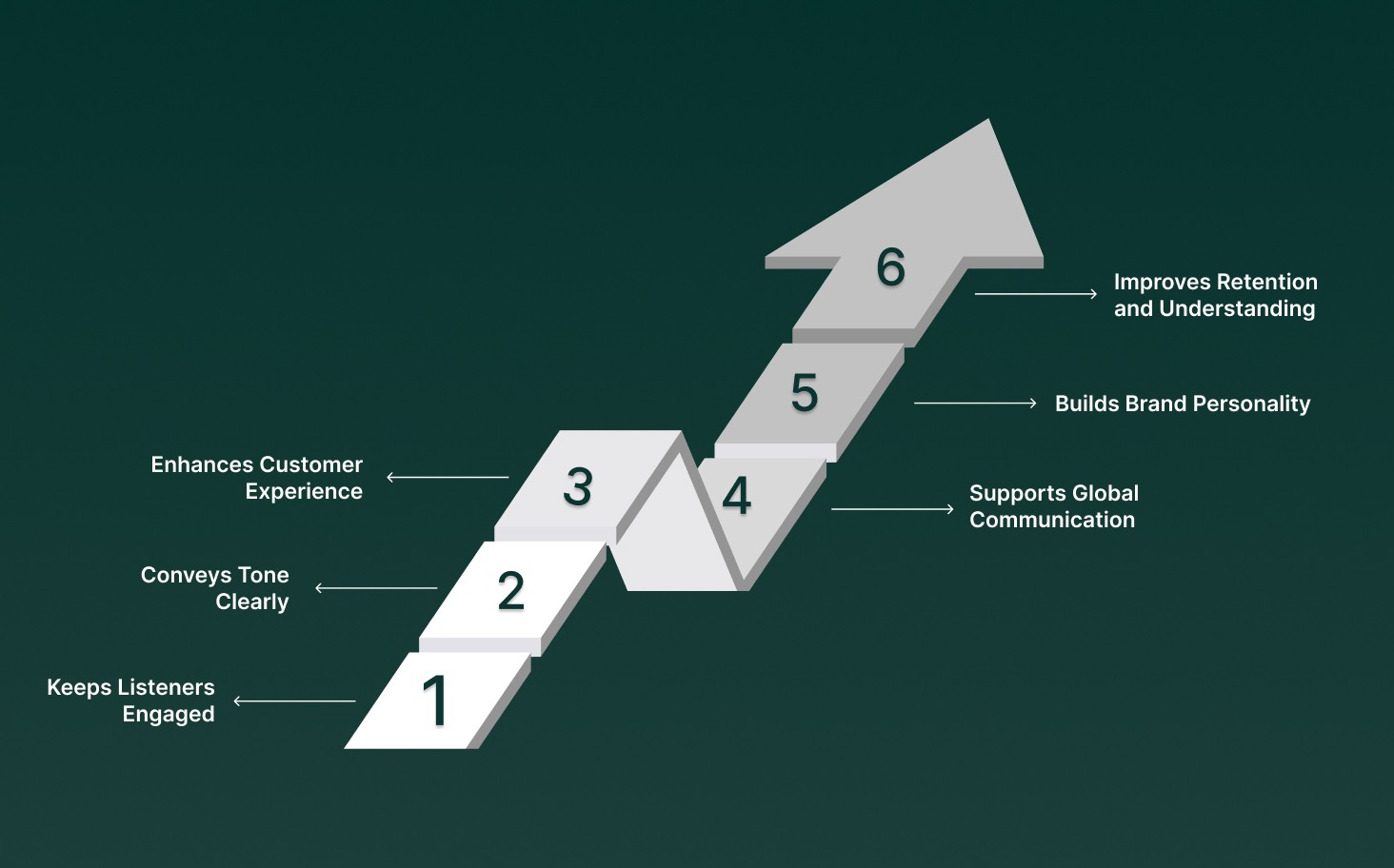

This matters because emotional expression in speech helps listeners connect, understand, and respond more effectively. It improves learning, customer interactions, and communication across languages and accents. Here’s why it’s important:

Keeps Listeners Engaged: Voices with emotion hold attention in e-learning, podcasts, or interactive systems.

Conveys Tone Clearly: Emotional cues help the listener understand the meaning behind words.

Enhances Customer Experience: Empathetic AI voices make automated calls and responses feel more natural.

Supports Global Communication: Works across multiple languages and accents, making messages clear for everyone.

Builds Brand Personality: A voice with emotion can reflect brand values, making interactions memorable.

Improves Retention and Understanding: Emotionally expressive speech helps listeners remember information better and reduces misinterpretation.

After exploring why emotional TTS matters, the next step is to understand how it works behind the scenes.

4 Core Technologies of Emotional Text-to-Speech and How They Work

The emotional text-to-speech (TTS) process runs through three stages (text analysis, prosody modeling, and voice synthesis) and depends on a fourth component, training on emotional speech datasets. Understanding these steps explains how AI produces speech that feels natural and expressive.

1. Text Analysis

The first step is understanding the text. AI uses natural language processing (NLP) to:

Detect Sentiment: Identifies whether the text is happy, sad, urgent, or neutral. For example, “I’m thrilled to meet you” is perceived as positive and excited.

Understand Context: Analyzes surrounding words to avoid misinterpreting sarcasm, idioms, or irony.

Map Emotion: Translates the detected sentiment into parameters for speech, including tone, pitch, and emphasis.

By analyzing the text, AI creates a blueprint of the intended emotion and the context in which the words are spoken.
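
The text-analysis step can be sketched in a few lines of Python. This is a minimal illustration, not how any production system works: real systems use trained NLP models, while this sketch assumes a tiny hand-written lexicon. `EMOTION_LEXICON`, `NEGATORS`, and `detect_emotion` are hypothetical names introduced for the example, and the weights are placeholders.

```python
import re
from dataclasses import dataclass

# Hypothetical mini-lexicon: cue word -> (emotion label, weight).
# The words and weights are illustrative placeholders only.
EMOTION_LEXICON = {
    "thrilled": ("excited", 2.0),
    "delighted": ("excited", 1.5),
    "happy": ("excited", 1.0),
    "sorry": ("empathetic", 1.5),
    "unfortunately": ("empathetic", 1.0),
    "urgent": ("urgent", 2.0),
    "immediately": ("urgent", 1.5),
}

NEGATORS = {"not", "never", "no"}


@dataclass
class EmotionEstimate:
    label: str
    score: float


def detect_emotion(text: str) -> EmotionEstimate:
    """Sum lexicon weights per emotion and return the strongest one."""
    # Normalize curly apostrophes so contractions tokenize consistently.
    tokens = re.findall(r"[a-z']+", text.lower().replace("’", "'"))
    scores: dict[str, float] = {}
    for i, token in enumerate(tokens):
        if token not in EMOTION_LEXICON:
            continue
        # Crude context check: skip a cue word directly preceded by a negator.
        if i > 0 and tokens[i - 1] in NEGATORS:
            continue
        label, weight = EMOTION_LEXICON[token]
        scores[label] = scores.get(label, 0.0) + weight
    if not scores:
        return EmotionEstimate("neutral", 0.0)
    best = max(scores, key=scores.get)
    return EmotionEstimate(best, scores[best])


print(detect_emotion("I’m thrilled to meet you"))
```

The one-word negation check stands in for the context analysis described above. It would not catch sarcasm or idioms, which is why real systems rely on models that read the whole sentence.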

2. Prosody Modeling

Prosody defines how speech sounds, including rhythm, pitch, speed, volume, and pauses. Once the emotion is mapped, AI adjusts these elements to make the voice feel expressive and natural:

Pitch Modulation: Rising or falling pitch conveys curiosity, excitement, or concern.

Speech Rate: Faster speech signals urgency; slower speech indicates calmness or seriousness.

Pauses and Volume: Strategic pauses highlight important points and emphasize meaning.

Intonation Patterns: Adjusting tone helps reflect joy, sadness, or surprise.

Prosody serves as the bridge between written emotion and audible expression, ensuring that speech clearly reflects the intended feeling.
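
One common way to hand prosody settings to a speech engine is SSML, the W3C markup whose `<prosody>` and `<break>` elements control pitch, rate, volume, and pauses. The sketch below maps an emotion label to such markup. The preset values and the names `Prosody`, `PROSODY_PRESETS`, and `to_ssml` are assumptions made for illustration, and engines differ in which SSML attributes they honor.

```python
from dataclasses import dataclass
from xml.sax.saxutils import escape


@dataclass(frozen=True)
class Prosody:
    pitch: str     # relative pitch change, e.g. "+15%"
    rate: str      # speaking rate as a percentage, e.g. "110%"
    volume: str    # SSML volume label, e.g. "loud"
    pause_ms: int  # pause inserted after the sentence


# Hypothetical presets; real values would be tuned by listening tests.
PROSODY_PRESETS = {
    "excited": Prosody(pitch="+15%", rate="110%", volume="loud", pause_ms=150),
    "calm": Prosody(pitch="-5%", rate="90%", volume="medium", pause_ms=400),
    "urgent": Prosody(pitch="+5%", rate="120%", volume="loud", pause_ms=100),
    "neutral": Prosody(pitch="+0%", rate="100%", volume="medium", pause_ms=250),
}


def to_ssml(text: str, emotion: str) -> str:
    """Wrap text in SSML prosody markup for the given emotion label."""
    # Unknown labels fall back to the neutral preset.
    p = PROSODY_PRESETS.get(emotion, PROSODY_PRESETS["neutral"])
    return (
        "<speak>"
        f'<prosody pitch="{p.pitch}" rate="{p.rate}" volume="{p.volume}">'
        f"{escape(text)}"  # escape &, <, > so the markup stays well-formed
        "</prosody>"
        f'<break time="{p.pause_ms}ms"/>'
        "</speak>"
    )


print(to_ssml("I'm thrilled to meet you", "excited"))
```

Keeping the presets in a table rather than in code paths makes it easy to adjust a single emotion without touching the rendering logic.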

3. Voice Synthesis

The final stage is generating the voice. Neural AI models take the text and prosody instructions to produce speech:

They learn from large datasets of human speech, which include different accents, ages, and speaking styles.

Models adjust timing, stress, and tone to match the intended emotion.

Output is generated in real time, often with sub-100ms latency, making it suitable for live applications such as IVR systems and AI agents, and for fast turnaround on content like podcasts.

The synthesis stage ensures the AI voice is realistic, expressive, and maintains clarity across different languages and contexts.

4. Emotional Dataset Training

AI models rely on labeled datasets with emotional speech examples to learn patterns of human expression:

The system recognizes how happiness, sadness, or urgency sounds in real speech.

Training allows AI to generalize across new sentences and maintain emotional accuracy.

Continuous refinement ensures the AI adapts to multiple languages, dialects, and voice styles.
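
To make the idea of a labeled emotional dataset concrete, the sketch below shows one possible record layout and a coverage check that flags language and emotion combinations with too few examples. The field names, the `LabeledUtterance` type, and both helper functions are hypothetical. Real training corpora and pipelines will look different.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledUtterance:
    audio_path: str  # hypothetical path to the recording
    transcript: str
    emotion: str     # e.g. "happy", "sad", "urgent"
    language: str    # e.g. "en", "hi"


def coverage(utterances):
    """Count examples per (language, emotion) pair."""
    return Counter((u.language, u.emotion) for u in utterances)


def underrepresented(utterances, min_count):
    """Return (language, emotion) pairs with fewer than min_count examples."""
    counts = coverage(utterances)
    languages = {u.language for u in utterances}
    emotions = {u.emotion for u in utterances}
    return sorted(
        (lang, emo)
        for lang in languages
        for emo in emotions
        if counts[(lang, emo)] < min_count  # Counter returns 0 for missing pairs
    )


data = [
    LabeledUtterance("clips/0001.wav", "That is wonderful news!", "happy", "en"),
    LabeledUtterance("clips/0002.wav", "We did it!", "happy", "en"),
    LabeledUtterance("clips/0003.wav", "I am sorry for your loss.", "sad", "en"),
    LabeledUtterance("clips/0004.wav", "Bahut badhiya!", "happy", "hi"),
]
print(underrepresented(data, min_count=2))
```

A check like this is one way to spot gaps before training, since a model is unlikely to express an emotion well in a language where it has seen few examples.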

How It All Comes Together

By combining text analysis, prosody modeling, and neural synthesis, with models trained on emotional speech data, emotional TTS systems produce voices that sound human, expressive, and contextually aware. Every step (detecting emotion, adjusting rhythm and pitch, and generating realistic speech) is calibrated to make digital voices feel natural and engaging.

To fully appreciate the value of emotional TTS, it helps to compare it with traditional voice technologies.

How Emotional TTS Compares to Other Voice Technologies

To understand why emotional text-to-speech (TTS) is becoming essential for modern AI applications, it’s helpful to see how it differs from standard TTS and basic AI voice systems.

Unlike traditional voices that sound robotic or flat, emotional TTS adds nuance, context, and expressiveness, making digital interactions more natural, engaging, and human-like. The table below highlights key differences:

| Feature / Aspect | Standard TTS | Basic AI TTS | Emotional TTS (Text-to-Speech Emotion) | Key Benefit of Emotional TTS |
| --- | --- | --- | --- | --- |
| Voice Naturalness | Robotic, monotone | Slightly natural, limited expression | Human-like, expressive | Sounds authentic, builds listener trust |
| Emotion & Tone | None | Minimal variations | Dynamic emotion: happiness, sadness, urgency, calmness, empathy | Conveys mood, enhances engagement |
| Context Awareness | No | Limited | Understands sentiment, intent, and context | Reduces misinterpretation, improves comprehension |
| Pitch & Prosody Control | Fixed | Basic modulation | Full control over pitch, speed, volume, intonation | Matches desired emotional effect precisely |
| Real-Time Interaction | Often delayed | Partial support | Fully real-time, sub-100ms latency | Ideal for IVR, live agents, and interactive systems |
| Multilingual Support | Limited | 10–20 languages | 30+ languages with accurate emotional mapping | Reaches global audiences with consistent emotion |
| Engagement & Retention | Low | Medium | High: keeps listeners attentive | Improves learning, satisfaction, and information retention |
| Use Cases | Navigation prompts, basic announcements | Voice assistants, chatbots | Customer service, e-learning, audiobooks, gaming, accessibility | Expands impact across industries |

By using real-time, multilingual, and nuanced emotional capabilities, businesses and creators can deliver more immersive and memorable experiences. While emotional TTS delivers powerful benefits, implementing expressive AI voices also introduces technical and ethical considerations.

Text-to-Speech Emotion: Challenges, Ethics, and Best Practices

Creating AI voices that express emotion is transforming digital interactions across education, customer support, media, and global business. Text-to-speech emotion allows AI to convey tone, empathy, and intent, making conversations feel natural and engaging.

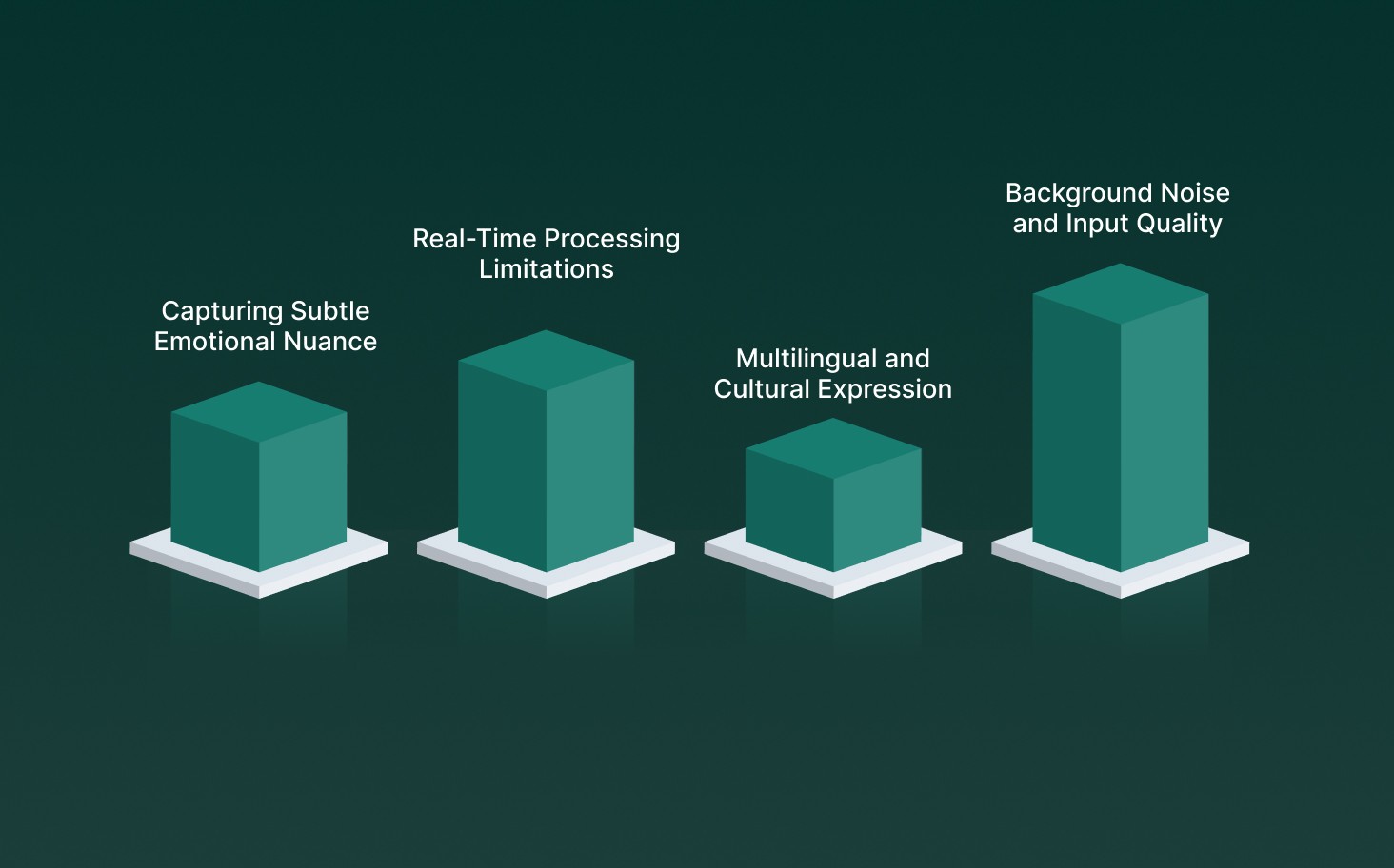

However, developing emotionally expressive AI voices comes with technical and ethical hurdles. Below are the main challenges, along with best practices to prevent or mitigate them.

1. Capturing Subtle Emotional Nuance

Challenge: Human emotions are complex, expressed through pitch, rhythm, intonation, and pauses. AI models often struggle to replicate these subtleties.

Best Practice: Use advanced deep learning models trained on diverse emotional datasets and continuously fine-tune with real-world audio examples to improve authenticity.

2. Real-Time Processing Limitations

Challenge: Generating expressive voices in real time can introduce delays or degrade voice quality, especially in interactive systems such as IVR or AI agents.

Best Practice: Optimize models for low-latency inference, leverage ultra-fast TTS engines, and implement caching or streaming strategies to maintain responsiveness.
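
The caching and streaming strategies mentioned here can be sketched as follows. This is an illustration under stated assumptions, not a real engine integration: `fake_synthesize` is a stand-in that sleeps to imitate inference cost, and all function names are hypothetical. The sketch caches repeated phrases (such as fixed IVR greetings) and yields audio sentence by sentence so playback can start before the full response is ready.

```python
import time
from functools import lru_cache
from typing import Iterator, Sequence


def fake_synthesize(text: str, emotion: str) -> bytes:
    """Stand-in for a real TTS call; sleeps to imitate inference cost."""
    time.sleep(0.05)
    return f"{emotion}:{text}".encode("utf-8")


@lru_cache(maxsize=256)
def cached_synthesize(text: str, emotion: str) -> bytes:
    """Reuse audio for phrases that repeat, keyed by (text, emotion)."""
    return fake_synthesize(text, emotion)


def stream_sentences(sentences: Sequence[str], emotion: str) -> Iterator[bytes]:
    """Yield audio one sentence at a time instead of waiting for all of it."""
    for sentence in sentences:
        yield cached_synthesize(sentence, emotion)


def time_to_first_chunk(chunks: Iterator[bytes]) -> float:
    """Measure how long the first audio chunk takes to arrive, in seconds."""
    start = time.perf_counter()
    next(chunks)
    return time.perf_counter() - start


greeting = ["Thanks for calling.", "How can I help you today?"]
cold = time_to_first_chunk(stream_sentences(greeting, "calm"))
warm = time_to_first_chunk(stream_sentences(greeting, "calm"))
print(f"cold: {cold * 1000:.1f} ms, warm: {warm * 1000:.1f} ms")
```

Time to first chunk is usually the number that matters in a live conversation, because the listener only perceives the delay before audio starts, not the total generation time.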

3. Multilingual and Cultural Expression

Challenge: Emotional cues differ across languages and cultures, leading to misinterpretation or unnatural-sounding voices in global applications.

Best Practice: Train models on multilingual emotional datasets, incorporate locale-specific prosody adjustments, and validate outputs with native speakers.
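
Locale-specific prosody adjustments could be expressed as per-locale offsets applied on top of a shared baseline, as in the sketch below. Every number here is a placeholder chosen for the example and makes no claim about how any language actually expresses emotion. In practice the offsets would come from validation with native speakers. `BASE_PROSODY`, `LOCALE_OFFSETS`, and `prosody_for` are hypothetical names.

```python
# Baseline prosody per emotion, as percentages (illustrative values only).
BASE_PROSODY = {
    "excited": {"pitch_pct": 15, "rate_pct": 110},
    "calm": {"pitch_pct": -5, "rate_pct": 90},
}

# Hypothetical per-locale offsets; placeholders, not linguistic findings.
LOCALE_OFFSETS = {
    "en-US": {"pitch_pct": 0, "rate_pct": 0},
    "hi-IN": {"pitch_pct": -3, "rate_pct": -5},
    "es-ES": {"pitch_pct": 2, "rate_pct": 5},
}


def prosody_for(emotion: str, locale: str) -> dict:
    """Apply locale offsets to the baseline; unknown locales use the baseline."""
    base = BASE_PROSODY[emotion]
    offsets = LOCALE_OFFSETS.get(locale, {})
    return {key: value + offsets.get(key, 0) for key, value in base.items()}


print(prosody_for("excited", "hi-IN"))
```

Separating the baseline from the offsets keeps reviewer feedback for one locale from changing how every other locale sounds.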

4. Background Noise and Input Quality

Challenge: When emotion is inferred from audio, such as a caller's speech or a reference recording, poor-quality or noisy input can distort emotion recognition, resulting in inaccurate or monotone AI voices.

Best Practice: Use robust speech preprocessing, noise suppression algorithms, and quality checks on the input before generating TTS output.

As adoption continues to grow, emotional TTS is changing rapidly with new capabilities and innovations. Let’s explore the trends shaping how expressive AI voices will develop in the coming years.

Top 8 Future Trends in Text-to-Speech Emotion

In the coming years, emotional TTS will play a key role in customer service, e-learning, media, accessibility, and conversational AI, bridging the gap between technology and authentic human communication. Here’s a look at the trends that are set to define its future:

Hyper-Realistic Voices: AI will capture subtle emotions, such as empathy during customer support calls or excitement in audiobook narration.

Multilingual Emotional Adaptation: Voices will adjust emotion when switching from English to Hindi or Spanish in e-learning modules.

Context-Aware Speech: Emotional tone will match user queries in chatbots or interactive voice agents.

Integration with AI Agents: TTS will enable lifelike responses in sales calls, virtual receptionists, or gaming NPCs.

Adaptive Modulation: Pitch, speed, and tone will change dynamically in training videos or conversational coaching sessions.

Ethical AI Use: Providers will need to ensure privacy and responsible deployment in healthcare or financial services applications.

Enhanced Accessibility: Emotionally expressive voices improve engagement for visually impaired users or those using assistive tools.

Real-Time Emotional Analytics: Live monitoring of tone helps adjust responses during webinars or interactive workshops.

With a clear view of emerging trends, the next question is where emotional TTS is applied in practice.

Where Emotional Text-to-Speech Makes an Impact: Use Cases and Applications

By adding tone, pitch, and expressiveness, emotional TTS makes interactions more natural, engaging, and human-like. Its impact spans multiple sectors, improving accessibility, learning experiences, entertainment, and customer engagement.

Below are the key areas where emotional TTS is making a difference:

1. Accessibility Applications

Reading Assistance: Converts written content into expressive speech, helping visually impaired users follow along effortlessly.

Language Learning: Highlights emotional cues in speech, aiding learners in understanding context and tone.

Healthcare: Delivers empathetic voices for telemedicine, patient guidance, and wellness support.

2. Education and Entertainment

E-Learning: Keeps learners engaged with lively, expressive voiceovers.

Audiobooks & Podcasts: Adds emotion to storytelling, making content more immersive.

Games & Streaming: Creates realistic, emotionally rich characters for interactive experiences.

3. Customer Service and Business Benefits

Enhanced Customer Experience: Natural, empathetic voices foster positive engagement.

Efficient Service: Real-time emotional speech improves responsiveness and satisfaction.

Brand Identity: Custom emotional voices consistently reflect a company’s personality and tone.

Now that you have seen the real-world impact of emotional TTS, it’s useful to understand how modern platforms implement these capabilities at scale.

How Smallest.ai Enhances Text-to-Speech Emotion for Real-Time Applications

Smallest.ai advances text-to-speech emotion by combining natural expression, dynamic intonation, and real-time voice adaptation to create immersive, human-like interactions for media, learning, and customer engagement.

Rather than producing static or robotic voices, Smallest.ai integrates pitch modulation, speed adjustment, and emotional tone mapping with low-latency audio generation, delivering lifelike speech that resonates with listeners.

Hyper-Realistic Emotional Voices: Waves TTS creates rich, expressive voices across 30+ languages, allowing businesses and creators to convey emotion naturally and effectively.

Empathetic Real-Time AI Agents: Emotional TTS enables AI agents to respond with appropriate emotion during live conversations, improving engagement and reducing response times.

Instant, Low-Latency Audio: Lightning delivers sub-100ms speech generation, ensuring smooth, real-time emotional voice output for IVR systems, voice assistants, and interactive applications.

Accurate Speech Understanding: Pulse STT captures spoken input in real time, supporting context-aware responses and enhancing the natural flow of AI-driven interactions.

Easy Conversational Emotion: Hydra preserves overlapping speech, timing, and emotional cues, making conversations feel authentic and dynamic.

Flexible, Scalable Deployment: Emotional TTS is supported on both secure cloud and on-premise environments, making it ideal for enterprises, content creators, and global operations.

By combining real-time generation, multilingual support, and expressive voice modeling, Smallest.ai positions text-to-speech emotion as a scalable solution for creating lifelike digital communication that truly connects with audiences.

Conclusion

Emotional TTS is transforming digital communication, making AI voices sound natural, expressive, and human-like. By adding empathy and nuance, it enhances customer interactions, e-learning, entertainment, and accessibility, creating experiences that feel personal and engaging.

Smallest.ai offers a full suite of real-time voice and language products to bring this capability to life: Waves for hyper-realistic text-to-speech, Pulse for live speech-to-text transcription, Hydra for natural speech-to-speech interactions, and Electron for fast conversational reasoning. Together, these tools empower businesses to deliver human-like, responsive, and emotionally intelligent voice experiences.

Discover how these products can elevate your voice interactions and book a demo today to experience the power of emotive AI voices in action.

What is emotional TTS and why is it important?

How is emotion added to AI voices?

Where is emotional TTS commonly used?

It’s applied across customer service, e-learning, audiobooks, video games, accessibility tools, and any platform where natural, human-like speech improves engagement and comprehension.

Is real-time emotional speech generation possible?