What is a Conversational AI Platform? A 2026 Guide for Voice AI Teams

Prithvi Bharadwaj

Learn what a conversational AI platform is, how the technology works, and which top options are worth evaluating for voice and text agent deployments in 2026.

Most voice AI projects fail in production, not in the demo. The demo runs on clean audio, scripted inputs, and a controlled network. Production runs on accented callers, mid-sentence topic changes, and CRM APIs that time out. The platform you pick determines which of those environments your system is built for. This article covers the platforms worth evaluating in 2026 and the criteria that predict production success.

This guide is written for developers, product managers, and technical decision-makers who need to understand what these platforms do under the hood, how to evaluate them rigorously, and which options deserve serious consideration.

What a Conversational AI Platform Actually Does

A conversational AI platform ingests spoken or typed input, determines what the user means, decides what to do about it, and delivers a response within a latency window that feels natural. Everything else is implementation detail.

The core components of any conversational AI system are automatic speech recognition (ASR), natural language understanding (NLU, the intent-extraction part of natural language processing), dialogue management, response generation, and text-to-speech (TTS) synthesis. A platform that handles all of these in a unified pipeline is a fundamentally different product from a collection of loosely coupled APIs. The integration quality between those layers is often what separates something production-ready from a prototype that collapses under real traffic.

The primary business drivers for adoption are enhancing customer engagement, automating repetitive tasks, and providing 24/7 support without scaling headcount. Those are real business outcomes. If a platform cannot connect its technical capabilities to those outcomes reliably, it is not ready for production. Our component-level breakdown of how chatbot voice recognition and conversational AI intersect is worth reading before you start evaluating vendors.

The Technology Stack: What Lives Inside These Platforms

Most evaluations focus on the surface: dashboard quality, language support, pricing. Those questions matter, but the more important one is what is actually happening inside the pipeline. Here is the broader picture of what every serious platform must handle well.

Five layers every production-grade conversational AI platform must get right:

ASR (Automatic Speech Recognition): Converts raw audio into text. The gap between demo-grade and production-grade ASR shows up on domain-specific vocabulary, accented speech, and noisy environments, not on clean studio recordings.

NLU (Natural Language Understanding): Extracts intent and entities from the transcript. This is where the system decides what the user actually wants, not just what they literally said.

Dialogue Management: Tracks conversation state and manages context across turns. Weak dialogue management is the most common reason voice agents feel robotic, and it is frequently underweighted during procurement. Our deep dive on voice bot architecture explains why it is so critical for natural interactions.

Response Generation: Either retrieves a pre-written response or generates one dynamically via a language model. That choice carries significant implications for latency, controllability, and cost.

TTS (Text-to-Speech): Converts the response back into audio. Voice quality and expressiveness here directly affect whether users trust the system or abandon the call. The technical specifics of the text-to-speech layer are a key evaluation point.
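The five layers above can be sketched as a single turn-handling function. This is a minimal illustration of the control flow, not any vendor's API: every name here (`transcribe`, `understand`, `update_state`, `generate_response`, `synthesize`, `DialogueState`) is a hypothetical stand-in, and the stubs treat the "audio" as UTF-8 text so the example runs without a speech engine.

```python
from dataclasses import dataclass, field


@dataclass
class DialogueState:
    """Conversation state carried across turns (hypothetical structure)."""
    history: list = field(default_factory=list)
    slots: dict = field(default_factory=dict)


def transcribe(audio: bytes) -> str:
    # ASR stub: pretends the audio bytes are already UTF-8 text.
    return audio.decode("utf-8")


def understand(text: str) -> dict:
    # NLU stub: keyword matching stands in for an intent/entity model.
    lowered = text.lower()
    if "balance" in lowered:
        return {"intent": "check_balance"}
    if "card" in lowered:
        return {"intent": "replace_card"}
    return {"intent": "unknown"}


def update_state(state: DialogueState, text: str, nlu: dict) -> DialogueState:
    # Dialogue management: record the turn and remember the last known intent.
    state.history.append(text)
    if nlu["intent"] != "unknown":
        state.slots["last_intent"] = nlu["intent"]
    return state


def generate_response(state: DialogueState, nlu: dict) -> str:
    # Response generation: template retrieval; an LLM call could replace this.
    templates = {
        "check_balance": "I can look up your balance.",
        "replace_card": "I can order a replacement card.",
    }
    if nlu["intent"] in templates:
        return templates[nlu["intent"]]
    # Use context from earlier turns instead of starting over.
    if "last_intent" in state.slots:
        return "Sorry, I missed that. Is this still about your earlier request?"
    return "Sorry, could you rephrase that?"


def synthesize(text: str) -> bytes:
    # TTS stub: returns the text as bytes instead of real audio.
    return text.encode("utf-8")


def handle_turn(audio: bytes, state: DialogueState):
    """Run one user turn through all five layers in order."""
    text = transcribe(audio)
    nlu = understand(text)
    state = update_state(state, text, nlu)
    reply = generate_response(state, nlu)
    return synthesize(reply), state
```

Calling `handle_turn` twice with the same `DialogueState` shows why dialogue management matters: the second turn can fall back on what the first turn established rather than treating each utterance in isolation.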

How to Evaluate a Conversational AI Platform: The Criteria That Matter

Enterprise adoption of conversational AI for customer service has accelerated significantly, with production deployments now common across telecommunications, financial services, and healthcare. That scale puts real pressure on evaluation criteria that many buyers underweight during procurement. The table below is a working framework for any platform evaluation or proof of concept.

| Criterion | Why It Matters | What to Test |
|---|---|---|
| End-to-end latency | Determines whether conversations feel natural or stilted | Measure time from speech end to first audio byte of response |
| ASR accuracy on your data | Generic benchmarks rarely reflect your domain vocabulary | Run your own audio samples through the pipeline |
| Voice quality (TTS) | Poor voice quality erodes user trust faster than most other factors | Listen to synthesized speech in noisy playback conditions |
| Scalability and pricing model | Cost per conversation can balloon unexpectedly at scale | Model your expected volume against per-minute and per-request pricing |
| Integration flexibility | Rigid platforms create lock-in that is expensive to escape | Check for REST APIs, webhooks, and SDK availability |
| Dialogue management depth | Shallow systems break on multi-turn conversations | Test with realistic multi-step scenarios, not just single-turn demos |
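The pricing row in the framework above comes down to simple arithmetic, and it is worth running before a proof of concept. The sketch below compares a per-minute plan with a per-request plan. All prices and volumes are made-up placeholders for illustration, not any vendor's actual rates, and the function names are hypothetical.

```python
def monthly_cost_per_minute(conversations: int, avg_minutes: float,
                            price_per_minute: float) -> float:
    """Monthly cost when the vendor bills by audio minute."""
    return conversations * avg_minutes * price_per_minute


def monthly_cost_per_request(conversations: int, avg_turns: int,
                             price_per_request: float) -> float:
    """Monthly cost when the vendor bills by API request (one per turn)."""
    return conversations * avg_turns * price_per_request


# Placeholder assumptions: replace with your own traffic and quoted prices.
conversations = 50_000   # conversations per month
avg_minutes = 3.0        # average conversation length in minutes
avg_turns = 8            # average user turns per conversation

per_minute_total = monthly_cost_per_minute(conversations, avg_minutes, 0.05)
per_request_total = monthly_cost_per_request(conversations, avg_turns, 0.02)

print(f"per-minute plan:  {per_minute_total:,.0f} per month")
print(f"per-request plan: {per_request_total:,.0f} per month")
```

With these placeholder numbers the two plans land at roughly 7,500 and 8,000 per month. The ordering flips if conversations get longer but less chatty, which is why the model should use your own measured averages.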

How to Choose the Right Conversational AI Platform in 2026

The market has matured enough that there is no single best platform. The right choice depends on whether you are building a voice agent, a text-based assistant, or a full omnichannel system, and those are genuinely different engineering problems.

Conversational AI platforms fall into a few distinct categories, and architectural fit matters more than brand:

Full-stack voice platforms (e.g., Smallest.ai) focus on low-latency, real-time interaction.

Cloud-native modular stacks (e.g., Amazon Web Services) provide flexibility but require integration work.

Enterprise conversational platforms (e.g., IBM) prioritize governance and compliance.

API-first speech layers (e.g., Deepgram) specialize in individual components like transcription.

Each approach solves a different problem, and the best choice depends on whether you are optimizing for speed, control, or enterprise governance.

Smallest.ai is built for low-latency voice AI, especially for teams deploying real-time voice agents at scale. The product suite covers the full conversational stack: Atoms is the voice and text agent platform for deploying production agents; Hydra introduces speech-to-speech capabilities designed to reduce pipeline latency; Lightning is the TTS API; Pulse is the speech-to-text engine; and Electron is a conversational small language model, available on enterprise plans, optimized for fast, on-task dialogue. Voice customization and cloning capabilities are available through the Lightning API, giving developers access to custom voice identities without building from scratch.

The technical differentiator is end-to-end latency. Most assembled pipelines accumulate latency at the handoffs between three vendors (ASR to LLM, then LLM to TTS). Smallest.ai's integrated stack is designed to minimize that overhead, which is where most conversational latency originates. Pricing is structured around usage tiers for API access. For teams comparing Smallest.ai against alternatives in a broader infrastructure decision, the post "The latency problem: what's killing your voice AI" provides a direct technical comparison.
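To see how handoff overhead adds up, a latency budget can be written as plain arithmetic. The sketch below assumes a strictly sequential, non-streaming pipeline, and every number in it is an illustrative placeholder rather than a measurement of any real system. The function name `end_to_end_latency_ms` is hypothetical.

```python
def end_to_end_latency_ms(stage_ms: dict, handoff_ms: list) -> int:
    """Sum per-stage processing time and per-handoff network overhead."""
    return sum(stage_ms.values()) + sum(handoff_ms)


# Placeholder processing times per stage, in milliseconds.
stages = {"asr": 150, "llm": 300, "tts": 120}

# Assembled pipeline: two cross-vendor network hops (ASR to LLM, LLM to TTS).
assembled = end_to_end_latency_ms(stages, [60, 60])

# Integrated pipeline: the same stages, with much cheaper internal handoffs.
integrated = end_to_end_latency_ms(stages, [5, 5])

print(f"assembled:  {assembled} ms")
print(f"integrated: {integrated} ms")
print(f"handoff overhead saved: {assembled - integrated} ms")
```

The stage times are held constant on purpose, so the difference between the two totals is attributable to the handoffs alone. Substituting your own measured values for each stage and hop turns this into a working budget.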

Choosing by Category, Not by Name

Before shortlisting specific platforms, match your use case to the right category. Teams building on existing cloud infrastructure will find native services from major cloud providers easier to connect but harder to optimize for latency. Teams in regulated industries with strict governance requirements will find that enterprise-focused platforms have more compliance tooling out of the box, at the cost of deployment flexibility. Teams that need only the ASR layer, for transcription, analytics, or quality assurance, have a separate set of options that cover that layer well but stop there. In each case, the evaluation criteria in the table above apply regardless of which specific platform you evaluate.

Where Conversational AI Platforms Are Being Deployed

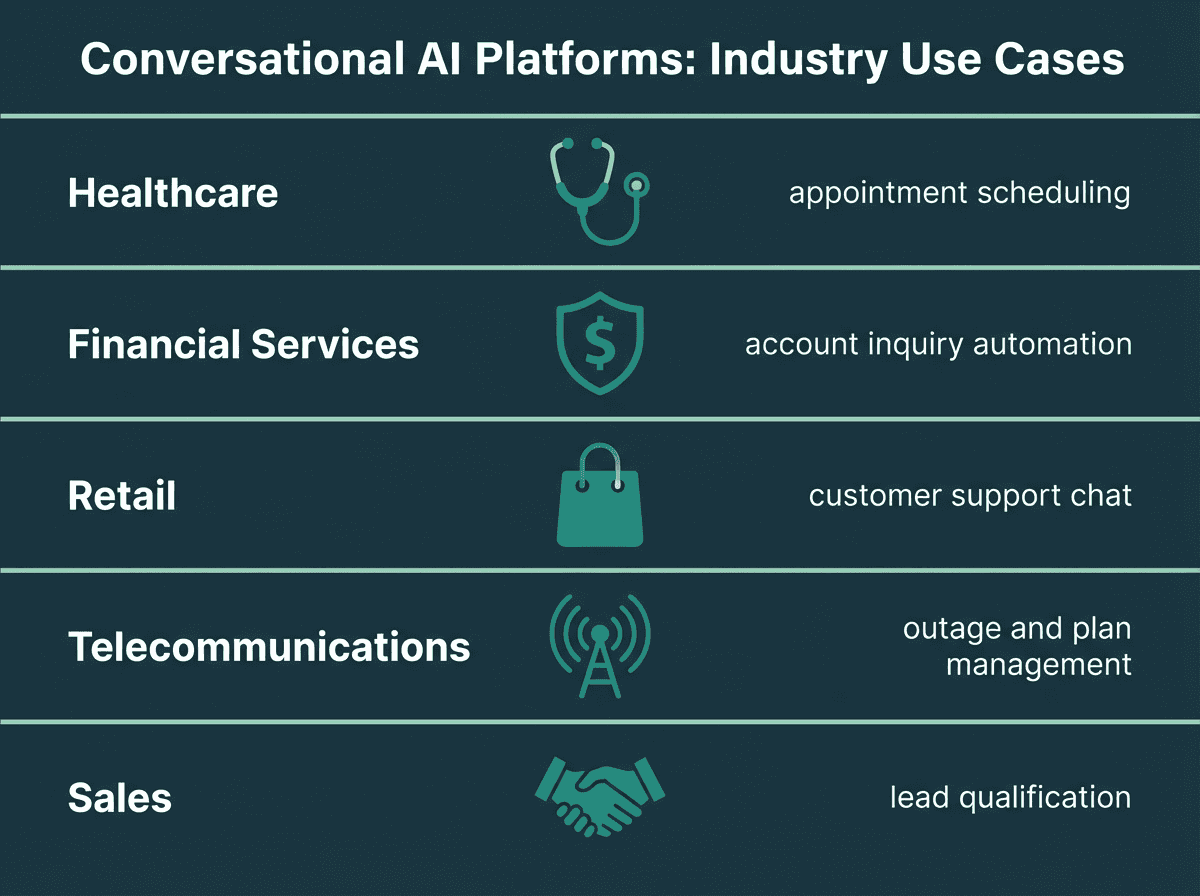

Industry verticals where conversational AI platforms are delivering measurable results

In financial services, conversational AI handles inbound account inquiry calls like balance checks, transaction disputes, and card replacement requests that previously required a human agent. The bounded intent set makes dialogue management tractable, and the interaction volume justifies the engineering investment.

In healthcare, platforms automate appointment scheduling, reminders, and cancellations, which can reduce patient wait times and administrative workload for front-desk staff. This allows staff to focus on more critical patient care tasks. Our guide on deploying voice agents in enterprise and regulated environments details how these systems are being deployed today.

Telecommunications companies use conversational AI to manage high volumes of support requests for tasks like checking for service outages, troubleshooting basic connectivity issues, and processing plan changes.

Sales qualification is another area seeing rapid adoption. Voice agents can handle initial lead qualification calls, maintain consistent messaging, and hand off qualified leads to human representatives, which can help shorten sales cycles. The guide on how to use voice agents for sales to qualify leads on autopilot covers the implementation specifics for that use case.

What Most Evaluations Get Wrong

Teams spend most of their evaluation time on the demo experience and almost none on failure modes. A platform that sounds impressive in a controlled demo can fall apart when it encounters an accent it was not trained on, a noisy call center environment, or a user who changes their mind mid-sentence. That is not an edge case. That is Tuesday.

The second mistake is evaluating components in isolation. ASR accuracy tested on clean audio tells you almost nothing about how the system performs when that transcript feeds into an NLU model trained on different vocabulary distributions. The only meaningful test is end-to-end, on your data, under conditions that approximate your production environment.
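An end-to-end test of this kind can be sketched as a small harness that runs labeled samples through the whole pipeline and reports task success per recording condition. Everything here is an assumption for illustration: `run_pipeline` stands for whatever callable wraps your full system, the sample fields are hypothetical, and `fake_pipeline` is a toy stand-in that simply fails on files whose names start with "noisy".

```python
from collections import defaultdict


def evaluate(samples, run_pipeline):
    """Return {condition: success_rate} for the full pipeline.

    Each sample is a dict with "audio", "condition", and "expected_intent".
    Success means the pipeline's final intent matches the expected one.
    """
    totals = defaultdict(int)
    passed = defaultdict(int)
    for sample in samples:
        condition = sample["condition"]
        totals[condition] += 1
        if run_pipeline(sample["audio"]) == sample["expected_intent"]:
            passed[condition] += 1
    return {condition: passed[condition] / totals[condition]
            for condition in totals}


def fake_pipeline(audio_path: str) -> str:
    # Toy stand-in for a real system: "misrecognizes" anything labeled noisy.
    return "unknown" if audio_path.startswith("noisy") else "check_balance"


samples = [
    {"audio": "clean_001.wav", "condition": "clean",
     "expected_intent": "check_balance"},
    {"audio": "noisy_001.wav", "condition": "noisy",
     "expected_intent": "check_balance"},
    {"audio": "noisy_002.wav", "condition": "noisy",
     "expected_intent": "check_balance"},
]

report = evaluate(samples, fake_pipeline)
for condition, rate in report.items():
    print(f"{condition}: {rate:.0%} task success")
```

Grouping by condition is the design choice that matters: a single aggregate score can hide the fact that the system passes on clean audio and fails on the accented or noisy calls that dominate production.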

Third: latency gets ignored until it is too late. A 2-second response time may be tolerable for a text chatbot. For a voice agent, it is the difference between a conversation that feels natural and one that feels broken. Measure first-byte latency from the moment the user stops speaking, not from the moment the request hits your server. Those two numbers can differ by several hundred milliseconds, and that gap matters. For a deeper look, see this post on why streaming architecture is non-negotiable.
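The measurement described here can be sketched as a timer that starts when the user stops speaking and stops at the first non-empty audio chunk of the streamed response. This is a minimal sketch under stated assumptions: `fake_agent_stream` is a hypothetical stand-in for a streaming response, and in a real client the `speech_end` timestamp would come from whatever end-of-speech detection you use, not from the line of code shown.

```python
import time


def time_to_first_audio(speech_end: float, audio_chunks) -> float:
    """Seconds from end of user speech to the first non-empty audio chunk.

    speech_end must be a time.perf_counter() reading taken on the client
    when the user stopped speaking, not when the server got the request.
    """
    for chunk in audio_chunks:
        if chunk:
            return time.perf_counter() - speech_end
    raise RuntimeError("stream ended without producing audio")


def fake_agent_stream():
    # Hypothetical stand-in for a streaming response from a voice agent.
    time.sleep(0.05)          # simulated processing and network delay
    yield b"\x00\x01"         # first audio bytes
    yield b"\x02\x03"


# Assumption: this line marks the moment end-of-speech was detected.
speech_end = time.perf_counter()
latency = time_to_first_audio(speech_end, fake_agent_stream())
print(f"time to first audio: {latency * 1000:.0f} ms")
```

Because the clock starts on the client side, the result includes upload time and any end-of-speech detection delay, which are exactly the components a server-side timer misses.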

Key Takeaways and Next Steps

A conversational AI platform is an integrated pipeline of ASR, NLU, dialogue management, response generation, and TTS. The quality of that integration determines whether the system works in production or only in demos. Most platforms still make trade-offs that matter: some prioritize voice quality over latency; others prioritize enterprise governance over developer flexibility. Neither is wrong, but neither is neutral either.

The problem most teams face is not a shortage of options. It is the difficulty of evaluating those options against real production requirements rather than feature checklists. Latency, accuracy on domain-specific data, and cost at scale are the three variables that most often determine whether a deployment succeeds or stalls.

Smallest.ai's Atoms platform is designed specifically for teams building voice agents that need to close that gap. With an integrated stack covering ASR (Pulse), TTS (Lightning), speech-to-speech (Hydra), and a conversational small language model for language understanding (Electron, available on enterprise deployments), Atoms reduces the integration overhead that causes most latency and reliability problems in assembled pipelines. For customer service, sales, or any other high-volume voice use case, it is designed to be a direct path from prototype to production.

What is the difference between a conversational AI platform and a regular chatbot?

What should I prioritize when evaluating a conversational AI platform for voice use cases?

Can I build a conversational AI system by assembling separate APIs instead of using a platform?

Yes, and many teams do. You can combine a standalone ASR service, an LLM for response generation, and a TTS API into a functional pipeline. The trade-off is integration complexity and latency overhead at each handoff point. A purpose-built platform like Smallest.ai's integrated stack (Pulse for ASR, Electron for language understanding, Lightning for TTS) reduces those handoffs and the engineering effort required to manage them. For teams without dedicated voice AI infrastructure engineers, a unified platform is usually the faster and more reliable path. For a practical walkthrough of what that assembly process looks like and where it breaks down, the guide "Designing voice assistants: STT, LLM, TTS, and latency budget" covers the architecture decisions in detail.

What is a realistic timeline to deploy a production voice agent?