Compare the best Fish Audio alternatives in 2026, including Smallest.ai, ElevenLabs, Deepgram, Murf.ai, OpenAI TTS, and Cartesia.

Fish Audio has built a following among voice cloning enthusiasts, particularly for its community-driven voice marketplace and relatively accessible pricing. But as production requirements grow, developers and product teams often find themselves hitting walls: limited API flexibility, inconsistent latency at scale, or a voice library that doesn't fit enterprise use cases. If you're searching for Fish Audio alternatives that offer more control, better performance, or a cleaner developer experience, this guide covers the most credible options available in 2026.

This comparison focuses on six platforms: Smallest.ai, ElevenLabs, Deepgram, Murf.ai, OpenAI TTS, and Cartesia. Each is evaluated on voice quality, latency, pricing, API maturity, voice cloning, and language coverage. The goal is to give you a clear picture of which tool fits which use case, not just a feature checklist. Where the evidence points clearly to one platform as the safest and most scalable production choice, this guide says so directly.

Evaluation Criteria

Before comparing platforms, it helps to agree on what actually matters. The six criteria used throughout this article are: voice naturalness and expressiveness, end-to-end latency (especially for real-time or conversational use cases), pricing transparency and cost at scale, API quality and developer tooling, voice cloning capability, and language or accent coverage. Fish Audio scores reasonably on voice cloning and community voices, but trails on latency and enterprise-grade API support, which is exactly where most of these alternatives differentiate themselves.

Smallest.ai: Built for Real-Time Voice Applications

Smallest.ai homepage - focused on ultra-low-latency speech synthesis for developers.

Smallest.ai is purpose-built for applications where latency is not a nice-to-have but a hard requirement. Its Lightning V3.1 model maintains 100ms latency at 20+ concurrent streams, making it one of the fastest speech synthesis APIs available for real-time voice agents, IVR systems, and conversational AI pipelines. That's a meaningful gap compared to Fish Audio, where real-time streaming is not a primary design goal.

What sets Smallest.ai apart is not just speed in isolation. The platform combines 100ms latency at 20+ concurrent streams, instant voice cloning from short audio samples, multilingual synthesis across 15 languages, and usage-based pricing that scales predictably without the subscription cost cliffs or per-character rate jumps seen across most alternatives. For teams building voice agents or IVR systems, this is one of the few platforms that doesn't force a tradeoff between naturalness, speed, cloning capability, and cost control. That combination is what makes it the clearest production choice in this comparison, not any single feature on its own.
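Latency claims at a given concurrency level are worth reproducing against your own workload before committing to any vendor. The sketch below is one way to do that, not any provider's official benchmark: it opens a number of streams at once and records how long each takes to deliver its first audio chunk. `fake_synthesize` is a hypothetical stand-in that only simulates delay; replace it with a real streaming client call.

```python
import asyncio
import statistics
import time
from typing import AsyncIterator, Callable


# Hypothetical stand-in for a streaming TTS client. Swap in a real
# provider call that yields audio chunks as they arrive.
async def fake_synthesize(text: str) -> AsyncIterator[bytes]:
    await asyncio.sleep(0.05)   # simulated wait before the first audio chunk
    yield b"\x00" * 320
    await asyncio.sleep(0.01)   # simulated remainder of the stream
    yield b"\x00" * 320


async def time_to_first_chunk(
    synthesize: Callable[[str], AsyncIterator[bytes]], text: str
) -> float:
    """Return seconds between starting the request and receiving the first chunk."""
    start = time.perf_counter()
    stream = synthesize(text)
    await stream.__anext__()            # block only until the first chunk
    elapsed = time.perf_counter() - start
    async for _ in stream:              # drain the rest so the stream finishes
        pass
    return elapsed


async def measure(synthesize, concurrency: int, text: str = "Hello there.") -> dict:
    """Start `concurrency` streams at once and summarize first-chunk latency."""
    latencies = await asyncio.gather(
        *(time_to_first_chunk(synthesize, text) for _ in range(concurrency))
    )
    return {
        "concurrency": concurrency,
        "median_ms": statistics.median(latencies) * 1000,
        "max_ms": max(latencies) * 1000,
    }


if __name__ == "__main__":
    print(asyncio.run(measure(fake_synthesize, concurrency=20)))
```

Running the same harness at 1, 5, and 20 concurrent streams shows whether first-chunk latency stays flat or degrades under load, which is the property that matters for voice agents and IVR.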

Smallest.ai strengths and limitations:

Strengths:

- 100ms latency at 20+ concurrent streams on the Lightning V3.1 model, with a ~76% win rate against OpenAI's gpt-4o-mini-tts in blind listening evaluations and a MOS score of 3.9, the highest for conversational TTS at launch (Smallest.ai founder announcement, LinkedIn, 2025)
- Instant voice cloning with minimal sample audio required
- Clean REST and WebSocket API with strong documentation
- Usage-based pricing that scales predictably without subscription cost cliffs
- Multilingual synthesis across 15 languages

Limitations:

- Voice library is smaller than Fish Audio's community marketplace
- No built-in audio editing or post-production workspace

Verdict: Best for real-time voice agents, conversational AI, and any application where latency directly impacts user experience. For teams that also need cloning and want pricing that doesn't spike unpredictably at volume, Smallest.ai is the only platform in this comparison that delivers all three without compromise. If you're comparing the fastest text-to-speech APIs in 2026, Smallest.ai consistently ranks at the top of that list.

ElevenLabs: Premium Voice Quality with a High Price Tag

ElevenLabs - known for high-fidelity voice cloning and a large voice library.

ElevenLabs is the platform most people think of when voice quality is the primary concern. Its Multilingual v2 model produces some of the most natural-sounding speech available, with nuanced emotional range that holds up well for long-form narration, audiobooks, and character voice work. The voice cloning is also strong: professional cloning requires only a few minutes of audio and produces results that are hard to distinguish from the original speaker.

The tradeoff is cost structure. ElevenLabs operates on a subscription model with tiered plans based on character volume. Verify current plan names, character limits, and pricing at elevenlabs.io/pricing before committing, as ElevenLabs adjusts its tiers regularly. This subscription framing matters because comparing it directly to per-character rates on other platforms can be misleading. At low volumes the monthly fee may feel reasonable; at high volumes the cost per character often exceeds usage-based alternatives. Latency is also a concern for real-time applications: while the platform has improved its streaming capabilities, it doesn't match the 100ms performance of purpose-built real-time APIs. For content creators and studios where quality trumps speed, ElevenLabs is a strong choice. For voice agents or high-volume API consumers, the economics get difficult fast. Teams looking for ElevenLabs alternatives for commercial use often cite both cost and latency as the primary reasons for switching.
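To make the subscription versus usage-based comparison concrete, the sketch below computes the monthly cost and effective per-1K-character rate of a tiered plan at several volumes. Every figure in it (the $20 fee, the 100,000-character quota, the overage rate, and the usage-based rate) is a made-up placeholder, not ElevenLabs' or any other vendor's real pricing; substitute current numbers from each provider's pricing page.

```python
def subscription_cost(chars: int, monthly_fee: float,
                      included_chars: int, overage_per_1k: float) -> float:
    """Monthly cost of a tiered plan: flat fee plus overage beyond the quota."""
    overage_chars = max(0, chars - included_chars)
    return monthly_fee + overage_chars / 1000 * overage_per_1k


def usage_cost(chars: int, rate_per_1k: float) -> float:
    """Monthly cost of a pure pay-as-you-go plan."""
    return chars / 1000 * rate_per_1k


# Hypothetical figures for illustration only, not any vendor's real pricing.
PLAN = {"monthly_fee": 20.0, "included_chars": 100_000, "overage_per_1k": 0.30}
USAGE_RATE_PER_1K = 0.25

if __name__ == "__main__":
    for chars in (10_000, 100_000, 1_000_000):
        sub = subscription_cost(chars, **PLAN)
        pay_go = usage_cost(chars, USAGE_RATE_PER_1K)
        print(f"{chars:>9,} chars  subscription ${sub:,.2f} "
              f"(${sub / chars * 1000:.3f}/1K)  usage-based ${pay_go:,.2f}")
```

With these placeholder numbers the subscription is cheaper only when usage sits near the included quota. Well below the quota the flat fee dominates, and well above it the overage rate does, which is why subscription and per-character prices should be compared at your expected volume rather than at list price.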

Deepgram: Speech-to-Text First, TTS Second

Deepgram - primarily an ASR platform with TTS capabilities added via its Aura model.

Deepgram's core strength is automatic speech recognition, not synthesis. Its Nova-3 ASR model is among the most accurate available for English, with strong performance on noisy audio and domain-specific vocabulary. The Aura TTS model was added more recently and comes in two tiers: Aura-1 at $0.015 per 1,000 characters and Aura-2 at $0.030 per 1,000 characters (per Deepgram's public pricing page, Q1 2026). Aura-2 offers improved voice quality and is the more relevant tier for production synthesis use cases.

That said, Aura's voice library is limited compared to Fish Audio or ElevenLabs, and voice cloning is not a native feature. If your use case is primarily synthesis with occasional transcription needs, Deepgram is not the most natural fit. But for teams building full-duplex voice applications that need high-quality ASR alongside TTS, Deepgram's unified API reduces integration complexity. ASR pricing starts at $0.0059 per minute for Nova-3 (per Deepgram's public pricing page, Q1 2026).
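For a full-duplex application, the ASR and TTS charges add up on different units (minutes of audio in, characters of text out). The sketch below combines the rates quoted above into one monthly estimate. The rates come from this article and should be re-checked against Deepgram's pricing page; the workload figures in the example are hypothetical.

```python
# Rates as quoted in this article (Deepgram public pricing, Q1 2026).
AURA_RATE_PER_1K_CHARS = {"aura-1": 0.015, "aura-2": 0.030}
NOVA3_RATE_PER_MINUTE = 0.0059


def monthly_voice_cost(asr_minutes: float, tts_chars: int,
                       tts_tier: str = "aura-2") -> dict:
    """Estimate monthly spend for a combined transcription + synthesis workload."""
    asr_usd = asr_minutes * NOVA3_RATE_PER_MINUTE
    tts_usd = tts_chars / 1000 * AURA_RATE_PER_1K_CHARS[tts_tier]
    return {"asr_usd": asr_usd, "tts_usd": tts_usd, "total_usd": asr_usd + tts_usd}


if __name__ == "__main__":
    # Hypothetical workload: 10,000 minutes transcribed, 5M characters synthesized.
    estimate = monthly_voice_cost(asr_minutes=10_000, tts_chars=5_000_000)
    for label, usd in estimate.items():
        print(f"{label}: ${usd:,.2f}")
```

In this hypothetical workload synthesis costs more than transcription, so the choice between Aura-1 and Aura-2 has a larger effect on the bill than the ASR rate does.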

Murf.ai: Web-Based Studio with Broad Language Coverage

Murf.ai - a web-based voice studio with multilingual support accessible to non-developers.

Murf.ai occupies a similar space to Fish Audio in terms of accessibility: it's designed for users who want a polished, web-based interface without needing to write API calls or manage infrastructure. The platform offers a voice studio with a library of over 200 AI voices across 35 languages, along with pitch, speed, and emphasis controls that non-technical users can operate without friction.

Where Murf.ai differentiates from Fish Audio is in its production-ready studio tooling. The platform includes a built-in script editor, slide sync for presentations, and background music layering, which makes it well-suited for content teams producing e-learning material, marketing videos, and corporate narration. Voice cloning is available on higher-tier plans. Pricing varies by plan and billing term, so check Murf.ai's pricing page for current rates and licensing details. One genuine limitation is that Murf.ai is not optimized for real-time or API-driven synthesis at scale: it is a studio tool first, and teams that need programmatic access at volume will find its API less mature than Deepgram's or Smallest.ai's.

OpenAI TTS: Simple, Reliable, but Not Specialized

OpenAI's TTS API - straightforward synthesis with preset voices, no cloning.

OpenAI offers two distinct TTS model families. The legacy models are listed at $15 per 1M characters for TTS (tts-1) and $30 per 1M characters for TTS HD (tts-1-hd). The gpt-4o-mini-tts model is a newer, separate offering with different pricing and capabilities; verify current rates at platform.openai.com/docs/pricing before use. All three models provide a small set of preset voices and a clean API, making them easy entry points for teams already using the OpenAI ecosystem.
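Vendors quote prices on different units (per 1M characters here, per 1,000 characters for Deepgram), which makes side-by-side reading error-prone. The small sketch below normalizes the figures quoted in this article to dollars per 1,000 characters. The prices are the ones stated in this guide and may have changed; the helper name is only illustrative.

```python
def usd_per_1k_chars(price_usd: float, per_chars: int) -> float:
    """Convert a price quoted per `per_chars` characters to USD per 1,000 characters."""
    return price_usd / per_chars * 1000


# (price in USD, number of characters the price covers), as quoted in this article.
QUOTED = {
    "OpenAI TTS": (15.0, 1_000_000),
    "OpenAI TTS HD": (30.0, 1_000_000),
    "Deepgram Aura-1": (0.015, 1_000),
    "Deepgram Aura-2": (0.030, 1_000),
}

if __name__ == "__main__":
    for name, (price, per_chars) in QUOTED.items():
        print(f"{name:<16} ${usd_per_1k_chars(price, per_chars):.3f} per 1K chars")
```

On the quoted figures, OpenAI's legacy TTS and Deepgram's Aura-1 both come to $0.015 per 1,000 characters, and TTS HD and Aura-2 both come to $0.030, so the choice between them rests on features and latency rather than list price.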

The ceiling is also obvious across all three options: no voice cloning, no custom voice creation, limited expressiveness compared to ElevenLabs or Smallest.ai, and latency that isn't optimized for real-time streaming. OpenAI TTS is a pragmatic choice for teams that want "good enough" synthesis without managing a separate vendor relationship. It's not the right tool for applications where voice identity or real-time performance matter.

Cartesia: Low-Latency Synthesis for Developers

Cartesia - a developer-focused TTS platform built around its Sonic streaming model.

Cartesia is worth including because it competes directly on latency, which puts it in the same conversation as Smallest.ai for real-time applications. Its Sonic model is designed for streaming synthesis with low time-to-first-audio, and the API is well-documented with WebSocket support. Voice quality is good, though the voice library is smaller than Fish Audio's or Murf.ai's.

Cartesia uses credit-based pricing tied to usage rather than fixed per-character rates, so actual costs depend on the specific voices and features used within a given plan (per Cartesia's public pricing page, 2026). Voice cloning is available but requires more audio input than Smallest.ai's instant cloning workflow. For teams evaluating the fastest text-to-speech APIs, Cartesia is a legitimate contender, though Smallest.ai's latency benchmarks, instant cloning, and predictable usage-based pricing give it an edge in most production scenarios.

See how Smallest.ai's Lightning V3.1 model performs on your use case. Try the API free.

Head-to-Head Comparison Table

| Platform | Voice Quality | Latency | Voice Cloning | Languages | Pricing (entry) | Best For |
|---|---|---|---|---|---|---|
| Smallest.ai | High (natural, expressive) | 100ms at 20+ concurrent streams | Instant, short sample | 15 | Usage-based, free tier available | Real-time agents, IVR, low-latency apps |
| ElevenLabs | Very High (best-in-class) | Moderate (streaming available) | Professional quality | 30+ | Subscription (see elevenlabs.io/pricing) | Content creation, audiobooks, character voices |
| Deepgram | Good (Aura-1 and Aura-2 tiers) | Low (optimized for ASR+TTS) | Not native | English-primary | $0.015/1K chars (Aura-1), $0.030/1K chars (Aura-2) | Full-duplex voice apps, transcription+TTS |
| Murf.ai | High (120+ voices) | Moderate (studio-first) | Yes (higher-tier plans) | 20+ | Pricing varies by plan (check Murf.ai pricing page) | E-learning, marketing video, corporate narration |
| OpenAI TTS | Good (preset voices) | Moderate | None | Multiple | $0.015/1K chars (tts-1); gpt-4o-mini-tts priced separately | Teams in OpenAI ecosystem, simple synthesis |
| Cartesia | Good (Sonic model) | Low (streaming-optimized) | Yes (more audio needed) | Multiple | Credit-based, usage-dependent | Developer-focused real-time synthesis |
| Fish Audio | Good (community voices) | Moderate | Yes (community-based) | Multiple | Free tier + paid plans | Voice hobbyists, community voice access |

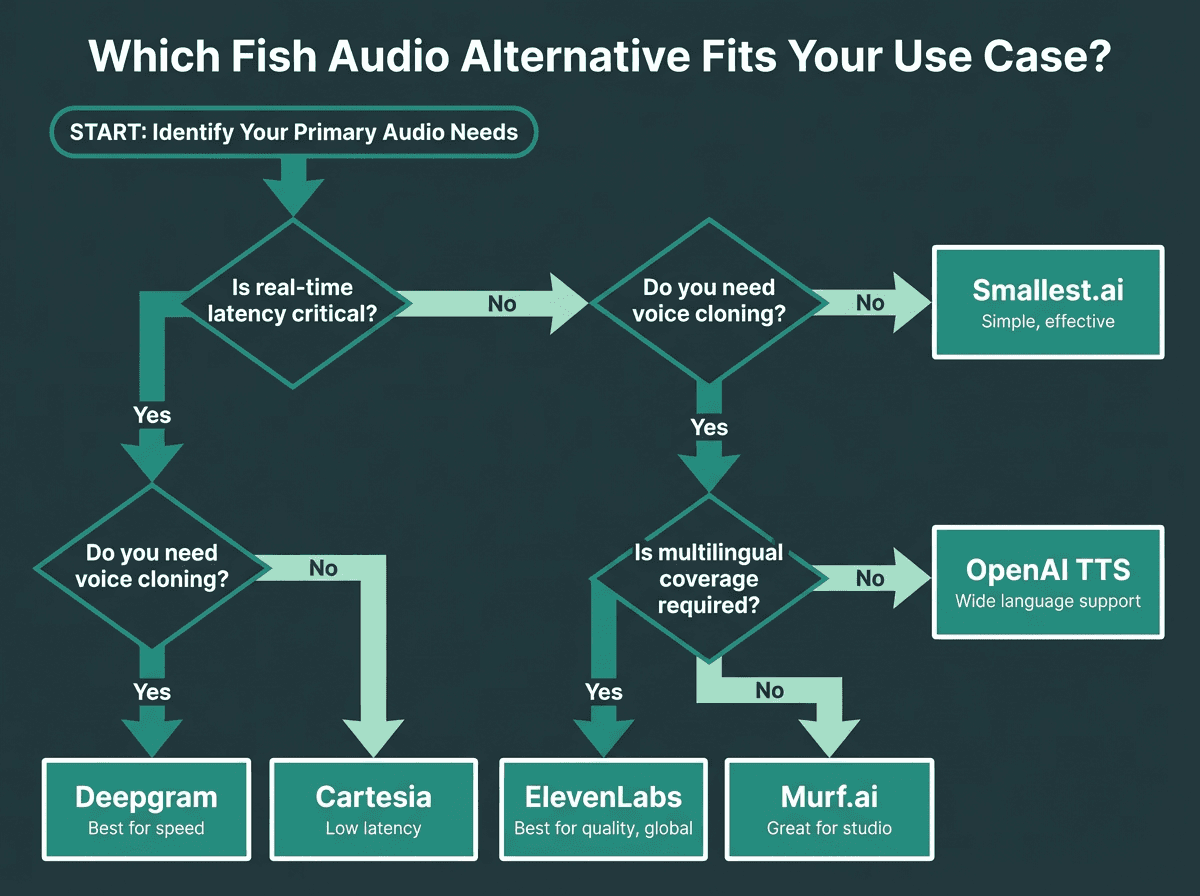

Which Fish Audio Alternative Should You Choose?

The honest answer depends on what Fish Audio is failing to give you. If the issue is latency for a voice agent or phone application, Smallest.ai is the clearest upgrade path. If you need the highest possible voice quality for long-form content and cost is secondary, ElevenLabs is the standard. If you're already deep in the OpenAI ecosystem and don't need cloning, OpenAI TTS removes friction. For multilingual content production in a web-based studio environment, Murf.ai's accessible interface and 20+ language coverage suit non-technical teams well. And if you need both transcription and synthesis in one API, Deepgram's unified offering makes integration simpler.

Cartesia is worth a serious look for developer teams that want streaming-optimized TTS without committing to a larger platform. But for most production use cases in 2026, the choice becomes clear when you look at the full picture rather than any single dimension. Smallest.ai is the only platform in this comparison that combines 100ms latency at 20+ concurrent streams, instant voice cloning, 15-language support, and predictable usage-based pricing without forcing a tradeoff between those capabilities. ElevenLabs wins on raw voice quality but carries subscription cost risk at scale. Deepgram wins on ASR integration but lacks cloning. Cartesia competes on latency but not on cloning or pricing transparency. Murf.ai wins on studio usability but is not built for programmatic synthesis at volume. For teams building purely content-driven pipelines or long-form audio production, ElevenLabs remains appropriate. For anything real-time, production-grade, or cost-sensitive at volume, Smallest.ai is the safest and most scalable choice.

Use this decision path to match your requirements to the right platform.
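The decision path can also be written down as a short function. The sketch below encodes the recommendations from this section in the order they are given; the field names, the ordering of checks when several requirements apply at once, and the fallback are assumptions made for illustration, not a formal scoring method.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # Hypothetical flags summarizing what a team needs from a TTS platform.
    real_time_agent: bool = False         # voice agent or phone application
    top_quality_long_form: bool = False   # quality first, cost secondary
    in_openai_ecosystem: bool = False
    needs_cloning: bool = False
    web_studio_multilingual: bool = False # non-technical content team
    needs_asr_and_tts: bool = False       # transcription and synthesis in one API


def recommend(req: Requirements) -> str:
    """Return the platform this guide suggests, checking needs in the article's order."""
    if req.real_time_agent:
        return "Smallest.ai"
    if req.top_quality_long_form:
        return "ElevenLabs"
    if req.in_openai_ecosystem and not req.needs_cloning:
        return "OpenAI TTS"
    if req.web_studio_multilingual:
        return "Murf.ai"
    if req.needs_asr_and_tts:
        return "Deepgram"
    # Assumed fallback, mirroring this guide's overall production recommendation.
    return "Smallest.ai"


if __name__ == "__main__":
    print(recommend(Requirements(needs_asr_and_tts=True)))
    print(recommend(Requirements(in_openai_ecosystem=True, needs_cloning=True)))
```

Teams with several overlapping requirements may want to reorder the checks to put their hardest constraint first, since the first match wins.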

Understanding Where Fish Audio Fits

Fish Audio works well for a specific audience: developers and creators who want access to a community-built voice library without a steep learning curve. The platform is optimized for community voice discovery and lightweight prototyping rather than production-grade API deployment, and its marketplace model is genuinely useful for finding niche voices and accents. Production teams, however, run into the limits noted earlier: limited API flexibility, inconsistent latency at scale, and a voice library that doesn't fit enterprise use cases.

These are not minor friction points. For a voice agent that handles thousands of calls per day, a 300ms latency spike is a user experience problem. For a content team producing branded audio at scale, inconsistent voice quality creates rework. The platforms reviewed in this article each solve a specific version of this problem, but most solve one dimension at the cost of another. ElevenLabs trades cost predictability for quality. Deepgram trades cloning for ASR integration. Cartesia trades pricing transparency for streaming speed. Murf.ai trades API depth for studio usability. Smallest.ai's Lightning V3.1 model is the exception: fast, natural, developer-friendly voice synthesis across 15 languages, with instant cloning and usage-based pricing that holds up at scale without forcing a tradeoff between those dimensions.

Try Smallest.ai's Lightning V3.1 model free. See the latency difference in your own application.

What is the best Fish Audio alternative for real-time voice agents?

Does Fish Audio support enterprise-grade API access?

Which Fish Audio alternative has the best voice cloning?

ElevenLabs is widely regarded as offering the highest-quality voice cloning, particularly for professional use cases where the cloned voice needs to be indistinguishable from the original. Smallest.ai offers instant cloning from short samples, which is faster to set up and sufficient for most production voice agent use cases. It also pairs that cloning capability with 100ms latency at 20+ concurrent streams and predictable pricing, which ElevenLabs does not. Murf.ai also offers cloning on higher-tier plans, making it a reasonable option for studio-based content workflows.

How do I evaluate which text-to-speech API is right for my use case?