AI-Powered Voice Assistants for Healthcare: Appointment Intake, Reminders, and Triage

Prithvi Bharadwaj

AI voice assistants help healthcare teams automate appointment intake, reminders, and triage while improving patient access and reducing admin workload.

Every day, healthcare staff field thousands of routine calls: appointment confirmations, pre-visit intake questions, medication reminders, and basic symptom triage. These interactions are necessary and repetitive, and they consume staff time that could go toward direct patient care. The voice assistant has quietly become one of the most practical solutions to this problem, not as a futuristic concept but as deployable infrastructure that health systems are actively adopting right now.

This guide is written for healthcare IT leaders, clinical operations managers, and developers building or evaluating voice AI systems. By the end, you will understand how voice assistants function in clinical workflows, where they deliver the most measurable value, what compliance requirements shape their design, and how to assess readiness for deployment. For broader context on the technology itself, the comprehensive guide to AI voice assistants is a useful starting point before going deeper into healthcare-specific applications.

The Scale of the Problem Voice AI Is Solving

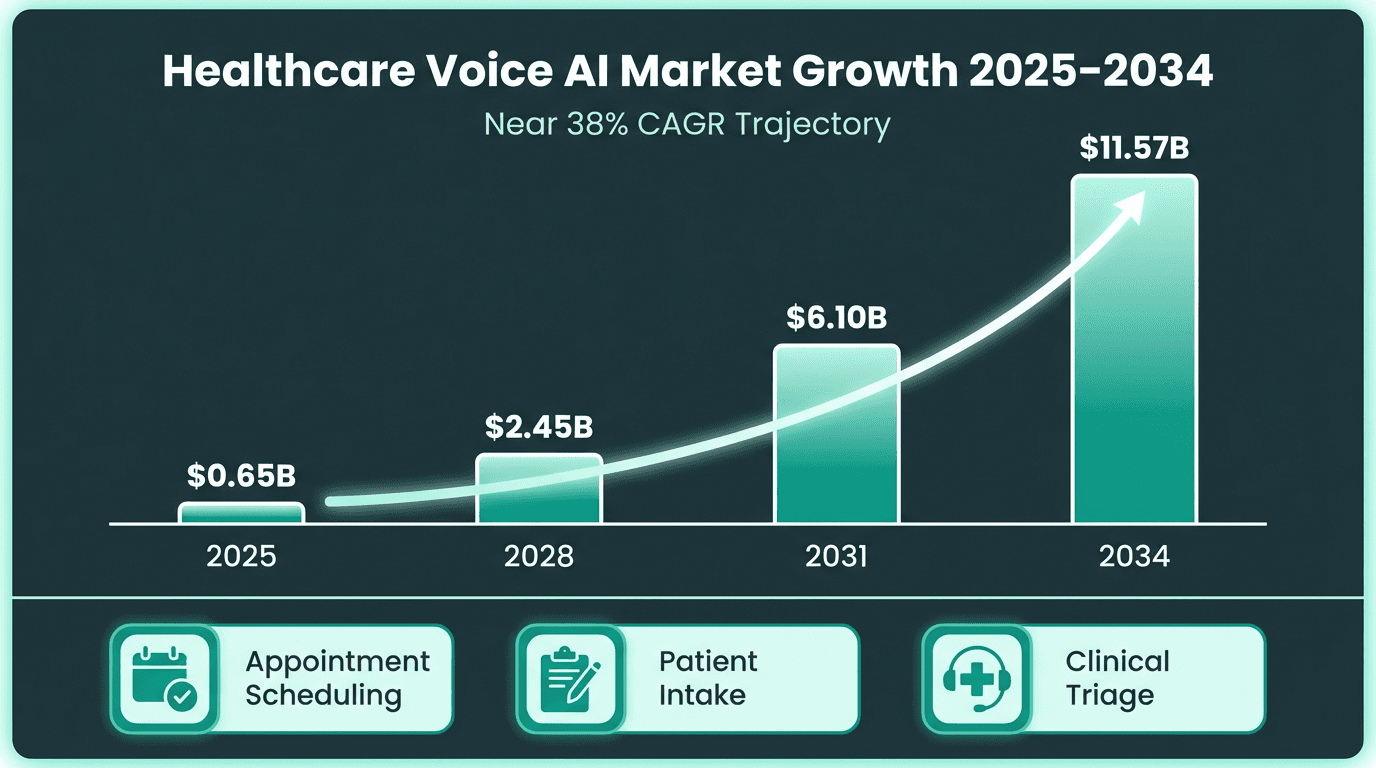

The numbers are striking. The market for digital assistants in healthcare was valued at USD 2.31 billion in 2025 and is projected to reach USD 12.7 billion by 2031, growing at a CAGR of 32.84% (Mordor Intelligence, 2026). A separate analysis of AI voice agents specifically in healthcare projects growth from approximately USD 0.65 billion in 2025 to USD 11.57 billion by 2034, at a CAGR of nearly 38%. These figures reflect genuine operational demand, not speculative hype.
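As a quick sanity check on the growth rates quoted above, the compound annual growth rate can be recomputed from the start and end values. This is a minimal sketch: the function name `cagr` is ours, and the only inputs are the market figures already cited in this section.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.33 means 33% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market figures quoted above, in USD billions.
digital_assistants = cagr(2.31, 12.7, years=2031 - 2025)
voice_agents = cagr(0.65, 11.57, years=2034 - 2025)

print(f"Digital assistants in healthcare: {digital_assistants:.1%}")
print(f"AI voice agents in healthcare:    {voice_agents:.1%}")
```

Both results land close to the published rates (roughly 33% and 38%), so the projections are at least internally consistent.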

The underlying driver is straightforward. Healthcare is one of the largest employment sectors in the US economy (Bureau of Labor Statistics, 2025), yet administrative burden continues to grow faster than staffing capacity. Phone-based scheduling, intake, and reminder workflows are labor-intensive and error-prone when handled manually. Missed appointments alone cost the US healthcare system an estimated $150 billion annually. Voice assistants address this at scale without requiring patients to download apps or navigate portals.

Market projections reflect accelerating adoption across scheduling, intake, and triage use cases.

How Voice Assistants Actually Work in Clinical Settings

A clinical voice assistant is not a simple IVR system with better audio. It combines automatic speech recognition (ASR), natural language understanding (NLU), a dialogue management layer, and text-to-speech (TTS) synthesis into a coherent conversational experience. The system must handle accents, background noise, interrupted speech, and domain-specific medical terminology, all in real time.
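The four stages described above can be sketched as a simple pipeline. This is an illustration only: `VoicePipeline`, `Turn`, and the `fake_*` functions are hypothetical stand-ins, and a real deployment would replace each stub with an actual ASR, NLU, dialogue, and TTS component that streams audio rather than passing whole byte strings.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Turn:
    """Everything produced while handling one caller utterance."""
    audio_in: bytes
    transcript: str = ""
    intent: str = ""
    reply_text: str = ""
    audio_out: bytes = b""


@dataclass
class VoicePipeline:
    asr: Callable[[bytes], str]       # speech -> text
    nlu: Callable[[str], str]         # text -> intent label
    dialogue: Callable[[str], str]    # intent -> reply text
    tts: Callable[[str], bytes]       # reply text -> speech

    def handle(self, audio_in: bytes) -> Turn:
        turn = Turn(audio_in=audio_in)
        turn.transcript = self.asr(audio_in)
        turn.intent = self.nlu(turn.transcript)
        turn.reply_text = self.dialogue(turn.intent)
        turn.audio_out = self.tts(turn.reply_text)
        return turn


# Stub stages so the sketch runs end to end (assumptions, not real models).
def fake_asr(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio is already text


def fake_nlu(text: str) -> str:
    lowered = text.lower()
    if "reschedule" in lowered:
        return "reschedule"
    if "cancel" in lowered:
        return "cancel"
    if "appointment" in lowered:
        return "book"
    return "unknown"


def fake_dialogue(intent: str) -> str:
    replies = {
        "reschedule": "Sure, which day works better for you?",
        "cancel": "I can cancel that. Can you confirm the appointment date?",
        "book": "I can help you book a visit. Which provider would you like to see?",
    }
    return replies.get(intent, "Let me connect you with a staff member.")


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend the text is synthesized audio


pipeline = VoicePipeline(fake_asr, fake_nlu, fake_dialogue, fake_tts)
turn = pipeline.handle(b"I need to reschedule my appointment")
print(turn.intent, "->", turn.reply_text)
```

Keeping each stage behind a plain callable makes it easier to measure latency per stage and to swap one component without touching the others.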

What separates production-grade clinical voice assistants from consumer-grade equivalents is latency and accuracy under pressure. A patient calling to confirm a cardiology appointment expects a response that feels natural, not a 2-second pause after every utterance. Low-latency TTS synthesis is not a nice-to-have; it is the difference between a patient completing the interaction and hanging up. The architecture must also integrate with EHR systems, scheduling platforms, and notification infrastructure through secure APIs, which is where many early deployments have stumbled.

For teams building these systems, Smallest.ai's voice agents are designed specifically for real-time, low-latency conversational use cases, with speech models that handle the acoustic and linguistic complexity of clinical phone interactions.

Appointment Scheduling and Confirmation: Where Voice AI Earns Its Keep

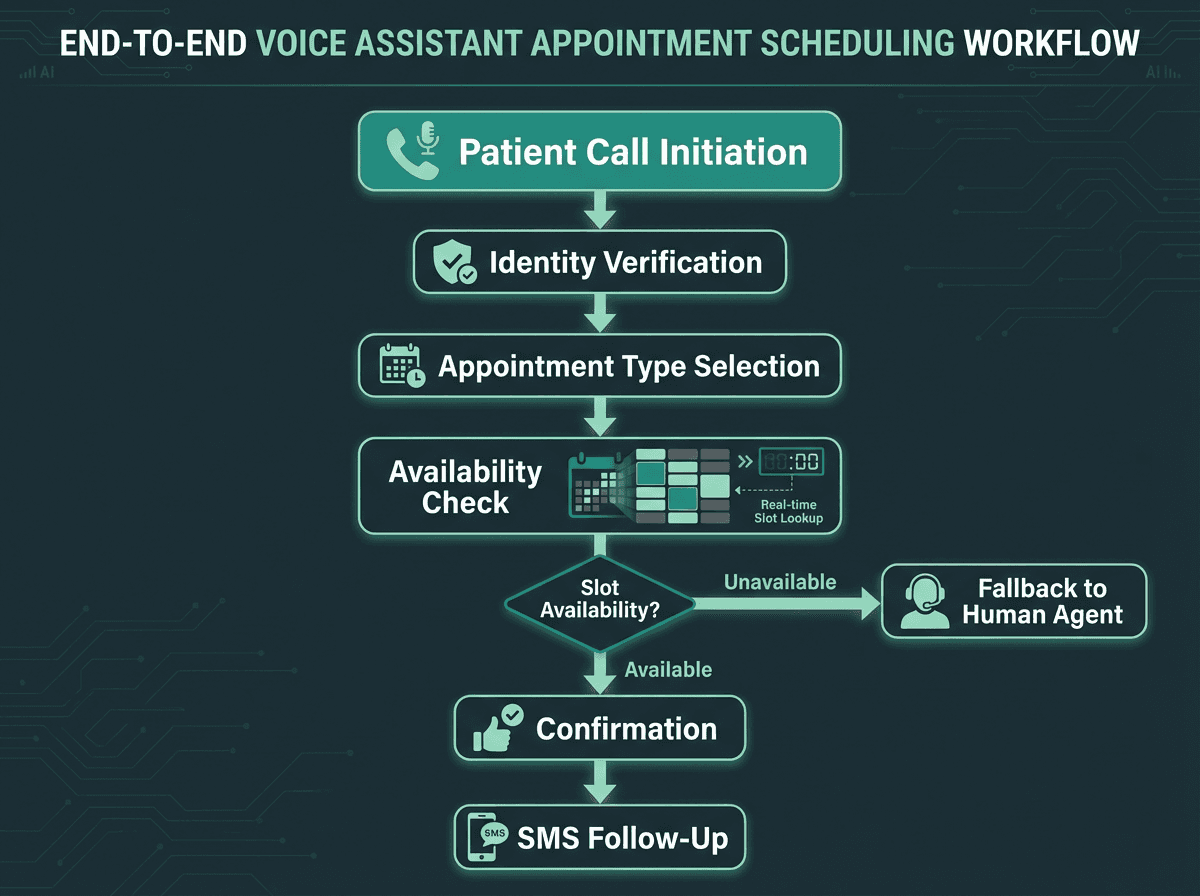

A well-designed scheduling flow handles the full interaction without human intervention in most cases.

Appointment scheduling is the highest-volume, lowest-complexity use case for healthcare voice assistants, which makes it the logical starting point for most deployments. A voice assistant can handle new appointment requests, reschedules, cancellations, and confirmations across multiple departments simultaneously, something no human call center can replicate at equivalent cost.

What a well-configured scheduling voice assistant handles without escalation:

New appointment booking with provider preference and insurance verification prompts

Automated confirmation calls 48 and 24 hours before scheduled visits

Cancellation and same-day reschedule requests with real-time slot availability

Waitlist management: notifying patients when earlier slots open

Multi-language support for diverse patient populations
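The 48-hour and 24-hour confirmation calls in the list above reduce to a small scheduling calculation. The sketch below assumes naive local datetimes and a hypothetical helper name `reminder_times`; a production scheduler would also need time zones, calling-hour restrictions, and patient contact preferences.

```python
from datetime import datetime, timedelta

REMINDER_OFFSETS_HOURS = (48, 24)


def reminder_times(appointment_at, now, offsets_hours=REMINDER_OFFSETS_HOURS):
    """Return the reminder call times that are still in the future, earliest first."""
    candidates = [appointment_at - timedelta(hours=h) for h in offsets_hours]
    return sorted(t for t in candidates if t > now)


appointment = datetime(2025, 3, 14, 9, 30)

# Booked four days ahead: both calls are still pending.
print(reminder_times(appointment, now=datetime(2025, 3, 10, 12, 0)))

# Booked the evening two days before: only the 24-hour call remains.
print(reminder_times(appointment, now=datetime(2025, 3, 12, 18, 0)))
```

Filtering out offsets that have already passed avoids placing a "48-hour" call for an appointment that was booked yesterday.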

The practical gains here are measurable. Appointment reminder systems have been shown to improve attendance and reduce missed appointments, especially when they make it easy for patients to confirm, cancel, or reschedule. The voice assistant does not get tired, does not forget to make the call, and does not need a lunch break. For high-volume outpatient settings, this alone can justify the deployment cost within a single quarter.

See how Smallest.ai voice agents handle real-time appointment workflows at scale.

Patient Intake: Moving the Paperwork Out of the Waiting Room

Pre-visit intake is one of the most friction-heavy moments in the patient journey. Paper forms, portal logins, and rushed in-office questionnaires all produce incomplete data and frustrated patients. A voice assistant can conduct a structured intake conversation before the patient arrives, collecting symptom history, medication lists, insurance updates, and consent confirmations through natural dialogue.

The key design consideration here is structured data extraction from unstructured speech. When a patient says 'I've been taking the blood pressure medication, the one my cardiologist prescribed last year,' the system needs to recognize this as a medication reference requiring clarification, not a complete answer. This requires NLU models trained on clinical language patterns, not general conversational data. The quality of the underlying speech model directly determines how much of the intake can be completed without human review.
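To make the medication example concrete, here is a deliberately small sketch of the "recognized but incomplete" case. Everything in it is an assumption for illustration: the four-drug vocabulary, the `MedicationSlot` type, and the keyword matching stand in for what would in practice be a clinical drug dictionary and a trained NLU model.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical, tiny vocabulary; a real system would use a clinical drug dictionary.
KNOWN_MEDICATIONS = {"lisinopril", "metoprolol", "amlodipine", "metformin"}
VAGUE_REFERENCE = re.compile(r"\b(medication|medicine|pills?|tablets?)\b", re.IGNORECASE)


@dataclass
class MedicationSlot:
    name: Optional[str]
    needs_clarification: bool
    follow_up: Optional[str] = None


def extract_medication(utterance: str) -> MedicationSlot:
    # A named drug fills the slot directly.
    for word in re.findall(r"[a-z]+", utterance.lower()):
        if word in KNOWN_MEDICATIONS:
            return MedicationSlot(name=word, needs_clarification=False)
    # A generic reference means the patient mentioned a drug without naming it.
    if VAGUE_REFERENCE.search(utterance):
        return MedicationSlot(
            name=None,
            needs_clarification=True,
            follow_up="Do you know the name of that medication, or can you read it from the label?",
        )
    # Nothing medication-related was said.
    return MedicationSlot(name=None, needs_clarification=False)


vague = extract_medication(
    "I've been taking the blood pressure medication, "
    "the one my cardiologist prescribed last year"
)
named = extract_medication("I take lisinopril every morning")
print(vague)
print(named)
```

The point of the three-way outcome is that the dialogue layer can ask a follow-up question instead of writing an incomplete answer into the intake record.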

The same operational patterns extend well beyond intake into chronic disease management and post-discharge follow-up; for a broader look, see how voice AI is transforming patient care across the full care continuum.

Automated Reminders: The Underrated Workhorse

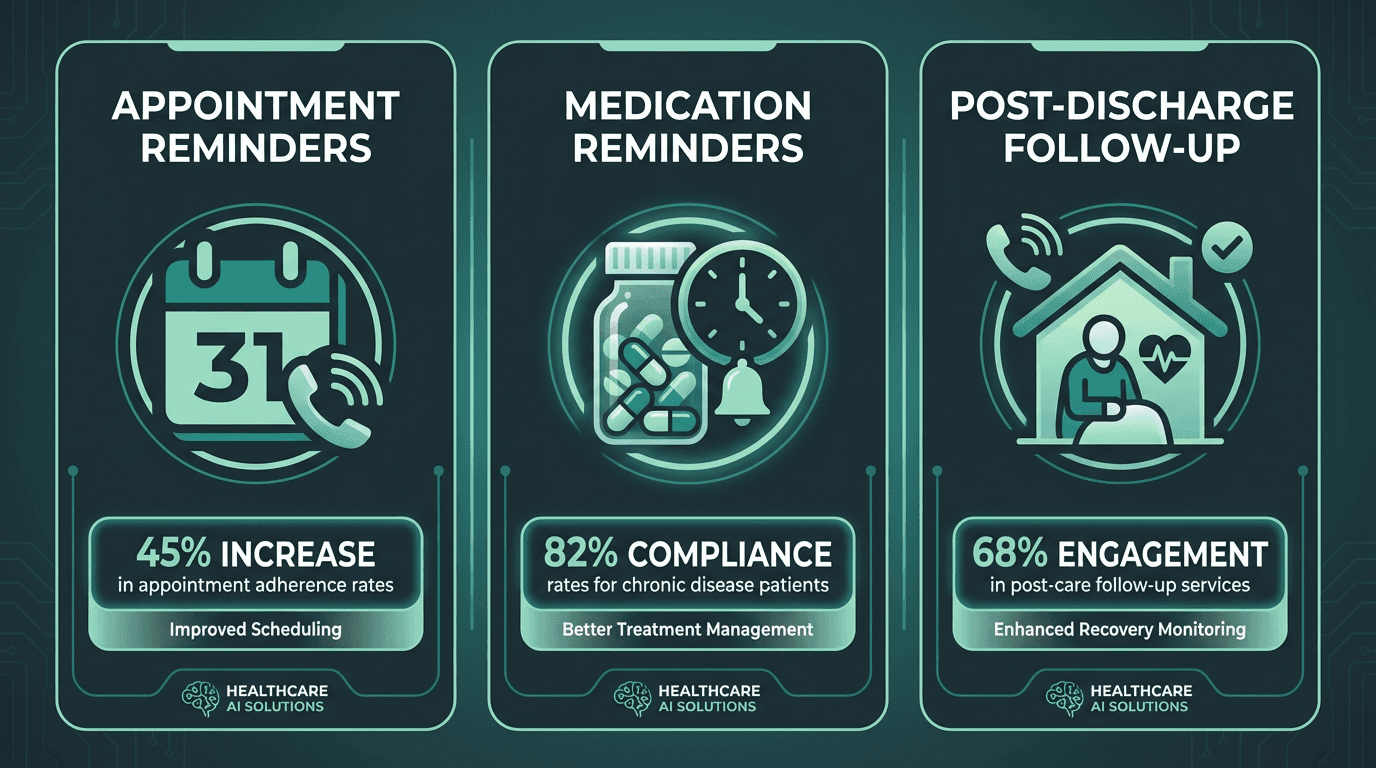

Automated voice reminders cover appointment, medication, and post-care follow-up scenarios.

Reminder calls are where voice assistants deliver value that is easy to quantify and hard to argue with. Medication adherence reminders for chronic disease patients, post-discharge follow-up calls, and preventive care outreach (annual screenings, vaccination schedules) are all high-value, low-complexity interactions that voice AI handles reliably.

What most implementations get wrong here is treating reminders as one-way broadcasts. The most effective reminder systems are conversational: the voice assistant calls, delivers the reminder, and then asks a simple confirmatory question, such as 'Did you take your medication this morning?' or 'Are you planning to attend your appointment on Thursday?' The patient's response is logged, and if it signals a problem (missed doses, intent to cancel), a flag is raised for clinical staff review. This turns a passive notification into an active data collection event.
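The log-and-flag step described above can be sketched in a few lines. The reply phrase sets, the `ReminderOutcome` record, and the rule that anything other than a clear "yes" goes to staff review are all assumptions chosen for illustration; a real system would classify replies with its NLU model rather than exact string matching.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical phrase lists; real replies are far more varied.
AFFIRMATIVE = {"yes", "yeah", "yep", "i did", "i am"}
NEGATIVE = {"no", "nope", "i forgot", "not yet", "i can't", "i cannot"}


@dataclass
class ReminderOutcome:
    patient_ref: str          # internal reference, not a name
    reminder_type: str        # e.g. "medication" or "appointment"
    reply: str
    classification: str       # "affirmative", "negative", or "unclear"
    flagged_for_review: bool
    logged_at: datetime


def classify_reply(reply: str) -> str:
    text = reply.strip().lower().rstrip(".!")
    if text in NEGATIVE:
        return "negative"
    if text in AFFIRMATIVE:
        return "affirmative"
    return "unclear"


def record_reminder_response(patient_ref: str, reminder_type: str, reply: str) -> ReminderOutcome:
    classification = classify_reply(reply)
    return ReminderOutcome(
        patient_ref=patient_ref,
        reminder_type=reminder_type,
        reply=reply,
        classification=classification,
        # Negative and unclear answers both go to staff rather than being dropped.
        flagged_for_review=classification != "affirmative",
        logged_at=datetime.now(timezone.utc),
    )


missed = record_reminder_response("p-001", "medication", "No.")
taken = record_reminder_response("p-002", "medication", "Yes")
print(missed.classification, missed.flagged_for_review)
print(taken.classification, taken.flagged_for_review)
```

Flagging unclear replies as well as negative ones is a conservative choice: it costs some staff review time but avoids silently treating an ambiguous answer as confirmation.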

Symptom Triage: Where the Stakes Are Higher

Triage is the most technically demanding and clinically sensitive application of voice AI in healthcare. The goal is not to replace clinical judgment but to perform initial symptom screening at scale, routing patients to the appropriate level of care before a clinician is involved. A patient calling after hours with chest tightness and shortness of breath needs a different response than one calling about a mild rash.

Effective triage voice assistants use validated clinical decision support logic (often based on established triage protocols like those from the American College of Emergency Physicians) mapped to conversational dialogue trees. The system asks structured questions, interprets responses against risk thresholds, and routes accordingly: emergency services, urgent care, next-day appointment, or self-care guidance. The NIH has funded research into voice assistant applications for cognitive assessment, including a grant to researchers at Dartmouth-Hitchcock and the University of Massachusetts Boston studying voice assistants for early detection of cognitive impairment (NIH Reporter, 2020), which illustrates the clinical depth these systems can reach.
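The routing step can be illustrated with a toy rule table. To be clear, the rules below are placeholders invented for this sketch and are not a clinical protocol; the symptom labels, the `Disposition` levels, and the "no match means hand off to a person" behavior are all assumptions that a clinical team would need to replace and validate.

```python
from enum import IntEnum
from typing import Iterable, Optional


class Disposition(IntEnum):
    """Care levels ordered by acuity so the highest match can be selected."""
    SELF_CARE = 0
    NEXT_DAY_APPOINTMENT = 1
    URGENT_CARE = 2
    EMERGENCY_SERVICES = 3


# Hypothetical placeholder rules: (symptoms that must all be present, disposition).
# NOT clinically validated; for illustrating the routing mechanics only.
RULES = [
    ({"chest tightness", "shortness of breath"}, Disposition.EMERGENCY_SERVICES),
    ({"high fever"}, Disposition.URGENT_CARE),
    ({"persistent cough"}, Disposition.NEXT_DAY_APPOINTMENT),
    ({"mild rash"}, Disposition.SELF_CARE),
]


def route(symptoms: Iterable[str]) -> Optional[Disposition]:
    """Return the highest-acuity matching disposition, or None if no rule matches.

    None means the assistant should not guess and should hand off to a person.
    """
    present = set(symptoms)
    matched = [disposition for required, disposition in RULES if required <= present]
    return max(matched) if matched else None


print(route({"chest tightness", "shortness of breath"}))
print(route({"mild rash", "high fever"}))
print(route({"dizziness"}))
```

Two design choices matter more than the rules themselves: when several rules match, the highest acuity wins, and when nothing matches, the system escalates instead of defaulting to self-care.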

The honest caveat: triage voice assistants require rigorous clinical validation before deployment. The dialogue logic must be reviewed by clinical staff, tested against real patient scenarios, and updated as protocols evolve. This is not a set-and-forget deployment. It requires ongoing governance.

For deployment frameworks covering complex healthcare environments, the enterprise voice AI assistant guide covers escalation architecture and governance in more detail.

HIPAA Compliance Is Not Optional, and It Is Not Simple

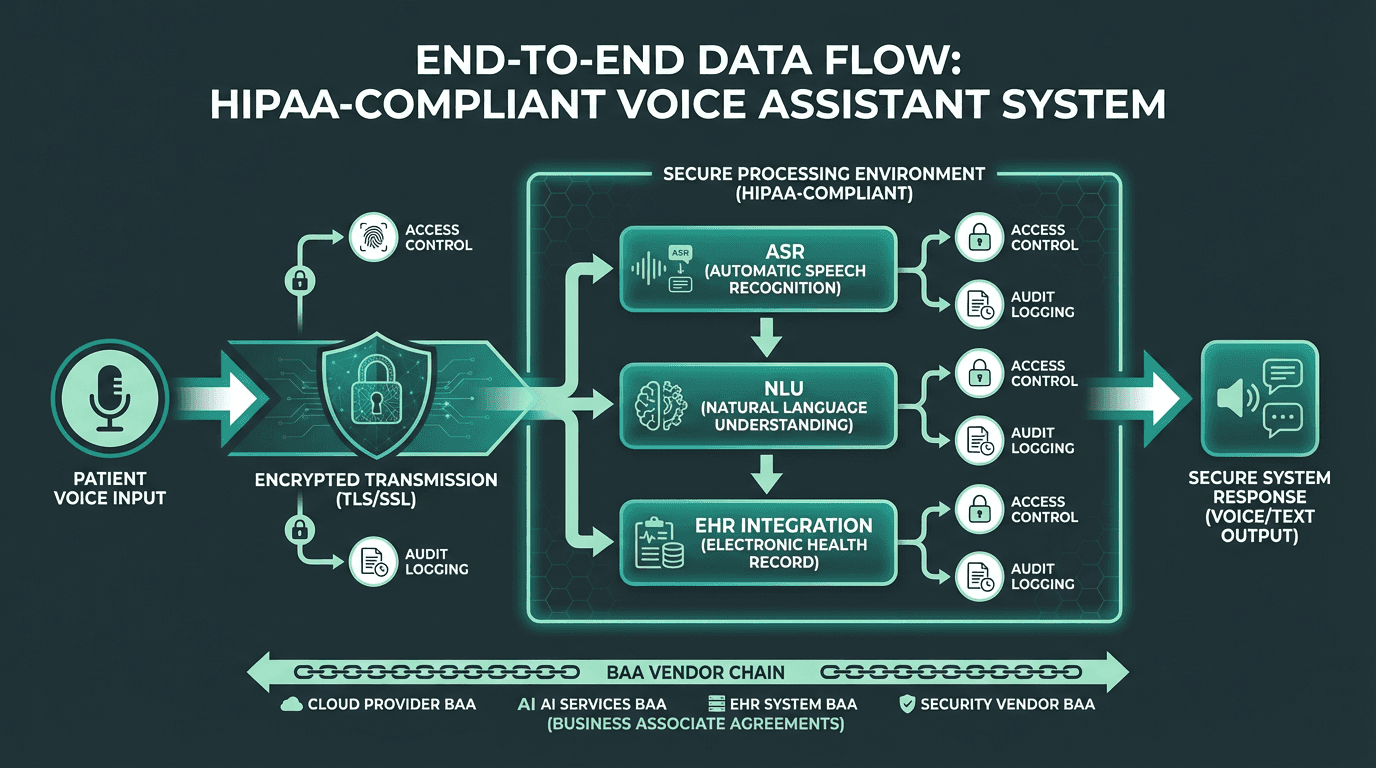

Every stage of voice data processing must meet HIPAA Security Rule requirements for PHI handling.

Any voice assistant that handles protected health information (PHI) is subject to HIPAA's Privacy Rule and Security Rule. This covers not just stored data but voice data in transit, ASR processing logs, and any outputs written to EHR systems. The requirements are specific: encryption in transit and at rest, access controls, audit logging, and business associate agreements (BAAs) with every vendor in the processing chain.
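Audit logging is the requirement that tends to be easiest to sketch. The example below shows one possible design, a hash-chained log in which each entry commits to the previous one so that later edits become detectable. HIPAA does not prescribe this particular mechanism; the function names, field names, and resource identifiers are hypothetical, and note that the entries record who touched which record, not the PHI itself.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


def _digest(event_body: dict) -> str:
    payload = json.dumps(event_body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_audit_event(log: list, actor: str, action: str, resource: str) -> dict:
    """Append an entry that includes the hash of the previous entry."""
    event = {
        "at": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "resource": resource,  # an identifier only, never the PHI content
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    event["hash"] = _digest(event)
    log.append(event)
    return event


def verify_chain(log: list) -> bool:
    """Recompute every hash and check that each entry links to the one before it."""
    prev_hash = GENESIS_HASH
    for event in log:
        body = {k: v for k, v in event.items() if k != "hash"}
        if event["prev_hash"] != prev_hash or _digest(body) != event["hash"]:
            return False
        prev_hash = event["hash"]
    return True


audit_log = []
append_audit_event(audit_log, "voice-agent", "read", "appointment/apt-123")
append_audit_event(audit_log, "nurse-42", "read", "call-transcript/call-789")
print(verify_chain(audit_log))
```

An in-memory list is used here only to keep the sketch self-contained; in practice the log would live in append-only storage that the compliance team can query.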

For teams evaluating voice AI vendors, the BAA question is the first filter. If a vendor cannot or will not sign a BAA, the conversation ends there. Beyond that, the architecture must be auditable: you need to know exactly where voice data is processed, how long it is retained, and who has access to it. Cloud-based ASR services that route audio through third-party infrastructure introduce compliance complexity that on-premise or private cloud deployments avoid. The detailed requirements for building HIPAA-compliant voice AI in healthcare are worth reviewing before any vendor selection process begins.

Implementation Considerations That Rarely Make It Into Vendor Decks

EHR integration is consistently underestimated. Most health systems run Epic, Oracle Health (formerly Cerner), or Meditech, and each has its own API patterns, rate limits, and data models. A voice assistant that cannot write confirmed appointments back to the EHR in real time creates a reconciliation problem that erodes clinical trust quickly. Insist on seeing a working EHR integration, not a roadmap item, before signing a contract.
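Rate limits are one of the first things a write-back integration runs into. The sketch below shows a generic retry with exponential backoff around a write call. It does not use any real EHR vendor API: `RateLimited`, `write_with_backoff`, and `flaky_write` are hypothetical names, and the `write` argument stands in for whatever client function your integration exposes.

```python
import time


class RateLimited(Exception):
    """Hypothetical error a client wrapper might raise on an HTTP 429 response."""


def write_with_backoff(write, payload, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call write(payload), retrying on RateLimited with delays of 0.5s, 1s, 2s, ..."""
    for attempt in range(1, max_attempts + 1):
        try:
            return write(payload)
        except RateLimited:
            if attempt == max_attempts:
                raise  # give up and let the caller queue the write for reconciliation
            sleep(base_delay * 2 ** (attempt - 1))


# Simulated EHR client that rejects the first two attempts.
attempts = []


def flaky_write(payload):
    attempts.append(payload)
    if len(attempts) < 3:
        raise RateLimited()
    return {"status": "written", **payload}


delays = []  # capture the delays instead of actually sleeping
result = write_with_backoff(flaky_write, {"appointment_id": "apt-123"}, sleep=delays.append)
print(result, delays)
```

Retries are only safe if the write is idempotent, for example keyed on an appointment identifier, so that a retried request cannot create a duplicate booking.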

Fallback design matters more than primary flow design. The primary flow (patient calls, assistant handles it, interaction completes) works well when it works. But patients will say unexpected things, connections will drop, and edge cases will appear. The fallback to a human agent must be fast, warm (the agent receives context from the voice session), and available. A cold transfer that makes the patient repeat everything they just said to the voice assistant is worse than having no voice assistant at all.
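A warm transfer comes down to passing a compact context record to the human agent. The sketch below shows one plausible shape for that record; the `HandoffContext` fields and the choice to include only the last few transcript turns are assumptions, not a standard format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HandoffContext:
    session_id: str
    reason: str                       # why the assistant is escalating
    intent: str                       # what the patient was trying to do
    collected: dict = field(default_factory=dict)        # details already confirmed
    transcript_tail: list = field(default_factory=list)  # most recent turns only


def build_handoff(session_id, reason, intent, collected, transcript, max_turns=4):
    """Package what the agent needs so the patient does not have to repeat it."""
    return HandoffContext(
        session_id=session_id,
        reason=reason,
        intent=intent,
        collected=dict(collected),
        transcript_tail=list(transcript[-max_turns:]),
    )


transcript = [
    "assistant: How can I help you today?",
    "patient: I need to move my appointment.",
    "assistant: Which appointment would you like to move?",
    "patient: The one with cardiology on Thursday.",
    "assistant: What day works better?",
    "patient: Actually, can I just talk to someone?",
]
context = build_handoff(
    session_id="sess-001",
    reason="patient requested a human",
    intent="reschedule",
    collected={"department": "cardiology", "current_day": "Thursday"},
    transcript=transcript,
)
handoff_json = json.dumps(asdict(context), indent=2)
print(handoff_json)
```

Because this payload contains PHI, it needs the same encryption, access control, and audit treatment as the rest of the call data.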

Pre-deployment checklist for healthcare voice assistant projects:

BAA signed with all vendors handling voice or PHI data

EHR write-back tested in staging environment against real appointment types

Clinical review of triage dialogue logic completed and documented

Fallback-to-human transfer tested for latency and context handoff

Multi-language support validated for your patient population demographics

Staff training completed on how to review flagged interactions and override decisions

Audit logging configured and accessible to compliance team

Ready to evaluate Smallest.ai speech models for your healthcare voice assistant build?

Key Takeaways

Voice assistants in healthcare are not a single product category. They are a set of capabilities (scheduling, intake, reminders, triage) that each require different levels of clinical validation, compliance rigor, and technical integration depth. The market growth projections from Mordor Intelligence and others reflect real adoption pressure, but successful deployments are the ones that start with a specific, measurable workflow problem and build outward from there.

The technology infrastructure underneath these systems matters enormously. Low-latency speech synthesis, accurate clinical ASR, and secure API architecture are not differentiators in a marketing sense; they are table stakes for a system that patients and clinicians will actually trust. Smallest.ai builds the speech model layer that powers production-grade voice agents for exactly these environments: Pulse STT for clinical-grade transcription accuracy on telephony audio, and Lightning TTS for sub-100ms synthesis latency that keeps patient interactions feeling natural. If your team is building or evaluating a healthcare voice assistant, start by testing the speech infrastructure against your own patient audio: that is where the real performance gap between vendors becomes visible.

What is a voice assistant in healthcare, and how does it differ from a standard IVR system?

Can a voice assistant handle after-hours triage calls safely?

What does HIPAA compliance require for a voice assistant handling patient data?

At minimum: encryption of voice data in transit and at rest, access controls limiting who can retrieve interaction logs, audit trails for all PHI access, and a signed Business Associate Agreement with every vendor in the processing chain. The HIPAA-compliant voice AI requirements also extend to how long voice recordings are retained and whether ASR processing occurs on infrastructure you control or a third party's.

What languages and accents can healthcare voice assistants support?