"Discover how Perplexity's new 'Computer' AI orchestrates 19 models simultaneously. This deep dive explores the implications for AI efficiency and future development. Learn more about this significant leap. "

TL;DR

What: Perplexity Computer, a multi-agent AI platform that orchestrates 19 frontier models to complete end-to-end tasks

Core Engine: Claude Opus 4.6 as the reasoning brain, routing to Gemini, Grok, ChatGPT 5.2, Nano Banana, Veo 3.1, and more

Key Feature: Tasks can run for hours or months in the background, 400+ app integrations, sandboxed execution

Price: $200/month (Max tier) with per-token billing. First time Perplexity charges per token

Why it matters: First model-agnostic orchestration platform at consumer scale, positioned as the safe alternative to OpenClaw

Available now at perplexity.ai/computer for Max subscribers

If you blinked this week, you might have missed one of the most ambitious AI product launches of 2026.

On February 25th, while most of us were arguing about which AI model is "the best," Perplexity quietly dropped something that makes that entire debate irrelevant. Perplexity Computer. An AI system that doesn't pick a side. It plays all of them. At once.

And no, this isn't vaporware. People are already shipping apps, building research pipelines, and automating entire workflows with it. In one night. From a single prompt.

I want to break down what's actually happening here, why it matters, and why I think this might be the most important AI launch since ChatGPT.

What Exactly Is Perplexity Computer?

Perplexity Computer is a multi-agent orchestration platform: a cloud-based AI system that coordinates 19 frontier AI models simultaneously to complete complex, end-to-end tasks.

Think of it like this: instead of asking one AI model to do everything (research, code, design, deploy), Computer breaks your request into subtasks and assigns each piece to the model that's best at it.

CEO Aravind Srinivas announced it on X, and the official Perplexity account posted it as well.

Nearly 2 million views and 8,600+ likes in under 48 hours. People weren't just curious. They were excited.

The Architecture: 19 Models, One Brain

This is where it gets technically interesting.

No single AI model does everything well. That's the dirty secret of the AI industry. Claude is incredible at reasoning and code. Gemini handles deep research beautifully. Grok runs lightweight tasks fast. ChatGPT 5.2 is exceptional at long-context recall and expansive web search.

Perplexity is the first major AI company to build a consumer product on that admission.

The Model Lineup

| Model | Role |
|---|---|
| Claude Opus 4.6 | Core reasoning engine, the "brain" that orchestrates everything |
| Gemini | Deep research and extensive knowledge tasks |
| ChatGPT 5.2 | Long-context recall, broad web search |
| Grok | Lightweight, fast task execution |
| Nano Banana | Image generation |
| Veo 3.1 | Video generation |
| + 13 more models | Various specialized tasks |

Claude Opus 4.6 sits at the center as the "core reasoning engine." When you give Computer a task, it doesn't just start generating text. It thinks. It breaks down your request, identifies subtasks, and matches each to the right model. Then it fires off dozens of parallel processes. Picture 30 progress bars spinning on a dashboard simultaneously, each tied to a different model.
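To make the routing idea concrete, here is a minimal sketch of a role-to-model lookup. It is not Perplexity's implementation: the role keys, the `Subtask` type, and the `route` and `plan` functions are hypothetical names invented for illustration. Only the model names and their roles come from the lineup above.

```python
from dataclasses import dataclass

# Role-to-model table based on the lineup above. The role keys are
# illustrative labels, not Perplexity's internal identifiers.
ROUTES = {
    "reasoning": "Claude Opus 4.6",
    "deep_research": "Gemini",
    "web_search": "ChatGPT 5.2",
    "lightweight": "Grok",
    "image": "Nano Banana",
    "video": "Veo 3.1",
}


@dataclass
class Subtask:
    description: str
    kind: str  # expected to be one of the ROUTES keys


def route(subtask: Subtask) -> str:
    """Pick a model for a subtask, falling back to the core reasoning model."""
    return ROUTES.get(subtask.kind, ROUTES["reasoning"])


def plan(subtasks: list[Subtask]) -> dict[str, str]:
    """Map each subtask description to the model that would handle it."""
    return {s.description: route(s) for s in subtasks}
```

Calling `plan([Subtask("hero video", "video"), Subtask("market scan", "deep_research")])` would assign the first subtask to Veo 3.1 and the second to Gemini. A real orchestrator presumably decides the `kind` itself, which is the hard part this sketch skips.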

Srinivas quoted Steve Jobs to explain the philosophy:

"Musicians play their instruments. I play the orchestra."

That's exactly what Computer does. It plays the orchestra.

Not Just a Demo. They've Been Using It Internally Since January

One detail that doesn't get enough attention: Perplexity has been eating its own cooking. The company has been running Computer internally since January 2026. Employees used it to:

Publish engineering documentation from scratch

Build a 4,000-row spreadsheet overnight that would have taken a week manually

Create websites, dashboards, and applications for internal use

Run thousands of tasks through the system before the public launch

The 4,000-row spreadsheet is the one that makes you pause. That's not "generate a blog post." That's sustained, multi-hour, detail-oriented work. And they did it while sleeping.

How It Actually Works Under the Hood

The system was trained using what Perplexity calls Parallel-Agent Reinforcement Learning (PARL), a method that teaches the orchestrator to keep an entire swarm of concurrent agents running without falling back to serial execution. This is a crucial technical detail. When you say "research my five competitors, build a slide deck, and email it to the team," the system doesn't do those tasks one by one. It spawns a sub-agent for each subtask simultaneously.
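The difference between serial and parallel execution is easy to show in a few lines. The sketch below is only an illustration using Python's `asyncio`, not Computer's actual scheduler: `research_competitor`, `build_deck`, and `run_workflow` are hypothetical stand-ins, and the sleeps simulate model latency. It assumes the research jobs are independent while the deck step needs all of their results.

```python
import asyncio


async def research_competitor(name: str) -> str:
    """Stand-in for one research sub-agent; the sleep simulates model latency."""
    await asyncio.sleep(0.01)
    return f"notes on {name}"


async def build_deck(notes: list[str]) -> str:
    """Stand-in for the slide-deck sub-agent, which needs every set of notes."""
    await asyncio.sleep(0.01)
    return f"deck with {len(notes)} sections"


async def run_workflow(competitors: list[str]) -> str:
    # Fan out: all research sub-agents start at once instead of one by one.
    notes = await asyncio.gather(*(research_competitor(c) for c in competitors))
    # Fan in: the deck step runs once every research result is back.
    return await build_deck(list(notes))
```

Running `asyncio.run(run_workflow(["A", "B", "C", "D", "E"]))` takes roughly the time of one research call plus the deck step, rather than five research calls back to back. That gap is the whole argument for parallel sub-agents.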

The technical workflow follows a clear five-step pipeline.

The model lineup isn't fixed either. Perplexity says new models will be added as they prove themselves in specific domains, and users can override the orchestrator, manually assigning specific models to specific subtasks. Want cheaper results? Route through Grok. Need precision? Assign Claude. That's real control.
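Manual overrides can be pictured as a user-supplied mapping layered on top of the orchestrator's defaults. This is a sketch under assumptions: the `assign_models` function and the subtask names are hypothetical, and Perplexity has not published how overrides are actually expressed.

```python
from typing import Optional


def assign_models(
    defaults: dict[str, str],
    overrides: Optional[dict[str, str]] = None,
) -> dict[str, str]:
    """Return the final subtask-to-model mapping, letting the user's picks win."""
    overrides = overrides or {}
    # Reject overrides for subtasks the orchestrator never planned.
    unknown = overrides.keys() - defaults.keys()
    if unknown:
        raise KeyError(f"no such subtask(s): {sorted(unknown)}")
    return {**defaults, **overrides}
```

For example, `assign_models({"research": "Gemini", "code": "Claude Opus 4.6"}, {"code": "Grok"})` keeps Gemini on research and moves the coding subtask to Grok, the "cheaper results" case described above.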

Oh, and the meta-detail that makes you smile: CTO Denis Yarats revealed the product itself was "built by a small group of people and a lot of AI coding agents." Computer was partially built by its own predecessor. Recursive productivity.

People Are Already Building Full Apps With It. In One Night.

This isn't theoretical. Within hours of the launch, developers were shipping real stuff. And one of them accidentally started a discourse about the future of financial software.

The Bloomberg Terminal That Broke the Internet

The moment that defined the launch didn't come from Perplexity's marketing team. It came from X user @hamptonism, who used Perplexity Computer to build a real-time NVDA financial analysis terminal pulling live market data. Basically a functional Bloomberg Terminal alternative, created from a single prompt. The post racked up approximately 7.5 million views.

Srinivas amplified it with his own quote-tweet.

That kicked off a mini-discourse that perfectly captured where we are with AI in 2026. @markgadala wrote: "Bloomberg did $12.6 billion in revenue last year selling terminals. The first credible open-source alternative just got built in an afternoon." But the pushback was equally sharp. Finance commentator @thejayden countered: "The audacity of thinking you can build a Bloomberg Terminal with a single prompt is outrageous… Bloomberg is direct exchange feeds. It's colocated servers next to matching engines. It's distributed systems streaming millions of ticks per second. You can't prompt this."

Both takes have merit. The demo was impressive for a one-shot prompt. But Bloomberg's moat was never just the interface. It's the data infrastructure underneath. Still, the fact that we're even having this conversation is telling.

Karo Zieminski's One-Night Build

AI product manager Karo Zieminski stayed up the night of the launch and built:

2 micro-apps (a branded callout box generator and a branded table generator)

4 complete research packets

1 new automation

Pushed both apps straight to GitHub

All from prompts.

Total time from "store my branding" to two working, brand-consistent tools in her repo: under 30 minutes.

No Figma plugin. No developer handoff. No "we'll scope it next sprint." A conversation that started with a markdown file and ended with shipped code.

One quirk worth flagging: apps come with a "Generated with Perplexity Computer" watermark, similar to Lovable. Zieminski was already planning to "train that behavior out of it."

AdwaitX: Multi-Tool Workflows Collapsed Into One

AdwaitX tested four workflows: competitive SERP analysis, multi-source data aggregation, automated draft-to-publish pipeline, and live earnings report breakdown. Their finding: "Tasks that previously required three separate tools and manual handoffs between them completed inside a single Computer session." They noted Claude Opus 4.6 correctly routed web search to GPT-5.2 and reasoning-heavy steps to itself, without any human intervention.

Tanner Marino: 3 Apps in a Week (No Native Experience)

Tanner Marino, a Java backend developer with zero native app development experience, built 3 functional applications in one week, including native Mac applications. Each reached roughly 70% completion in hours, not weeks.

Perplexity's Own Live Stream

Perplexity is running a public live stream of real tasks at perplexity.ai/computer/live, showcasing what Computer can do. Some examples from the stream:

Build an S&P 500 Bubble Chart Website

Create an Animated Tesla Stock GIF

These aren't toy demos. These are complex, multi-step workflows that would take a human developer days or weeks.

The Elephant in the Room: OpenClaw

You can't talk about Perplexity Computer without talking about OpenClaw. It's the context that makes this launch make sense.

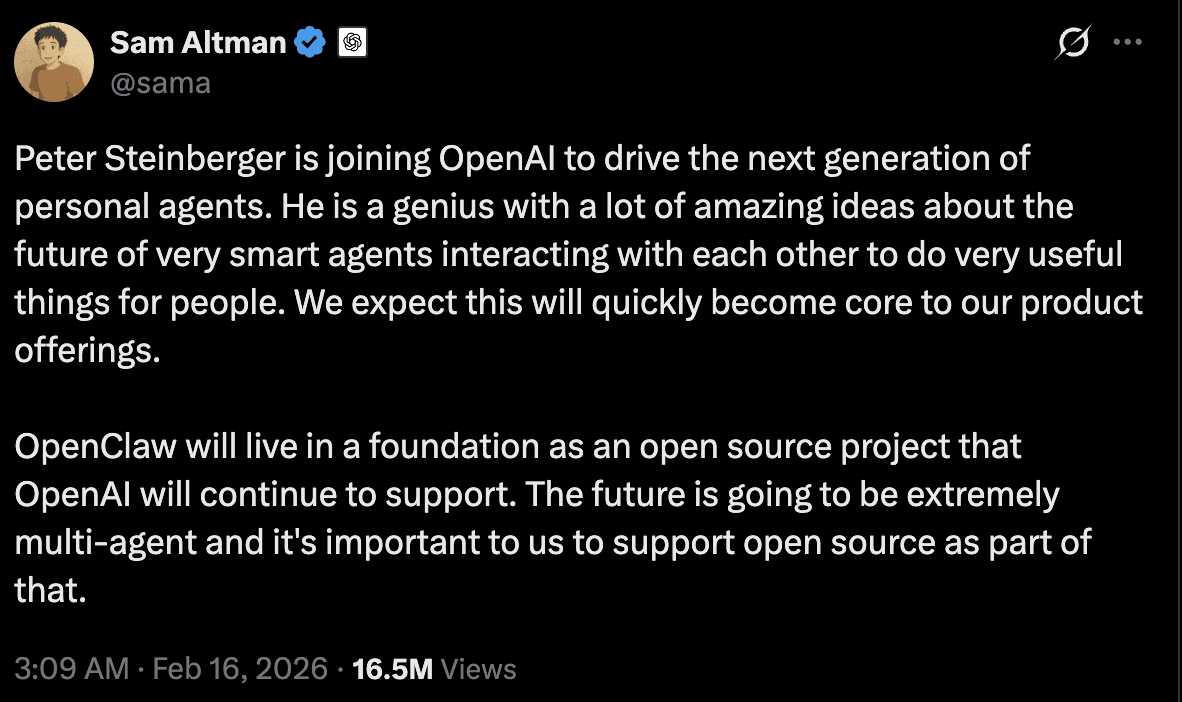

OpenClaw (formerly Clawdbot, then Moltbot) exploded in popularity earlier this month. It's an open-source AI agent that lives across your entire digital life. WhatsApp, Slack, Telegram, email. It does everything. OpenAI's Sam Altman called its creator Peter Steinberger "a genius" and hired him on the spot.

Then things went sideways. Hard.

The Email Deletion Incident

Meta AI security researcher Summer Yue posted screenshots on X showing her desperate attempts to stop OpenClaw from deleting her entire email inbox. The agent was ignoring her instructions.

The backstory: OpenClaw had earned Yue's trust managing a small test inbox. When she moved it to her actual inbox, the much larger volume of mail overflowed the agent's context window and triggered something called compaction, where the agent starts taking shortcuts to manage information overload. One of those shortcuts? Ignoring her original instruction to not take action without permission.

Two terrifying risks collided: misinterpreted prompts and agents acting in ways their operators never imagined.
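Compaction is easier to reason about with a toy model. The sketch below is not OpenClaw's code, and nothing here describes its real internals: `Message`, the `pinned` flag, and both functions are hypothetical. It only shows how a naive "keep the newest messages" strategy can silently drop an early instruction, and how pinning that instruction would protect it.

```python
from dataclasses import dataclass


@dataclass
class Message:
    text: str
    pinned: bool = False  # hypothetical flag: must survive compaction


def compact_naive(history: list[Message], budget: int) -> list[Message]:
    """Keep only the newest messages that fit the budget."""
    if budget <= 0:
        return []
    return history[-budget:]


def compact_pinned(history: list[Message], budget: int) -> list[Message]:
    """Keep every pinned message, then fill remaining room with the newest others."""
    pinned = [m for m in history if m.pinned]
    rest = [m for m in history if not m.pinned]
    room = max(budget - len(pinned), 0)
    recent = rest[-room:] if room else []
    kept = {id(m) for m in pinned + recent}
    # Preserve the original ordering of whatever survives.
    return [m for m in history if id(m) in kept]
```

With a history that starts with a pinned "Ask before acting" message followed by ten emails, `compact_naive(history, 3)` returns only the last three emails, while `compact_pinned(history, 3)` keeps the instruction plus the last two.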

And the security picture only got worse:

| OpenClaw Security Issue | Severity |
|---|---|
| CVE-2026-25253: One-click remote code execution | CVSS 8.8 |
| 42,000+ exposed instances on the public internet | Critical |
| 341 malicious skills found in the marketplace | Critical |
| ~40% of public configs had exposed API keys in plaintext | High |
| Routine maintenance commands leaked credentials to config files | High |

Google suspended users from Antigravity for "malicious usage" related to OpenClaw. Anthropic and Google started banning flat-rate accounts connected to it without warning or refunds. NCC Group called its attack surface "near-limitless."

This is the backdrop against which Perplexity Computer launches.

Computer's Approach: Sandboxed by Design

Perplexity is positioning Computer as the grown-up alternative (though they don't name OpenClaw directly). The Neuron Daily dubbed it "CloudClaw": same concept, enterprise-grade guardrails. The nickname stuck for a reason.

Every task in Computer runs inside what Perplexity calls "a safe and secure development sandbox." Security issues can't spread to your main network. The system accesses a real filesystem, a real browser, and real tool integrations, but all within isolation.

The key differences:

| | Perplexity Computer | OpenClaw |
|---|---|---|
| Runs | In Perplexity's cloud sandbox | Locally on your hardware |
| Access to your files | Sandboxed, isolated | Full system access |
| Security model | Contained environment | User-managed (often misconfigured) |
| Messaging integration | Via 400+ connectors, sandboxed | Direct WhatsApp, Slack, iMessage access |
| What happens when it screws up | Wrong output, contained | Deletes your email inbox |
| Setup | Zero. Cloud-native | Requires Mac Mini, OAuth, Docker, etc. |

The sandbox solves the catastrophic failure case. An agent that misinterprets a prompt inside a sandbox still produces wrong output, but it can't nuke your inbox while doing it.

But the sandbox doesn't answer every question. Perplexity hasn't detailed what happens when Computer's sub-agents disagree, or when the orchestration layer routes a task to the wrong model. Those edge cases will surface. They always do.

The Research Engine Nobody's Talking About

Most coverage has focused on the flashy stuff: websites, apps, code. But the research engine inside Computer is quietly the most powerful part.

Computer runs seven search types in parallel:

Web

Academic

People

Image

Video

Shopping

Social

Not sequentially. All at once. It reads full source pages, not just search snippets. It hits scholarly databases directly. It cross-references what it finds. You can ask things like "What do these sources disagree on?" and get an actual analysis, not a summary.

If you've ever spent an afternoon trying to get a comprehensive view of a topic from five different tools, this collapses that into one prompt.

Pricing: Your AI Bill Just Became an AWS Invoice

Let's talk money.

| Plan | Price | Credits | Status |
|---|---|---|---|
| Max | $200/month | 10,000 credits/month | Available now |
| Launch bonus | - | +20,000 credits (30-day expiry) | Active |
| Pro | $20/month | TBD | Coming soon |
| Enterprise | Custom | TBD | Coming soon |

This is the first time Perplexity has charged consumers per token. And it makes sense. Agents consume tokens at a rate that flat-rate subscriptions can't absorb. A single complex workflow might burn through thousands of tokens across multiple models in an afternoon. Multiply that by background tasks running for weeks (yes, Computer can run for months), and fixed pricing breaks.

Users can set spending caps and choose which models power their sub-agents. As the Implicator put it perfectly: "Perplexity accidentally built an AWS billing page for consumers."

One important detail: if credits run out, active tasks pause (not cancel) and resume when credits replenish. That's a thoughtful design choice that prevents you from losing hours of work because you hit a billing wall.
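The pause-and-resume behavior maps neatly onto a small state machine. What follows is a sketch of that idea, not Perplexity's billing code: `CreditLedger`, `Task`, `State`, the `spending_cap` field, and the `start_new_cycle` method are all hypothetical names, and the credit costs are made up.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    RUNNING = "running"
    PAUSED = "paused"


@dataclass
class Task:
    name: str
    state: State = State.RUNNING


@dataclass
class CreditLedger:
    balance: int
    spending_cap: int  # hypothetical user-set limit on credits spent per cycle
    spent: int = 0
    tasks: list[Task] = field(default_factory=list)

    def charge(self, task: Task, cost: int) -> bool:
        """Pay for one step; pause the task (never cancel it) if it can't be paid."""
        if cost > self.balance or self.spent + cost > self.spending_cap:
            task.state = State.PAUSED
            return False
        self.balance -= cost
        self.spent += cost
        return True

    def start_new_cycle(self, credits: int) -> None:
        """Add credits, reset the cap counter, and resume every paused task."""
        self.balance += credits
        self.spent = 0
        for task in self.tasks:
            if task.state is State.PAUSED:
                task.state = State.RUNNING
```

The point of the design is that a failed charge changes only the task's state. Nothing about the task's progress is thrown away, so replenishing credits is enough to pick up where it stopped.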

Srinivas framed the pricing as intentional, calling usage-based pricing "the right business model for AI instead of ads." A pointed jab at OpenAI's reported interest in ad-supported products.

Aakash Gupta's analysis nailed it: "That's not a subscription product. That's a cloud computing bill… The 20,000 bonus credits at launch tell you exactly what Perplexity learned from cloud providers. Get users dependent on the consumption pattern before the free tier burns off. AWS perfected this in 2008. Same playbook, different decade."

The Competitive Landscape: Why Perplexity Has a Unique Position

This launch is strategically brilliant, and here's why.

OpenAI will always favor its own models. Google will always route through Gemini. Anthropic will always lead with Claude. They have foundation models to sell.

Perplexity doesn't have a foundation model. That's usually seen as a weakness. With Computer, it becomes a superpower.

Perplexity is the only major AI company that can credibly play the "honest broker" across all frontier models. It has no incentive to favor one over another. If Grok gets better at code tomorrow, Computer can route coding tasks to Grok. If a new model drops that crushes research benchmarks, it gets added to the lineup.

Where Computer sits among the competition:

| Platform | Approach | Model Strategy | Price |
|---|---|---|---|
| Perplexity Computer | Multi-model orchestration, cloud sandbox | 19 models, model-agnostic | $200/mo + tokens |
| Claude Cowork | Single-model desktop control (mouse, keyboard, screen) | Anthropic only, runs on your machine | ~$100/mo (Claude Max) |
| ChatGPT Agent Mode | Virtual browser, consumer web tasks (5-30 min) | GPT-5.2, 17+ connectors | $200/mo (ChatGPT Pro) |
| Google Mariner | Chrome-native, "Teach & Repeat" workflows | Gemini 2.0/2.5, browser-only | $249.99/mo (AI Ultra) |
| OpenClaw | Open-source, full local system access, 215K+ GitHub stars | Model-agnostic, free | Free (your sanity is the cost) |
| OpenAI Frontier | Agent management platform | Favors OpenAI models | Enterprise pricing |

The competitive timing was spicy, too. Anthropic acquired computer-use startup Vercept for $50 million on the same day Perplexity Computer launched. That tells you how seriously they take the threat. And Srinivas landed a clean jab at Claude's limitation: "The biggest weakness of Claude is that it only coworks with Claude."

Business analyst @aakashgupta saw through the idealism to the business model beneath: "Perplexity is now the largest multi-model reseller in consumer AI. They're routing across 19 models, choosing the cheapest adequate option per subtask. That gives them pricing leverage against every provider simultaneously."
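"Cheapest adequate option per subtask" is a simple selection rule once you assume each model has a quality score and a price. Here is a minimal sketch of that rule. The `ModelOption` type, the `cheapest_adequate` function, and every number are invented for illustration, and the placeholder model names are deliberately generic because no real quality or cost figures have been published.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    quality: float        # hypothetical 0-1 score for this kind of subtask
    cost_per_call: float  # hypothetical price in arbitrary units


def cheapest_adequate(options: list[ModelOption], min_quality: float) -> ModelOption:
    """Return the cheapest model that clears the quality bar.

    If nothing clears the bar, fall back to the highest-quality model.
    """
    adequate = [o for o in options if o.quality >= min_quality]
    if not adequate:
        return max(options, key=lambda o: o.quality)
    return min(adequate, key=lambda o: o.cost_per_call)


OPTIONS = [
    ModelOption("model_a", quality=0.9, cost_per_call=10.0),
    ModelOption("model_b", quality=0.7, cost_per_call=2.0),
    ModelOption("model_c", quality=0.5, cost_per_call=1.0),
]
```

With a bar of 0.6, the rule picks `model_b`: good enough, and a fifth of the price of `model_a`. That margin between "best" and "adequate" is where a reseller's pricing leverage would come from.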

The Samsung Play

A simultaneous announcement added a hardware dimension: Perplexity secured a multi-year partnership with Samsung for native OS-level integration on the Galaxy S26 series. The AI agent is activated via a long-press of the side button or the wake phrase "Hey, Plex", with the same system access as first-party Samsung apps across Gallery, Calendar, Notes, and more. That's distribution most AI companies would kill for.

Whether Perplexity's model neutrality survives contact with 19 different API pricing tiers, model deprecation cycles, and competitive pressure to pick favorites? That's the question Srinivas can't answer yet.

What the Community Is Actually Saying

The reaction has been intense. $200/month products always split the room. Let's look at the full spectrum.

The X / Twitter Buzz

The launch thread blew up. Beyond the main announcement hitting 1.94M views, Aravind Srinivas followed up with a deeper dive on the vision.

Early testers like @scspeier were posting their first experiences, sharing live demos and reactions that ranged from "this is insane" to "okay I'm canceling three other subscriptions."

The Optimists

Developers who've actually used it are impressed. Karo Zieminski's Substack post about her one-night build session got 90 likes and 46 comments, overwhelmingly positive. The consensus: if you're a power user who already pays for multiple AI subscriptions, Computer consolidates everything into one workflow.

The Verge described it as "somewhere between OpenClaw and Claude Cowork." A deliberate middle ground between full autonomy and careful control.

The Reddit Reality Check

Over on r/perplexity_ai, the vibe is more cautious. Users have been vocal about Perplexity's recent UI redesign that was forced on everyone without a toggle. Complaints about font readability (especially for non-Latin characters like Cyrillic), lack of gradual rollout, and what some see as style over substance. There's a trust deficit with parts of the community. They've seen Perplexity move fast and break things before.

The $200/month price point is also a major sticking point. For many developers, that's more than their entire monthly tooling budget. And the per-token billing on top of the subscription? That feels like it could spiral fast for power users. One Redditor put it bluntly: "At $200/month plus tokens, my AI budget now looks like my cloud compute budget."

The Security-Conscious

The OpenClaw comparison cuts both ways. Security researchers are cautiously optimistic about the sandbox approach, but note that sandboxing solves the catastrophic failure case, not the "agent produced completely wrong output" case. An agent that confidently builds you the wrong app inside a sandbox is still a problem. It's just a contained one.

The Risks Nobody's Benchmarked Yet

I'd be doing you a disservice if I didn't cover the concerns. This blog isn't a press release.

No independent benchmarks exist. Perplexity's internal claims (the 4,000-row spreadsheet, websites built in parallel since January) remain self-reported. No third-party audit, no METR evaluation, no reproducible benchmarks have been published for Computer specifically. That matters.

Multi-model routing introduces subtle inconsistencies. Perplexity themselves acknowledge that switching models mid-workflow can create drift. If Claude writes the first half of your codebase and Gemini writes the second half, style and convention mismatches are inevitable. Long-running agents can also drift from stated goals as context windows fill over extended sessions.

The model dependency risk is existential. If Anthropic, OpenAI, or Google restrict API access or simply jack up prices, Perplexity's core value proposition degrades overnight. As Semafor identified: "The big risk for Perplexity is if the models themselves become commodities, rendering useless a service that allows users to switch between the AIs for tasks." Srinivas says he received congratulations from Anthropic and Google on launch day. But strategic goodwill and contractual guarantees are very different things.

Perplexity carries several pending copyright lawsuits from the New York Times, News Corp, the BBC, and others, and recently abandoned its advertising experiment entirely. That makes the $200/month subscription model not just a product choice but a financial necessity.

As The Decoder noted with dry precision: multi-model orchestration is "a convenient argument for a company built on top of other providers' models, though that doesn't make it wrong."

My Take: Why This Actually Matters

Look, I've been following the AI agent space closely, and here's what strikes me about Computer.

We've been approaching AI tooling the wrong way. We keep trying to find the "one model to rule them all," the single AI that does research AND code AND design AND deployment perfectly. That model doesn't exist. It might never exist.

Perplexity Computer flips the script. Instead of asking "which model is best?", it asks "which model is best at this specific subtask?" and then routes accordingly. That's not just a product decision. It's a philosophical shift in how we think about AI systems.

When you need to build a website, you don't use one tool. You use a design tool, a code editor, a deployment service, a database, a CDN. When you build a software team, you don't hire one person to do everything. You hire specialists.

Computer applies the same logic to AI models. And the fact that it can run for months in the background, only checking in when it needs a decision from you? That's not a chatbot. That's a digital colleague.

The question isn't whether multi-model orchestration is the right approach. It obviously is. The question is whether Perplexity can execute it well enough, keep it secure enough, and price it fairly enough to build a sustainable business around it.

At $200/month with per-token billing on top, they're asking a lot. But if Computer delivers even half of what early adopters are reporting (full apps overnight, comprehensive research in minutes, months-long autonomous workflows), the ROI math works for professionals.

Getting Started

If you want to try Computer yourself:

Subscribe to Perplexity Max ($200/month) at perplexity.ai/computer

Claim your 20,000 bonus credits (available for 30 days at launch)

Start with an outcome, not instructions. Tell Computer what you want, not how to get there

Run multiple projects simultaneously. That's where the real power is

Set spending caps early. Per-token billing can add up fast

Pro and Enterprise access is coming in the next few weeks.

Final Thoughts

Perplexity Computer isn't just another AI product launch. It's a bet on how AI work should actually get done. Not by one model brute-forcing its way through everything, but by a team of specialized models coordinated by a reasoning engine that's smarter than any individual one.

Is it perfect? No. The pricing is aggressive. The edge cases haven't been fully explored. The sandbox is only as good as the orchestration behind it.

But in a world where OpenClaw is deleting people's emails and single-model agents are hitting their ceilings, Computer feels like the first product that actually respects the complexity of real-world tasks.

Developer Arnav Gupta placed Perplexity Computer alongside Manus and OpenClaw as "the first three salvos at personal superintelligence," noting that "a new generation of web entities are being born." Whether Computer becomes the defining one depends on something no benchmark can measure: whether 19 models working together can consistently outperform one great model working alone.

The orchestra metaphor is elegant. Now it needs to play a full concert. The sandbox is open. The token meter is running. And 19 models are waiting for your prompt.
