Read Aloud Text to Speech for Accessibility: Best Tools and Key Criteria in 2026

Prithvi Bharadwaj

Discover the best read aloud text to speech tools for accessibility in 2026. Compare features, latency, and pricing to build truly inclusive digital experiences.

According to the World Health Organization, an estimated 1.3 billion people globally, roughly 16% of the world's population, live with a significant disability. A large portion of that group depends on read aloud text to speech technology every day just to access the internet, read a document, or follow along with educational content. The scale of unmet need is hard to overstate.

This piece is for developers building accessible products, content creators reaching wider audiences, and educators designing inclusive learning environments. The goal is straightforward: identify what separates a genuinely accessible TTS solution from one that merely checks a compliance box, and which tools are actually worth integrating.

What Read Aloud Text to Speech Actually Means

Text-to-speech (TTS) technology converts written text into spoken audio. In accessibility contexts, it functions as assistive technology, allowing people with visual impairments, dyslexia, cognitive disabilities, or motor limitations to consume written content without reading it themselves. The W3C Web Accessibility Initiative defines accessible design as ensuring that people with disabilities can perceive, understand, navigate, and interact with digital content. TTS sits at the center of that definition.

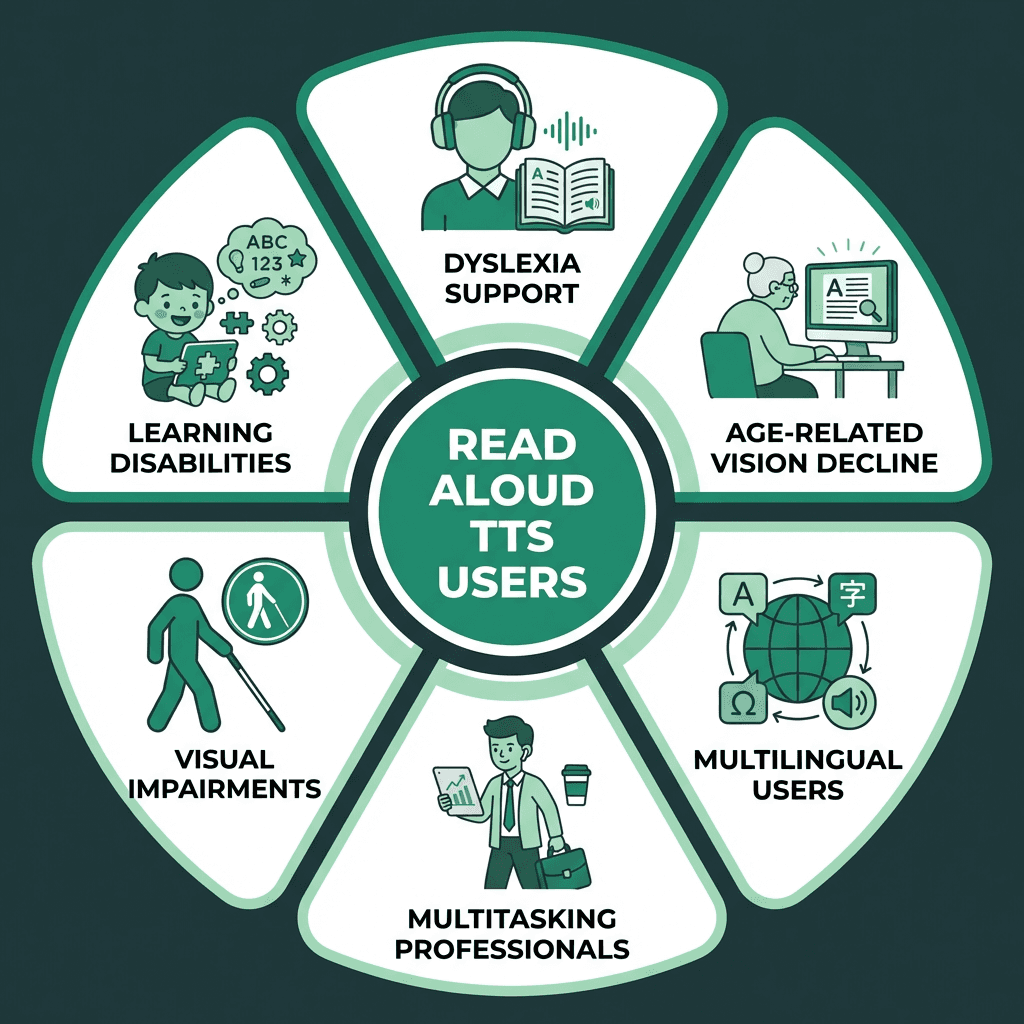

The common mistake is treating read aloud TTS as a single-use tool for blind users. It serves a far broader population: students with learning differences, non-native language speakers, people with attention disorders, elderly users experiencing age-related vision decline, and professionals who prefer audio while multitasking. The Web Content Accessibility Guidelines (WCAG) require that digital content be properly coded to work with assistive technologies like screen readers and TTS software. Because accessibility laws in many jurisdictions reference WCAG, conformance is often a legal obligation as well as an ethical one.

TTS accessibility extends well beyond a single user group.

The Accessibility Criteria That Actually Matter in a TTS Tool

Not all TTS tools are built with accessibility as the primary design goal. Some are built for content production, others for entertainment, and a few are genuinely designed to serve users with disabilities. Before comparing specific tools, it helps to know what criteria separate the functional from the exceptional.

| Criterion | Why It Matters | What to Look For |
|---|---|---|
| Voice Naturalness | Robotic voices cause listener fatigue and reduce comprehension | Neural TTS voices with natural prosody and pacing |
| Language Coverage | Accessibility must extend across languages | Support for 20+ languages with regional variants |
| Latency | Delays break the reading flow for real-time use cases | Low time to first audio, ideally with streaming output for real-time applications |
| SSML Support | Allows fine control over emphasis, pauses, and pronunciation | Full or partial SSML tag support |
| API Accessibility | Developers need to build TTS into accessible apps | Well-documented REST or WebSocket APIs |
| Customization | Different users need different speaking rates and voices | Adjustable speed, pitch, and voice selection |
| WCAG Compatibility | Legal and ethical compliance for web products | Output compatible with screen reader workflows |

Best Read Aloud Text to Speech Tools for Accessibility in 2026

The text-to-speech market is growing rapidly, driven largely by accessibility demand and the expansion of voice-first products.

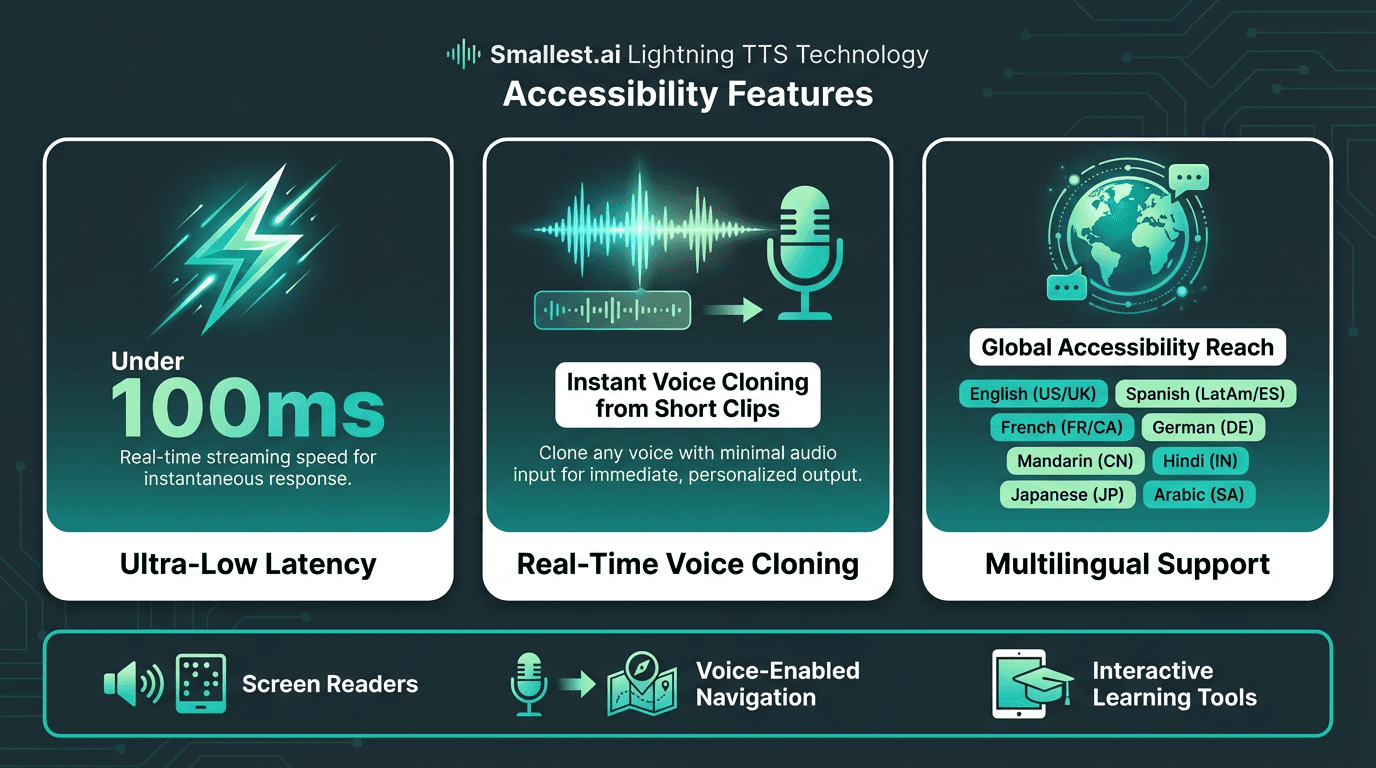

Smallest.ai

Smallest.ai targets developers who need production-grade TTS with minimal latency. Its Lightning TTS delivers ultra-low latency streaming audio, which matters most in real-time accessibility applications: screen reader integrations, live captioning companions, and interactive learning tools. Accessibility is listed as a first-class use case on the platform, and the output is well suited to screen reader and other assistive use cases. The platform supports text-to-speech in multiple languages, making it a strong foundation for globally inclusive products. Pricing starts with a free tier and scales based on usage. Current plans are available on the Smallest.ai Lightning TTS page.

Smallest.ai's Lightning TTS model is designed for real-time, low-latency use cases like voice agents and accessibility tools.

ElevenLabs

ElevenLabs is primarily designed for content creation, with tooling built for narration and dubbing workflows. For developers building real-time accessible applications, its architecture may require additional integration work to meet the demands of production accessibility use cases like streaming and full WCAG compatibility.

Deepgram TTS

Deepgram is a speech-to-text API provider that has added TTS capabilities. As a component rather than a complete accessibility solution, it may require additional integration for accessibility use cases. Teams without significant engineering resources may need to do more development work before accessible output reaches an end user.

Cartesia

Cartesia is a low-latency TTS API component, not a deployable accessibility tool. Integrating it into a functioning accessible product requires substantial engineering work. For a full breakdown of where the architecture differs from a complete solution, the Cartesia AI review on Smallest.ai's blog covers this in detail.

How to Actually Implement TTS for Accessibility

Selecting a tool is the easy part. Implementing it in a way that genuinely serves users with disabilities is where most teams stumble. The following implementation steps hold across nearly every accessible TTS integration:

Audit your content first. Identify which content types need TTS support: articles, UI labels, error messages, form instructions. Over-narrating creates its own usability problems, and not everything needs to be spoken.

Choose the right API architecture. Streaming APIs with low first-byte latency are essential for real-time reading assistants. For pre-generated audio like audiobooks, batch processing is more cost-efficient. Understanding the latency and cost tradeoffs here shapes your entire infrastructure decision.

Use SSML for meaningful content. Mark up abbreviations, numbers, dates, and proper nouns so the TTS engine pronounces them correctly. A TTS engine that reads 'Dr.' as 'drive' rather than 'doctor' changes the meaning entirely.

Test with actual users. Automated tools catch structural issues. Only real users with disabilities can tell you whether the spoken output is actually usable. Include people with dyslexia, visual impairments, and cognitive disabilities in your testing cycle.

Build in user controls. Speed adjustment, pause, rewind, and voice selection are not optional enhancements. They are core accessibility features that directly affect whether a user can engage with your product at all.
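The SSML and user-control steps above can be sketched together. The example below is a minimal illustration, not any vendor's API: `ABBREVIATIONS`, `clamp_rate`, and `to_ssml` are hypothetical names, and the abbreviation table is deliberately naive (it always reads 'Dr.' as 'Doctor', which would be wrong in a street address). `<sub>` and `<prosody>` are standard SSML elements, but support varies by engine, so check what your provider actually accepts.

```python
import re
from xml.sax.saxutils import escape, quoteattr

# Hypothetical pronunciation table; a real product would maintain its own
# and would need context rules (for example "Dr." in a street address).
ABBREVIATIONS = {"Dr.": "Doctor", "approx.": "approximately"}

# Match any known abbreviation that does not directly follow a word character.
_ABBR_PATTERN = re.compile(
    r"(?<!\w)(" + "|".join(re.escape(a) for a in ABBREVIATIONS) + r")"
)


def clamp_rate(percent: int, low: int = 50, high: int = 200) -> int:
    """Keep a user-chosen speaking rate inside an assumed safe range."""
    return max(low, min(high, percent))


def to_ssml(text: str, rate_percent: int = 100) -> str:
    """Wrap plain text in SSML with abbreviation aliases and a speaking rate."""
    # re.split with one capturing group alternates: plain text, match, plain text, ...
    parts = _ABBR_PATTERN.split(text)
    pieces = []
    for index, part in enumerate(parts):
        if index % 2 == 1:
            alias = quoteattr(ABBREVIATIONS[part])
            pieces.append(f"<sub alias={alias}>{escape(part)}</sub>")
        else:
            # Escape &, <, > so user content cannot break the markup.
            pieces.append(escape(part))
    rate = clamp_rate(rate_percent)
    return f'<speak><prosody rate="{rate}%">{"".join(pieces)}</prosody></speak>'


if __name__ == "__main__":
    print(to_ssml("Dr. Lee will see approx. 20 patients & their families.", 80))
```

Routing the user's speed setting through one clamped parameter keeps the control predictable, and escaping the text before wrapping it avoids malformed markup when content contains characters like `&`.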

TTS for Specific Accessibility Use Cases

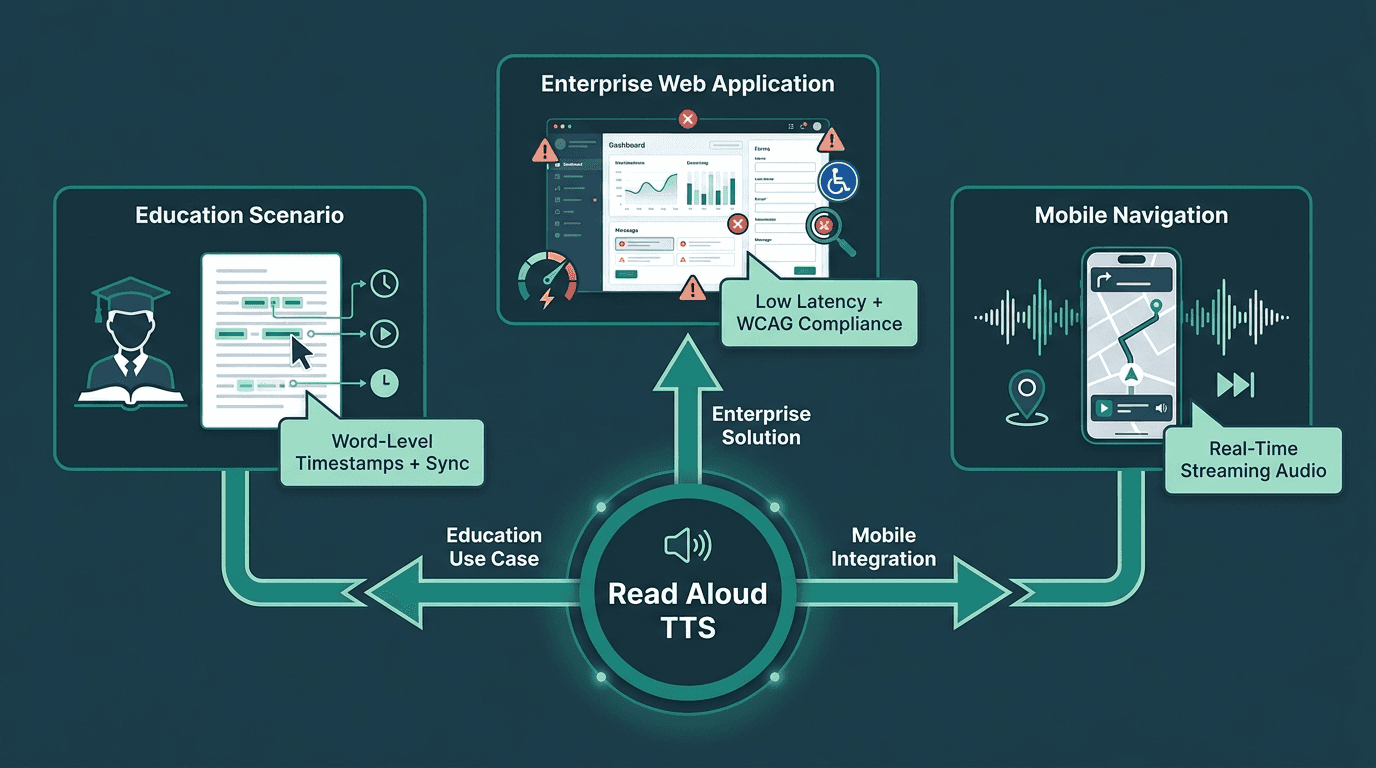

A reading assistant for a student with dyslexia has fundamentally different technical requirements than a navigation voice for a visually impaired user on a mobile app. Collapsing these into a single configuration leads to over-engineering some use cases and under-serving others.

For educational content, word-by-word highlighting synchronized with audio is one of the most effective interventions for readers with dyslexia. This requires a TTS API that returns word-level timestamps alongside the audio stream. For long-form material like textbooks or documentation, TTS for audiobooks is a proven format that lets users consume content at their own pace without visual strain.
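As a rough sketch of how synchronized highlighting can work once word-level timestamps are available: the player reports its current position, and the UI looks up which word to highlight. The `WordTiming` shape and the `active_word_index` helper below are assumptions for illustration; real APIs return timestamps in their own formats.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class WordTiming:
    word: str
    start: float  # seconds from the beginning of the audio
    end: float    # seconds from the beginning of the audio


def active_word_index(timings: Sequence[WordTiming], position: float) -> Optional[int]:
    """Return the index of the word to highlight at a playback position.

    Timings are assumed to be sorted by start time. Short gaps between words
    keep the previous word highlighted; before the first word and after the
    last word ends, nothing is highlighted.
    """
    # A real player would cache this list instead of rebuilding it per call.
    starts = [t.start for t in timings]
    index = bisect_right(starts, position) - 1
    if index < 0:
        return None
    if index == len(timings) - 1 and position >= timings[index].end:
        return None
    return index


if __name__ == "__main__":
    words = [
        WordTiming("The", 0.00, 0.20),
        WordTiming("quick", 0.25, 0.60),
        WordTiming("fox", 0.65, 0.90),
    ]
    for t in (0.10, 0.30, 0.70, 1.00):
        i = active_word_index(words, t)
        print(t, words[i].word if i is not None else None)
```

Binary search keeps the lookup cheap enough to run on every playback tick, even for long documents.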

Enterprise software and web applications shift the priority toward low latency and WCAG compatibility. UI elements, error messages, and navigation prompts need to be spoken instantly and accurately. A 2-second delay on a form validation error is not merely annoying. For a user relying on TTS as their primary interface, it breaks the entire interaction flow. That is where the best text-to-speech tools with streaming architecture hold a clear advantage over batch-processing alternatives.
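One way to make the latency requirement testable is to measure the time until the first audio chunk arrives, separately from the time to finish the whole stream. The sketch below assumes only that the TTS client exposes audio as a lazy iterator of byte chunks; `measure_stream` and `fake_tts_stream` are hypothetical names, and the fake stream stands in for a real network call.

```python
import time
from typing import Callable, Iterable, Iterator, NamedTuple


class StreamTiming(NamedTuple):
    first_audio_s: float  # seconds until the first non-empty chunk arrived
    total_s: float        # seconds until the stream was exhausted
    total_bytes: int


def measure_stream(
    chunks: Iterable[bytes],
    clock: Callable[[], float] = time.perf_counter,
) -> StreamTiming:
    """Consume an audio stream and record first-chunk and total latency."""
    start = clock()
    first_audio = None
    total_bytes = 0
    for chunk in chunks:
        if first_audio is None and chunk:
            first_audio = clock() - start
        total_bytes += len(chunk)
    total = clock() - start
    if first_audio is None:
        raise ValueError("stream ended without producing audio")
    return StreamTiming(first_audio, total, total_bytes)


def fake_tts_stream() -> Iterator[bytes]:
    """Stand-in for a streaming TTS response; delays are arbitrary."""
    time.sleep(0.05)
    yield b"\x00" * 320
    time.sleep(0.05)
    yield b"\x00" * 320


if __name__ == "__main__":
    print(measure_stream(fake_tts_stream()))
```

For a batch API the two numbers are effectively the same, which is the practical difference the paragraph above describes: with streaming, playback can begin at `first_audio_s` rather than `total_s`.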

Match your TTS architecture to the specific accessibility use case, not a one-size-fits-all setup.

Advanced Considerations: Voice Cloning, Multilingual Support, and Compliance

This section is for teams building production accessibility systems at scale. If you are still evaluating which tool to start with, the earlier sections are the more relevant starting point.

Voice cloning introduces an accessibility dimension that rarely gets discussed. For users with degenerative conditions like ALS who are losing their ability to speak, cloning their voice before that loss occurs creates a deeply personal form of assistive technology. Smallest.ai offers voice cloning capabilities applicable in these contexts. The ethical and consent frameworks around voice cloning are still maturing, so any deployment in healthcare or assistive technology should include explicit user consent protocols and clear data governance policies.

Multilingual accessibility is consistently underestimated. A Spanish-speaking user with a visual impairment deserves the same TTS quality as an English speaker, but many tools have a sharp quality drop-off outside their primary language. When evaluating tools for global deployment, test the actual audio output in each target language rather than relying on a supported-language count. Regional accents matter too: a Brazilian Portuguese speaker and a European Portuguese speaker will have very different experiences with a tool that offers only one variant.
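A small sketch of why regional variants deserve explicit handling: when the exact variant is missing, a product can fall back to another variant of the same language, but it should know that it did so. `pick_voice_locale` and `VoiceMatch` are hypothetical names, and the locale strings are plain language-region tags used for illustration.

```python
from typing import NamedTuple, Optional, Sequence


class VoiceMatch(NamedTuple):
    locale: str  # the locale string as the provider lists it
    exact: bool  # False when only the language matched, not the region


def _normalize(tag: str) -> str:
    return tag.replace("_", "-").lower()


def pick_voice_locale(requested: str, available: Sequence[str]) -> Optional[VoiceMatch]:
    """Prefer an exact regional match, then any variant of the same language."""
    wanted = _normalize(requested)
    by_tag = {_normalize(tag): tag for tag in available}
    if wanted in by_tag:
        return VoiceMatch(by_tag[wanted], True)
    language = wanted.split("-")[0]
    for tag, original in by_tag.items():
        if tag.split("-")[0] == language:
            return VoiceMatch(original, False)
    return None


if __name__ == "__main__":
    voices = ["en-US", "pt-PT"]
    print(pick_voice_locale("pt-BR", voices))
    print(pick_voice_locale("es-MX", voices))
```

Surfacing the `exact` flag lets the interface tell a Brazilian Portuguese user that only a European Portuguese voice is available, instead of silently substituting it.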

On compliance, WCAG 2.2 is the current standard, with WCAG 3.0 in development. Building TTS integrations that hold up means building on semantic HTML and proper ARIA labels, and ensuring that your TTS output does not conflict with native screen reader behavior. The goal is augmentation of existing assistive technology, not replacement of it.

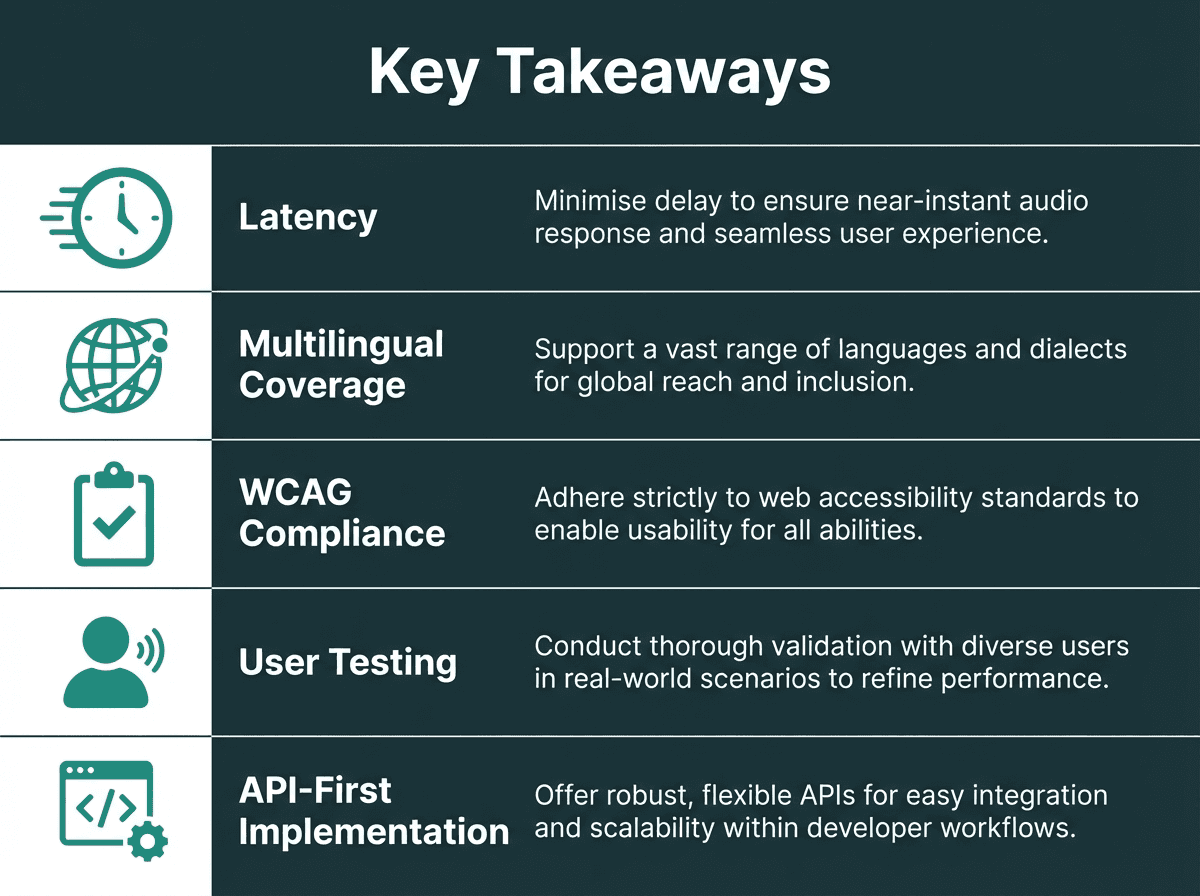

Key Takeaways and Next Steps

With 1.3 billion people globally living with a significant disability, the gap between what exists and what is needed is not shrinking on its own. The tools covered here each address part of that gap, but with different strengths and different use case fits.

If accessibility is a core product requirement rather than an afterthought, TTS infrastructure decisions matter from day one. Latency determines whether a reading assistant feels responsive or broken. Voice quality determines whether users with cognitive or learning disabilities can actually comprehend the output. Language coverage determines who your product can serve. These are not implementation details; they are product decisions.

Five principles that separate effective accessibility TTS from checkbox compliance.

Most digital content remains inaccessible to a significant share of the global population, and the tools meant to close that gap are often too slow, too robotic, or too narrow in language support to do the job well. Smallest.ai's Lightning TTS is built to address the latency and voice quality requirements that real accessibility use cases demand. Whether you are integrating TTS into a web application, building a reading assistant, or producing accessible audio content, Smallest.ai's text-to-speech platform gives you the infrastructure to do it properly.

What is read aloud text to speech and how does it support accessibility?

Which TTS tool is best for developers building accessible web applications?

Does text to speech satisfy WCAG accessibility requirements?

TTS is one component of an accessible experience, not a complete solution. WCAG requires that content be perceivable, operable, understandable, and robust. TTS addresses the perceivable dimension, but you also need proper semantic markup, keyboard navigation, and contrast ratios. Treat TTS as part of a broader accessibility strategy, not a standalone compliance fix.

What is the difference between a screen reader and a read aloud TTS tool?